Earlier this year, I did a webinar for the APNIC Academy on Learning from Honeypots. In the presentation, I shared some insights from our Community Honeynet Project, and information about the infrastructure and data collected. Since then, I have received some questions about the tools we use to run the community project.

In this post, I will highlight the main tools we have set up to run the project with our community partners. It is not meant to be a comprehensive list, but hopefully it will give an idea of the effort required to run a similar project. At the same time, I think this is a good opportunity to acknowledge and thank the various free and open source projects that we have benefited from.

Community Honey Network (CHN)

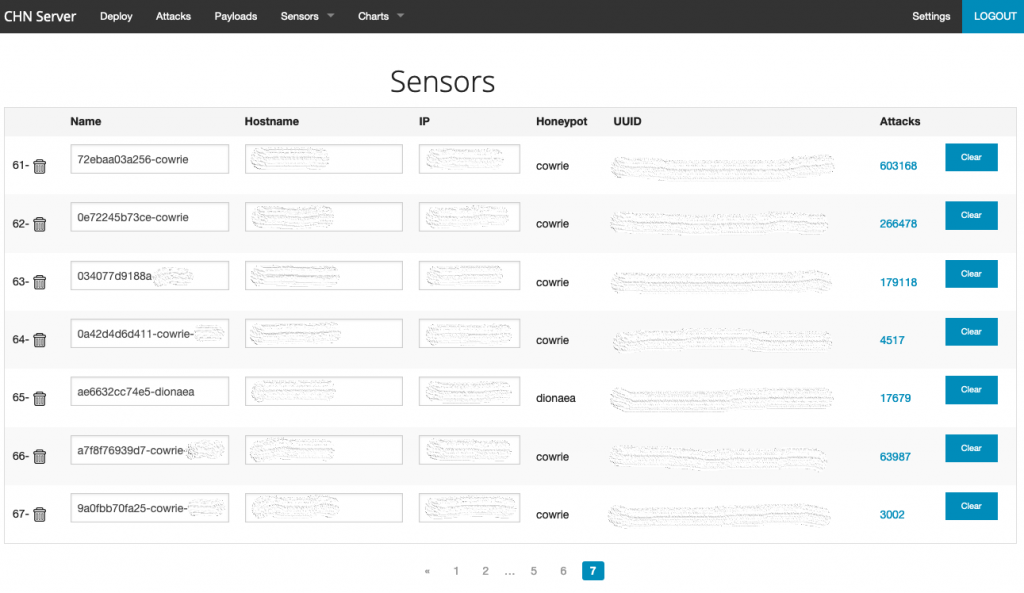

When we started the project a couple of years ago, we used a tool called the Modern Honey Network (MHN). In a nutshell, MHN makes it easier to deploy a distributed honeynet. It consists of tools and scripts that deploy different types of honeypots, collect their logs, and provide basic visualization. Last year, we started to use the Community Honey Network (CHN), which is based on MHN but adds new features and great documentation. The developers are also quite responsive on GitHub.

Read more: Managing Honeypots with MHN

CHN lets you choose from different types of honeypots for deployment, such as Cowrie, Dionaea, and RDPHoney. It is worth noting that these are open source tools that are maintained separately.

ELK Stack

Deploying honeypots is half of the story. Doing something useful with the artefacts collected is the other half. Both CHN and MHN collect logs from the distributed honeypots using a tool called hpfeeds. These logs can be converted to JSON, which the various components of the ELK stack can then consume. For instance, we use Logstash for basic enrichment and Filebeat to ship certain logs (that is, those from Suricata) to our Logstash and Elasticsearch cluster. And of course, we use Kibana for visualization and ‘story-telling’.
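To make the "convert to JSON, then enrich" step more concrete, here is a minimal Python sketch. It is not how CHN, hpfeeds, or Logstash implement it. The function name `normalize_event`, the sensor label, and the payload fields (`peerIP`, `peerPort`, `hostPort`) are assumptions chosen for illustration only.

```python
import json
from datetime import datetime, timezone


def normalize_event(channel: str, payload: bytes, sensor: str) -> str:
    """Turn one raw honeypot message into a single JSON line.

    Assumes the payload is already a UTF-8 encoded JSON object;
    real honeypots may publish other formats.
    """
    event = json.loads(payload.decode("utf-8"))
    # Basic enrichment: record where the event came from.
    event["channel"] = channel
    event["sensor"] = sensor
    # Only add a timestamp if the honeypot did not supply one.
    event.setdefault("timestamp", datetime.now(timezone.utc).isoformat())
    return json.dumps(event, sort_keys=True)


# Hypothetical message, using documentation-range IP addresses.
raw = b'{"peerIP": "203.0.113.7", "peerPort": 51234, "hostPort": 22}'
print(normalize_event("cowrie.sessions", raw, sensor="sensor-01"))
```

Writing one JSON object per line keeps the output easy for a log shipper to pick up and forward.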

ElastAlert

With the data stored and searchable in Elasticsearch, we use ElastAlert to send notifications to other platforms we use, such as Slack or email. This saves us from having to log into Kibana every time we need to search for something. With ElastAlert, you build rules that are ‘triggered’ when certain conditions are met, and you are alerted when that happens.
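The idea of a rule that triggers when a condition is met can be sketched in a few lines of Python. This is a simplified illustration of a frequency-style rule (N events from the same source within a time window), not ElastAlert's own code or configuration format. The class name `FrequencyRule` and its parameters are hypothetical, and the sketch assumes events arrive in timestamp order.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class FrequencyRule:
    """Trigger when `num_events` events share a key within `timeframe`."""

    def __init__(self, num_events: int, timeframe: timedelta):
        self.num_events = num_events
        self.timeframe = timeframe
        self.windows = defaultdict(deque)  # key -> recent timestamps

    def add_event(self, key: str, ts: datetime) -> bool:
        window = self.windows[key]
        window.append(ts)
        # Drop timestamps that have fallen out of the time window.
        while window and ts - window[0] > self.timeframe:
            window.popleft()
        if len(window) >= self.num_events:
            window.clear()  # reset so the same burst alerts only once
            return True
        return False


rule = FrequencyRule(num_events=3, timeframe=timedelta(minutes=5))
start = datetime(2020, 1, 1, 12, 0, 0)
for offset in (0, 60, 120):
    if rule.add_event("203.0.113.7", start + timedelta(seconds=offset)):
        print("alert: repeated connections from 203.0.113.7")
```

In a real deployment the alert would go to Slack or email rather than being printed.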

Suricata

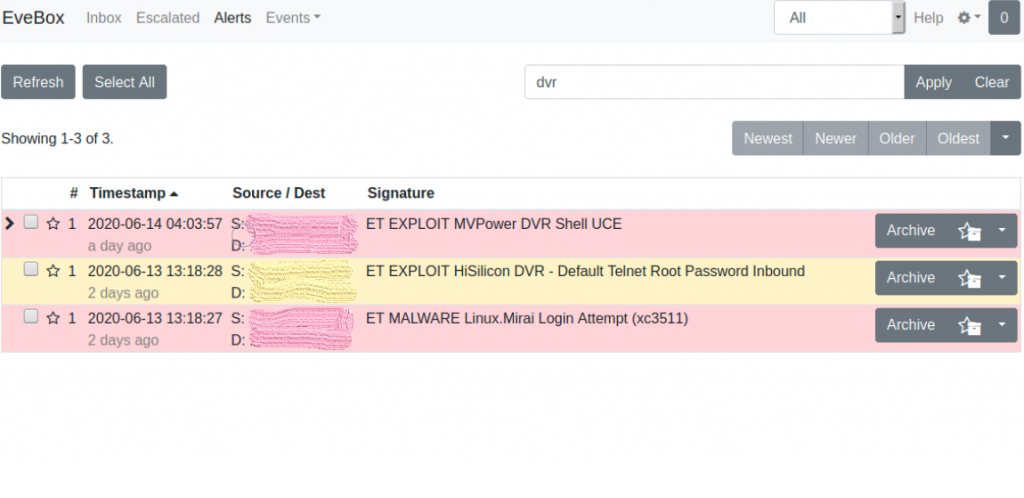

The logs from the various honeypots we deployed are normally sufficient for analysis or taking further action. We use Suricata, a high-performance network intrusion detection system, intrusion prevention system and network security monitoring engine, to provide additional context. This means that we can get additional information from the alert signatures and other network-related data. We ship Suricata logs to the Elasticsearch cluster with Filebeat. In fact, Filebeat has a Suricata module that makes this process very straightforward.

Speaking of Suricata logs (eve.json), I must also mention that for some of our training we use EveBox for analysis. EveBox is also part of a toolkit called SELKS.
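As a rough illustration of the context eve.json can provide, the Python sketch below counts alert signatures in a handful of EVE-style records. It relies on the `event_type` and `alert.signature` fields that Suricata writes for alert events. The sample records and the helper name `count_signatures` are made up for this example.

```python
import json
from collections import Counter


def count_signatures(lines):
    """Count alert signatures in an iterable of eve.json lines."""
    counts = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip partially written or corrupt lines
        if event.get("event_type") == "alert":
            signature = event.get("alert", {}).get("signature", "unknown")
            counts[signature] += 1
    return counts


# Made-up sample records; a real run would iterate over open("eve.json").
sample = [
    '{"event_type": "alert", "src_ip": "203.0.113.7", "alert": {"signature": "Example SSH scan"}}',
    '{"event_type": "flow", "src_ip": "203.0.113.7"}',
    '{"event_type": "alert", "src_ip": "198.51.100.9", "alert": {"signature": "Example SSH scan"}}',
]
for signature, hits in count_signatures(sample).most_common():
    print(f"{hits:4d}  {signature}")
```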

TheHive and Cortex

The tools mentioned so far are core to the distributed community honeynet project. However, we have additional tools that help us keep track of things and perform analysis. TheHive allows us to manage ‘cases’ or incidents. To each case we can add relevant observables (for example, IP addresses or domain names), notes, and other information. Furthermore, Cortex (aka the brain) allows us to query different databases for what is known about a particular observable. All of this gives additional context to what we are seeing or dealing with. Most importantly, I have my notes in a single location.

I know that I am not doing justice to TheHive and Cortex with this short description; they have other useful capabilities, such as MISP integration.

Epilogue

I have shared some of the tools we deploy for the community honeynet project. I think it is important to note the value of these open source projects. They not only help enable the initiative but also contribute to the overall security of the ecosystem. Please consider supporting the projects in any way you can!
