It’s a warm summer evening, the kind that’s too nice to be stuck in the kitchen cooking a meal. As the system operator of a food delivery app, you expect to receive a lot of orders. However, some of your customers are complaining about a system error with your app. Many applications today are hosted in containers inside virtual machines (VMs) in public data centre clouds, and the health monitor on your end looks good, so you quickly open a ticket with your cloud operator.

While the data centre reliability engineers are diagnosing the problem, they start to receive similar service disruption tickets from other customers. Checking the logs, they find that a process called vswitchd restarted. Their system report script did not alert them to anything else, so they close the tickets and credit everyone for the brief outage, as per their service-level agreement with their customers.

Over the following months, vswitchd restarts on only a few more servers. The restarts stay within the configured thresholds of the host servers’ machine-learning parameters and so evade the cloud operator’s intrusion detection system. Months later, sensitive private data is leaked to the public, which leads to a flurry of legal, political and economic issues for the cloud operator and all the affected customers.

Virtual networks in data centres

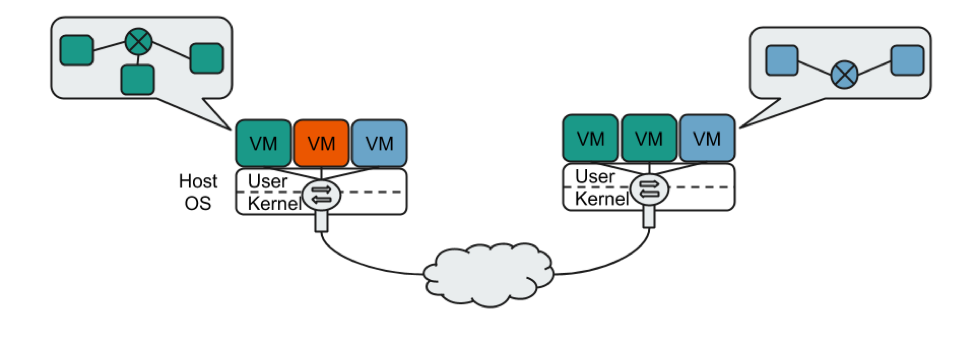

The vswitchd process that restarted in the scenario above is a virtual switch. It is an important piece of software that resides in the host operating system and virtualizes the network of VMs. It has two main functions:

- To inter-connect all your VMs in the various servers in the data centre.

- To isolate your network of VMs from other customers/tenants.

Figure 1 — A high-level view of virtual networking in the cloud. The green and blue VMs are connected in their own networks and are isolated from each other.

With more people migrating their applications to the cloud, we have introduced more functionality into the virtual switch, for example, firewalls, network address translation and load balancing (check out the Azure and GCP talks at NSDI’18). This is a double-edged sword. On the one hand, performing many network functions in the virtual switch improves performance (higher throughput and lower latency). On the other hand, it increases the complexity of virtual switches, which in turn increases the chance of introducing a security vulnerability.

How to attack virtual networks

Introducing new network applications into the virtual switch usually involves writing network protocol parsers, which is non-trivial and prone to error. This makes virtual switches, and especially their parsing logic, an excellent target for vulnerability discovery with a fuzzer such as the much-loved and well-respected american fuzzy lop (AFL).

We at the Technische Universität Berlin, in collaboration with TU Delft, the Max-Planck-Institut für Informatik and the University of Vienna, tested this hypothesis for a paper we presented at ACM SOSR last year (paper, slides). To our good fortune, we found a few vulnerabilities in a popular virtual switch, Open vSwitch (OvS), two of which were reported in CVE-2016-2074.

In this post, I will describe some of the take-home messages from our project, as well as the research that has grown out of it.

Taking control

Our exploit, which allowed us to control the operating system running OvS with root privileges, targeted the vulnerable MPLS label stack parsing logic of OvS. The vulnerability was present because packet parsing in virtual switches and Software Defined Networks may be optimized for performance rather than following standards. For example, RFC 3032, which specifies MPLS label stack encoding, states that forwarding decisions are to be based on the top label. OvS, however, attempted to parse all the labels and other network layer headers as well.
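To make the top-label point concrete, here is a minimal Python sketch of standards-minded MPLS parsing. It is not the OvS code and not the fix that was applied to OvS. The function names and the `max_depth` limit are hypothetical, chosen for illustration. Only the 32-bit entry layout (20-bit label, 3-bit traffic class, 1-bit bottom-of-stack flag, 8-bit TTL) comes from RFC 3032.

```python
import struct
from typing import List, NamedTuple

ENTRY_LEN = 4  # each MPLS label stack entry is one 32-bit word


class MplsEntry(NamedTuple):
    label: int    # 20-bit label value
    tc: int       # 3-bit traffic class field
    bottom: bool  # S bit, set on the last entry of the stack
    ttl: int      # 8-bit time to live


def encode_entry(label: int, tc: int, bottom: bool, ttl: int) -> bytes:
    """Build one label stack entry (helper for the usage example)."""
    word = (label << 12) | (tc << 9) | (int(bottom) << 8) | ttl
    return struct.pack("!I", word)


def parse_entry(payload: bytes, offset: int = 0) -> MplsEntry:
    """Decode the entry at `offset`, refusing to read past the buffer."""
    if offset < 0 or offset + ENTRY_LEN > len(payload):
        raise ValueError("truncated MPLS label stack entry")
    (word,) = struct.unpack_from("!I", payload, offset)
    return MplsEntry(
        label=word >> 12,
        tc=(word >> 9) & 0x7,
        bottom=bool((word >> 8) & 0x1),
        ttl=word & 0xFF,
    )


def parse_stack_bounded(payload: bytes, max_depth: int = 3) -> List[MplsEntry]:
    """Walk the stack, but give up after `max_depth` entries (assumed limit)."""
    entries = []
    for depth in range(max_depth):
        entry = parse_entry(payload, depth * ENTRY_LEN)
        entries.append(entry)
        if entry.bottom:
            return entries
    raise ValueError("label stack deeper than max_depth")


# Two-entry stack: label 16 on top, label 17 at the bottom.
stack = encode_entry(16, 0, False, 64) + encode_entry(17, 0, True, 64)
top = parse_entry(stack)               # forwarding only needs this entry
print(top.label, top.bottom)           # 16 False
print(len(parse_stack_bounded(stack))) # 2
```

A forwarding decision only needs `parse_entry(stack)`. If deeper inspection is wanted, an explicit length check on every read and a hard cap on depth keep a crafted packet from driving the parser past its buffers.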

In cloud systems such as OpenStack, Microsoft Azure and Google Cloud Platform, this means that if we have access to a VM in the cloud (we can affordably rent one), we can send a specially crafted packet that, when processed by the virtual switch, gives us privileged control of the host (recall that the virtual switch runs in the host and, by default, with high privileges). We can then patch the virtual switch software and spread to other servers in the data centre like a worm.

However, compromising the centralized controller would give us access to, and control over, all other servers. The server hosting the controllers would therefore be our next target and, from there, all the other servers. Our measurements and estimates with OpenStack revealed that a data centre with hundreds of servers can be compromised in a matter of a few minutes.

How we can prevent such attacks

Having shown what was possible, we’ve turned our attention to ensuring that virtual switches, and the way they are currently deployed in cloud architectures, do not fall victim to what I described above. To do this, we’ve used compile-time software protection mechanisms such as stack canaries and position independent executables (PIE). Yes, this has meant compiling and installing the virtual switch software from source rather than from a package manager.

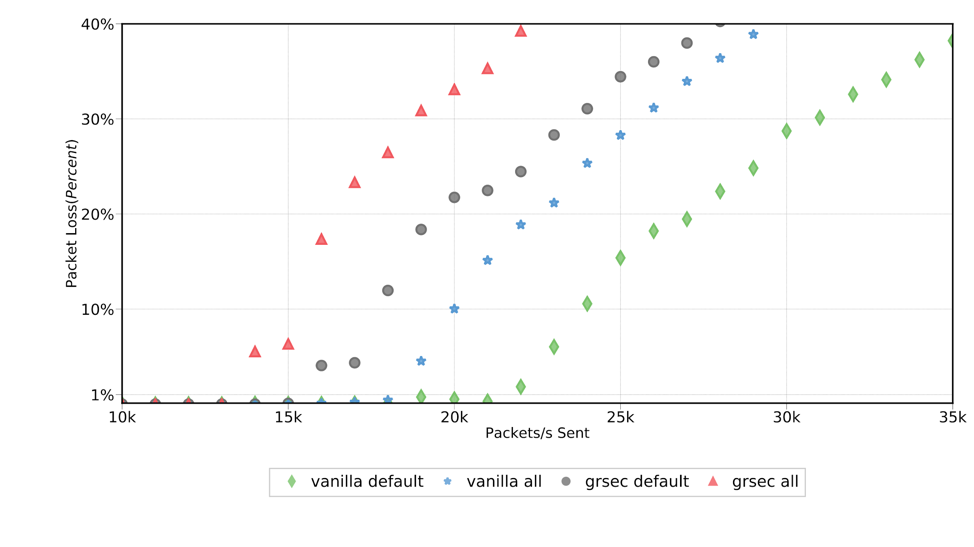

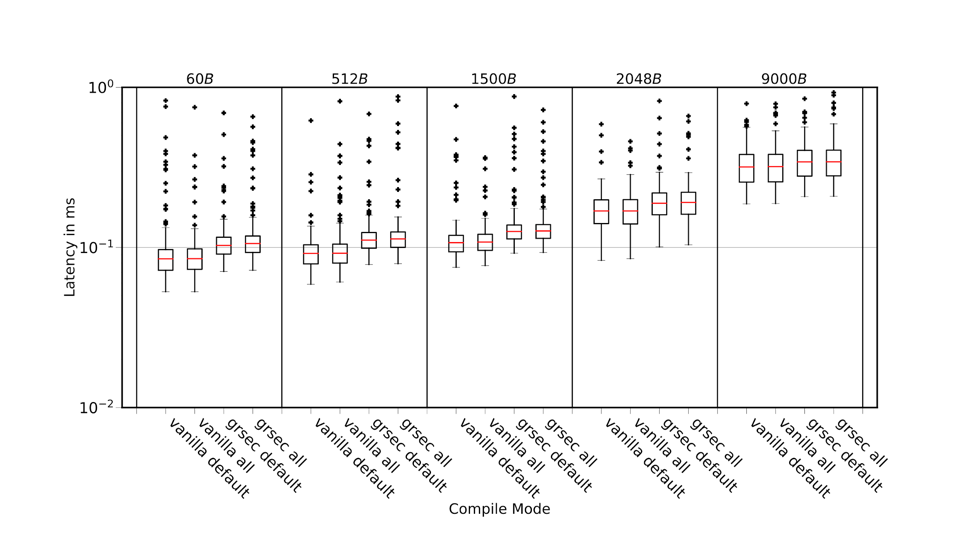

We then measured the impact of those two countermeasures on the forwarding performance of OvS. Our experiments indicated that they imposed a small penalty on throughput (the green diamond and blue star in Figure 2) and a negligible hit on latency (shown as “vanilla all” in Figure 3) for the user-space packet processing of OvS, which is where the vulnerabilities were found. Therefore, they should be used in virtual switches to help prevent attackers from exploiting buffer overflows in packet parsing.

Figure 2 — The forwarding throughput comparison of OvS’s slow path with and without the compile-time software protection mechanisms.

Figure 3 — The forwarding latency comparison of OvS’s slow path with and without the compile-time software protection mechanisms.

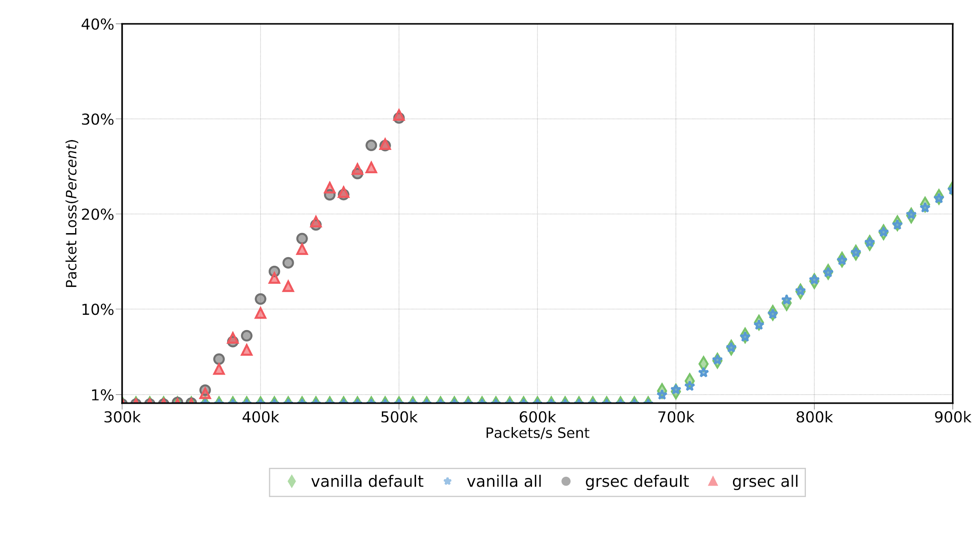

OvS and other virtual switches also process packets in the kernel (fast path) for low latency and high throughput. Our measurements on protecting the fast path using the grsec Linux kernel patch revealed a considerable impact on throughput: the grsec patches reduce the maximum throughput by a factor of 2, as shown in Figure 4. This makes the grsec patches unlikely to be used in production environments where high forwarding performance is required.

Figure 4 — The forwarding throughput comparison of OvS’s fast path with and without the compile-time software protection mechanisms.

What next

We are currently working on designing virtual switches that combine security and performance, based on secure design principles. The key idea to increase security is twofold.

- First, the virtual switch is isolated from the host by running it in its own environment, for example, in a VM.

- Second, we create multiple virtual switches to maintain virtual network isolation even when one virtual switch is compromised.

For high performance, we are considering NIC virtualization techniques such as Single-Root I/O Virtualization (SR-IOV) and/or user-space packet processing such as OvS with Intel’s DPDK. We believe we have promising results to share in the coming months.

Have you had your VM compromised? How did you / your data centre deal with it? Comment below.

Kashyap Thimmaraju is a network security researcher at the Technical University of Berlin.
