On 14 September 2023, I received a call from a transit provider (AS17439) in a location far from where I actually operate. They were concerned that I was potentially leaking and/or hijacking a route (103.11.84.0/24) that originates from one of their downstream BGP customers (AS132052), through my AS149794, based on RIPE’s BGPlay data.

However, after investigating the issue myself using various tools, I determined that they had misinterpreted the data from BGPlay. In this article, I will explain what actually happened and why it is important for network managers, administrators, and engineers to have a good understanding of how to read BGP data correctly.

Context

I have peered my Autonomous System Number (ASN) with RIPE’s RIS, whereby I export the full routing table from my point of view to RIPE for both IPv4 and IPv6. This gives BGPlay full access to routing information from my ASN.

BGPlay is a tool that allows network operators to view the routing tables of other networks. This can be useful for troubleshooting routing problems and for understanding how traffic is flowing across the Internet.
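For readers who want to pull the same kind of history themselves, here is a minimal sketch that only builds a query URL for the RIPEstat Data API's BGPlay data call. The endpoint and the `resource`, `starttime`, and `endtime` parameter names are written from memory of the public RIPEstat documentation, so treat them as assumptions and check the documentation before relying on them. The one-hour window is purely illustrative, and `bgplay_url` is a hypothetical helper name.

```python
from urllib.parse import urlencode

# Assumed endpoint of the RIPEstat "bgplay" data call (verify against the docs).
RIPESTAT_BGPLAY = "https://stat.ripe.net/data/bgplay/data.json"


def bgplay_url(prefix, start, end):
    """Build a query URL for the routing history of one prefix.

    Hypothetical helper: it only assembles the URL and does not contact
    the network.
    """
    query = urlencode({"resource": prefix, "starttime": start, "endtime": end})
    return RIPESTAT_BGPLAY + "?" + query


# Illustrative window around the event discussed in this article.
url = bgplay_url("103.11.84.0/24", "2023-09-14T08:00", "2023-09-14T09:00")
print(url)
```

Opening the resulting URL in a browser, or fetching it with any HTTP client, should return JSON that can be compared with what the BGPlay widget draws.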

How to avoid BGP false alarms

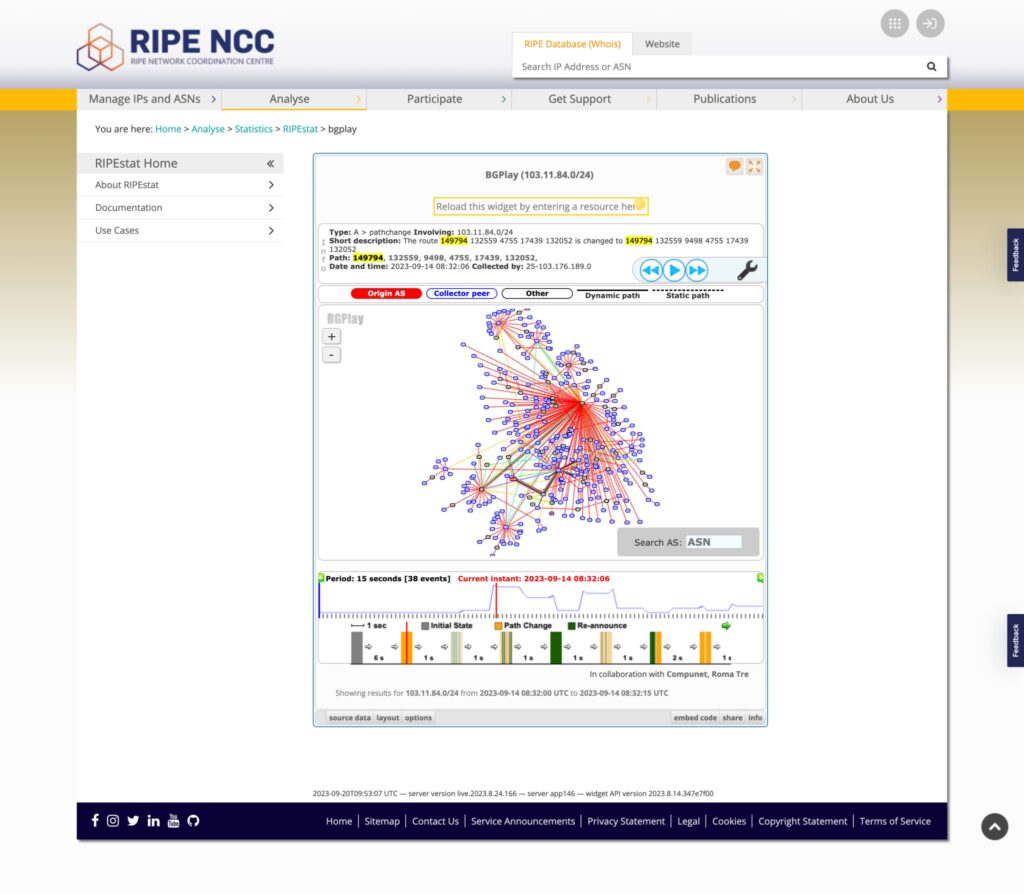

In this harmless incident, the staff of the transit provider saw the BGP data for 103.11.84.0/24 from my ASN’s point of view on BGPlay at a very specific time (8:32:06 on 14 September 2023), though I am unsure of the timezone used by BGPlay.

The AS-PATH of the route showed my ASN on the left and the origin (AS132052) on the right. Reading from left to right, the ASN immediately after mine is AS132559, my upstream transit provider, which shows that I learnt the route directly from them.

The transit provider in question misinterpreted this data to mean that I was hijacking their prefix, leaking it, or both. However, this is not the case. What we are seeing here is a simple route output from my ASN towards RIPE RIS, which shows that the path on my end towards this specific prefix changed from ‘149794 132559 4755 17439 132052’ to ‘149794 132559 9498 4755 17439 132052’. The origin (AS132052) and their transit provider (AS17439) are the same in both paths; the only difference is that AS9498 now appears between AS132559 and AS4755, upstream of my network.
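The comparison between the two paths can be sketched in a few lines of Python. This is only an illustration of how to read the change, not a tool used during the incident; `parse_as_path` and `describe_change` are hypothetical helper names, and the sketch assumes plain space-separated AS-PATHs without AS_SETs.

```python
def parse_as_path(text):
    """Split a space-separated AS-PATH into a list of integers."""
    return [int(asn) for asn in text.split()]


def describe_change(old, new):
    """Summarize what differs between two AS-PATHs seen by the same observer."""
    old_path, new_path = parse_as_path(old), parse_as_path(new)
    return {
        # Left-most ASN: the network exporting the route to the collector.
        "observer_unchanged": old_path[0] == new_path[0],
        # Right-most ASN: the origin of the prefix.
        "origin_unchanged": old_path[-1] == new_path[-1],
        "origin": new_path[-1],
        "added": [asn for asn in new_path if asn not in old_path],
        "removed": [asn for asn in old_path if asn not in new_path],
    }


OLD = "149794 132559 4755 17439 132052"
NEW = "149794 132559 9498 4755 17439 132052"

change = describe_change(OLD, NEW)
print(change)
```

For the two paths above, the origin stays at 132052 and the only ASN added is 9498, which is what one would expect from an ordinary upstream path change rather than a hijack.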

During my conversation with the transit provider, I pointed them to other tools for confirming the situation. The following are the tools I used myself:

- Cloudflare Radar.

- bgp.tools’ super looking glass.

- Some data output from Kentik — shout out to Doug Madory and Wilhelm Schonfeldt who were kind enough to support me with some data output from Kentik’s platform.

Conclusion

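This is a common mistake that network operators make, especially those who are not familiar with how to read BGP data correctly. It is important to remember that the AS-PATH simply shows the path a route announcement has taken from the origin AS (right-most) to the current ASN (left-most). Read in the other direction, from left to right, it is the sequence of networks that traffic from the current ASN is expected to cross to reach the origin.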

In the case of a route hijack, it is easy to spot the event by looking at the right-most ASN in the AS-PATH. The right-most ASN will be the origin AS for said route and one can determine if this ASN is valid or invalid by checking the route object and/or RPKI ROA. However, there can also be a case of a route hijack via ASN hijacking.
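A minimal sketch of the origin check described above, loosely following the valid / invalid / not-found logic of RPKI route origin validation. The ROA list here is hypothetical and exists only to make the example runnable; real ROA data would come from an RPKI validator or a public dataset. `validate_origin` is a hypothetical helper, and AS_SETs and other edge cases are ignored.

```python
import ipaddress

# Hypothetical ROAs as (prefix, max_length, origin_asn), for illustration only.
HYPOTHETICAL_ROAS = [
    ("103.11.84.0/22", 24, 132052),
]


def validate_origin(prefix, as_path, roas):
    """Classify a route as 'valid', 'invalid' or 'not-found' against ROAs."""
    net = ipaddress.ip_network(prefix)
    origin = int(as_path.split()[-1])  # right-most ASN is the origin
    covered = False
    for roa_prefix, max_len, roa_asn in roas:
        roa_net = ipaddress.ip_network(roa_prefix)
        # A ROA covers the route if the route's prefix sits inside the ROA prefix.
        if net.version == roa_net.version and net.subnet_of(roa_net):
            covered = True
            if roa_asn == origin and net.prefixlen <= max_len:
                return "valid"
    return "invalid" if covered else "not-found"


print(validate_origin("103.11.84.0/24",
                      "149794 132559 9498 4755 17439 132052",
                      HYPOTHETICAL_ROAS))
```

Under this hypothetical ROA, a path ending in 132052 is valid, while the same prefix with any other right-most ASN would be invalid. Note that a check like this cannot catch a hijack in which the attacker forges the legitimate origin ASN at the end of the path.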

In the case of a route leak, the leak can occur between any two adjacent ASNs in the AS-PATH, especially in provider-to-provider ASN relationships. Detecting a route leak is not as straightforward, because it depends on knowing the business relationships between the networks involved, but it can be done by using a myriad of publicly available tools such as looking glasses and the tools I listed above.
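One common way to reason about leaks is the "valley-free" rule: once an announcement has been passed to a peer or down to a customer, it should not be passed up to a provider or across to another peer again. The sketch below applies that rule to a path. It uses documentation ASNs (64496 to 64511) and an entirely hypothetical relationship table, because real business relationships are not public; `find_leak` and `REL` are illustrative names, not part of any tool.

```python
# REL[(a, b)] says what b is to a. Hypothetical data, for illustration only.
REL = {
    (64500, 64499): "provider",  # 64499 sells transit to 64500
    (64499, 64498): "peer",
    (64498, 64497): "customer",
    (64497, 64496): "customer",
    (64497, 64501): "provider",  # 64501 sells transit to 64497
    (64501, 64496): "customer",
}


def find_leak(as_path, rel):
    """Return the ASN that breaks the valley-free rule, or None if none does."""
    hops = [int(asn) for asn in as_path.split()][::-1]  # origin first
    descending = False  # True once the route went to a peer or a customer
    for sender, receiver in zip(hops, hops[1:]):
        if sender == receiver:  # skip AS-PATH prepending
            continue
        kind = rel[(sender, receiver)]  # raises KeyError if unknown
        if descending and kind in ("provider", "peer"):
            return sender  # re-exported a route it should have kept downstream
        if kind in ("peer", "customer"):
            descending = True
    return None


print(find_leak("64496 64497 64498 64499 64500", REL))
print(find_leak("64496 64501 64497 64498 64499 64500", REL))
```

In the second path, 64497 learns the route from its provider and then hands it to another provider, so it is the one flagged. In practice the hard part is the relationship table itself, which is why the looking glasses and other public tools remain necessary.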

In this particular incident, the false alarm was harmless. However, it is essential to be aware of the potential for misinterpreting BGP data, as this can lead to unnecessary and costly troubleshooting exercises.

Network operators should educate themselves on how to read BGP data correctly. There are many resources available online and in books, and there are also many experienced network operators who are willing to share their knowledge.


Daryll Swer is an independent network consultant with a deep passion for computer networking. Specializing in IPv6 deployment, he provides expert guidance to ISPs, data centres, and businesses looking to optimize their networks. With ongoing research (AS149794) and practical insights, he empowers professionals to harness the full potential of IPv6 and improve their network performance and scalability.

Adapted from the original at Daryll’s Blog.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.

Dear Daryll,

Thanks for sharing. Considering that BGP originally did not have monitoring capabilities built in, and that the routing table needs to be exported by peering with another BGP daemon, where modifications to BGP attributes such as AS_PATH cannot be prevented, it is understandable that a network engineer new to the Internet community gets confused.

As one of the BMP (BGP Monitoring Protocol) co-authors, and working at a network operator myself, I ask myself why, 7 years after it became an RFC and saw broad vendor adoption, we still live in a world of working around the problem that BGP had no monitoring capability built in, a problem which has been resolved by now. What am I missing here?

Hello Thomas

I intentionally skipped certain details about this incident for public relations reasons, but I will note that the team who raised this case were not “new” or inexperienced engineers; they were folks with decades of experience under their belt. Hence I wrote:

“However, it is essential to be aware of the potential for misinterpreting BGP data, as this can lead to unnecessary and costly troubleshooting exercises.

Network operators should educate themselves on how to read BGP data correctly.”

It may be worth giving this a read for additional context:

https://www.daryllswer.com/the-human-side-of-isps/

Regarding BMP, I think we all know the answer. It’s simply human nature to resist changes and improvements. Everybody wants change, as long as there’s no change. We have classic examples to back this up: IPv6, BCP-38, etc. A lot of network operational issues were resolved decades ago by folks like yourself, but the industry, for some reason, refuses to adopt those solutions. It continues with whatever it has always done in the decades prior, and so the outages, issues, and wasted time continue too.

Engineering or technical solutions unfortunately cannot fix human nature, I’m afraid. Layer 8+ problems require layer 8+ expertise to combat.