In the first part of this series, we laid the foundation of network design for the edge. In this second instalment, we look at several high-level edge connection examples, from a single connection to multiple connections, including guidance on BGP.

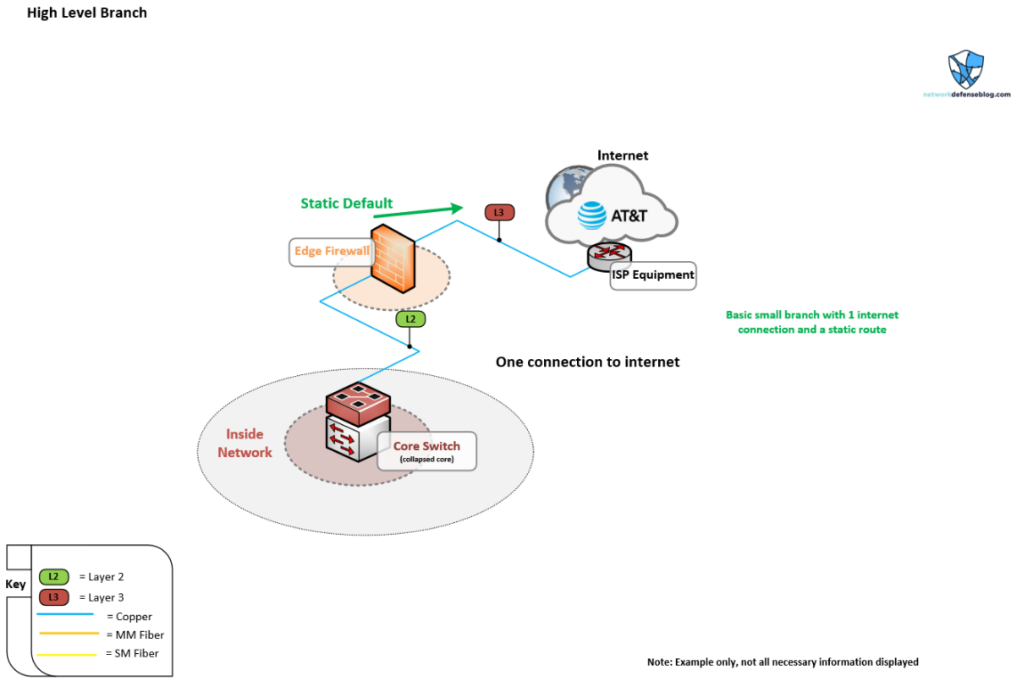

High-level topology 1 — single-homed

Figure 1 shows the bare bones of a basic single-homed setup, most likely a branch office or a very small business office with only one connection. There is a switch behind the firewall, and the firewall reaches the Internet using static routing, with the provider's copper handoff going directly into the edge firewall. The provider would probably give you either a DHCP-assigned address or a small static IP block to connect to the Internet. There is no redundant hardware and no redundant connection here. However, the design is simple and it meets our basic requirement of secure connectivity to the Internet.

High-level topology 2 — dual-homed

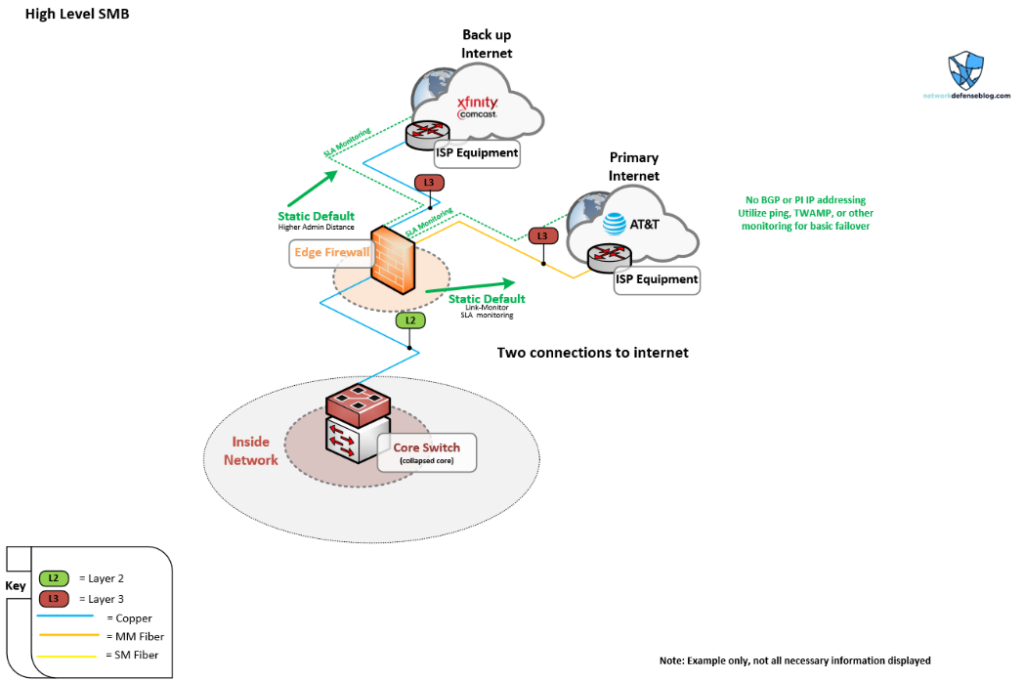

Now, let’s say a company has the same setup as above, but the business has grown a bit and a bad Internet outage has caused extensive downtime; consequently, management wants better Internet redundancy.

You might see a setup like this one, which I suspect is how a lot of SMB networks are built. It is also the type of edge network that people most often ask about when it comes to IP SLA or ping monitoring.

Note that the primary Internet connection to AT&T is now fibre, and we’ve added a backup connection from Comcast. When ordering your primary connection, consider both the physical medium and the uptime agreement. Fibre networks are generally newer, run on better equipment, and are preferred over coax broadband or fixed wireless networks. Fibre circuits such as Dedicated Internet Access (DIA) usually come with better Service Level Agreements (SLAs) and guaranteed dedicated bandwidth, but they are usually more costly.

This network would probably have two blocks of provider-assigned IP addresses and simply perform Network Address Translation (NAT) on each connection, translating traffic to the block of whichever provider it exits through. Because each provider routes its own block back to you, return traffic arrives on the same connection it left from. If IPv6 were in the mix, you’d probably want provider-independent space, but we won’t dive into that.
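The per-connection NAT described above can be sketched as follows. This is a minimal illustration, not any vendor's NAT engine: the internal range `10.0.0.0/8`, the provider keys `att` and `comcast`, and the NAT addresses (taken from the RFC 5737 documentation ranges) are all hypothetical stand-ins for each provider's assigned block.

```python
import ipaddress

# Hypothetical internal range and per-provider NAT addresses (RFC 5737 documentation space).
INTERNAL = ipaddress.ip_network("10.0.0.0/8")
NAT_ADDRESS = {
    "att": ipaddress.ip_address("192.0.2.10"),        # from the primary provider's block
    "comcast": ipaddress.ip_address("198.51.100.10"),  # from the backup provider's block
}


def translate_source(src: str, egress: str):
    """Source-NAT an internal host to the address block of the connection it exits on."""
    src_ip = ipaddress.ip_address(src)
    if src_ip not in INTERNAL:
        raise ValueError(f"{src} is not an internal address")
    # The translated address belongs to the egress provider, so that provider
    # routes the return traffic back over the same connection.
    return NAT_ADDRESS[egress]


print(translate_source("10.1.20.5", "att"))      # 192.0.2.10
print(translate_source("10.1.20.5", "comcast"))  # 198.51.100.10
```

The key design point is that the egress decision comes first and the NAT address follows from it; pairing the wrong block with a connection would send replies back through the other provider.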

In my opinion, the ping method of monitoring a connection for failover should be avoided when possible, despite often being recommended and listed in guides as the go-to approach. However, as you can see, there are limited options here.

I often wonder how many outages and failovers there would be if 8.8.8.8 went offline, since there are probably hundreds of thousands of devices depending on the uptime of that Google DNS IP address to measure connectivity.

As you can imagine, using Internet endpoints like DNS servers to gauge the health of your connection works, but that doesn’t make it the best way. For example, what if those servers go down for unexpected maintenance? Or your probes get throttled because the timer is set too aggressively? Either way, you’ll get a false-positive failover (and it will happen).

It is better to verify connectivity using endpoints you own, such as the edge network devices at HQ, in a data centre (DC) or at other locations, or your cloud load balancer IPs. It is also worth asking your provider, as some offer destinations to ping or measure against.
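To reduce the false-positive failovers described above, a monitor can probe several endpoints you own and require agreement over several rounds before acting. The sketch below assumes hypothetical target names (`hq-edge`, `dc-edge`, `cloud-lb`) and arbitrary thresholds; the actual sending of probes is left out, and this is not how any particular vendor's IP SLA feature works internally.

```python
from collections import deque


class PathMonitor:
    """Hysteresis for probe-based failover (a sketch, not any vendor's IP SLA).

    A round of probes counts as healthy when at least `min_reachable` targets
    answer; the path changes state only after `rounds` consecutive rounds agree.
    """

    def __init__(self, targets, min_reachable=2, rounds=3):
        self.targets = list(targets)
        self.min_reachable = min_reachable
        self.history = deque(maxlen=rounds)
        self.up = True

    def record_round(self, results):
        """`results` maps target name -> True if it answered this round."""
        reachable = sum(1 for t in self.targets if results.get(t, False))
        self.history.append(reachable >= self.min_reachable)
        full = len(self.history) == self.history.maxlen
        if self.up and full and not any(self.history):
            self.up = False  # consistently failing: fail over
        elif not self.up and full and all(self.history):
            self.up = True   # consistently healthy again: fail back
        return self.up


mon = PathMonitor(["hq-edge", "dc-edge", "cloud-lb"])
# One unreachable target (say, a server under maintenance) does not trigger a failover.
print(mon.record_round({"hq-edge": False, "dc-edge": True, "cloud-lb": True}))  # True
```

Requiring a quorum of targets addresses the "one endpoint went away" problem, while the consecutive-round requirement addresses transient loss and flapping.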

Moreover, you don’t want to ping only the gateway IP you are assigned, because if it is directly connected to your edge, you won’t be able to detect an upstream failure. This is why Border Gateway Protocol (BGP) is so useful at the network edge: if there is an upstream failure, such as a circuit going down, the BGP session drops and the routes advertised and received over that connection are withdrawn, so your network diverts to the backup circuit. You will still want to monitor the interface state of your WAN if your device supports it.

When using ping measurement, you’ll have to get crafty about how you steer internal traffic. You’d probably use object tracking to withdraw the primary static default route so that the secondary one takes over, although some devices build these route updates into their IP monitoring.
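The object-tracking approach above is usually paired with a floating static route: the backup default has a higher administrative distance, so it is only installed once the tracked primary is withdrawn. The following is a simplified model of that selection, assuming hypothetical next hops from the documentation ranges and example distances of 1 and 250; it does not reproduce any vendor's routing table logic.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class StaticRoute:
    prefix: str
    next_hop: str
    distance: int            # administrative distance: lower is preferred
    tracked_up: bool = True  # state of the tracked object; untracked routes stay True


def installed_default(routes: List[StaticRoute]) -> Optional[StaticRoute]:
    """Lowest-distance default route whose tracked object is up, or None."""
    live = [r for r in routes if r.prefix == "0.0.0.0/0" and r.tracked_up]
    return min(live, key=lambda r: r.distance, default=None)


primary = StaticRoute("0.0.0.0/0", "192.0.2.1", distance=1)       # tracked by the ping monitor
backup = StaticRoute("0.0.0.0/0", "198.51.100.1", distance=250)  # floating static, untracked
routes = [primary, backup]

print(installed_default(routes).next_hop)  # 192.0.2.1
primary.tracked_up = False                 # the monitor declares the primary path down
print(installed_default(routes).next_hop)  # 198.51.100.1
```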

Consider your NAT rules as well; they are easier to manage if both connections terminate on a single firewall or high availability (HA) pair. Keep in mind that if you don’t have demilitarized zone (DMZ) switches and instead connect each circuit to a different member of an HA firewall pair, you’d have to perform an HA failover to use the secondary connection.

This gets complicated, which is also why, when dual-homed, you can usually run the ping method on only a single device. Placing two border routers in front of your firewalls with IP SLA monitoring and object tracking for default routing is a sub-optimal design.

This section covered a ‘dual-homed’ topology, where two connections on a single endpoint provide connection redundancy. Next, we will examine the next tier: a single connection on each of two endpoints.

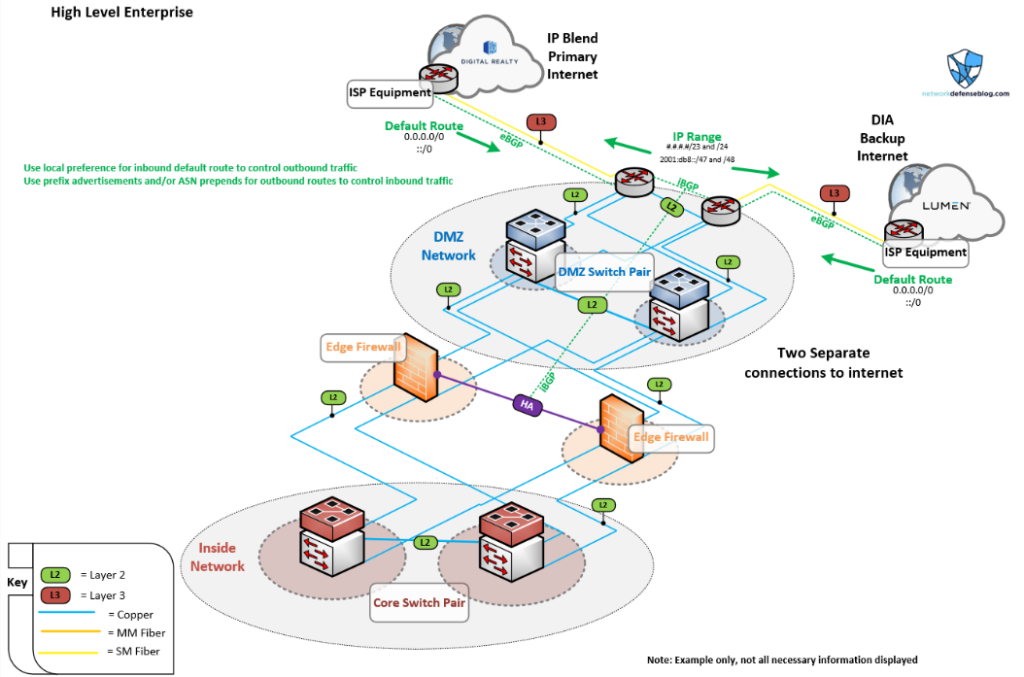

High-level topology 3 — dual single-homed

Now we’re talking, right? We’ve got an actual DMZ, edge routers, and BGP! There are two edge routers, each with a connection to a different provider. Perhaps this is an enterprise whose edge sits inside a DC. The DC provider’s blended IP service, Digital Realty IP, is used for the primary connection, and a large provider, Lumen, offers the backup connection. This design provides full physical and logical redundancy.

We are using external BGP (eBGP) to peer with the providers and announce our subnets. Realistically, we would have multiple blocks within something like a /47 IPv6 block and a /23 IPv4 block, which lets us choose what to advertise on each connection to control the traffic. We then configure internal BGP (iBGP) to handle communication and routing between the firewalls and the border routers. This is the preferred method of communication at the network edge.
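One common way to use a /47 or /23 to steer inbound traffic is to split it into two halves, advertise the halves plus the aggregate to the primary provider, and advertise only the aggregate to the backup. Longest-prefix matching then pulls inbound traffic to the primary, while the aggregate keeps the backup usable if the primary’s routes are withdrawn. This sketch assumes that scheme (it is one option, not necessarily the author’s exact policy) and uses the `2001:db8::/47` documentation prefix as a stand-in; the /24 and /48 limits reflect widely used filtering conventions rather than a hard rule.

```python
import ipaddress

# Longest prefixes most networks accept from peers (a widely used filtering convention).
MAX_PREFIXLEN = {4: 24, 6: 48}


def plan_advertisements(block: str) -> dict:
    """Split an allocation in two and decide what each provider hears.

    Primary: aggregate plus both more-specifics, so inbound traffic prefers it.
    Backup: aggregate only, which still carries traffic if the primary withdraws.
    """
    net = ipaddress.ip_network(block)
    halves = list(net.subnets(prefixlen_diff=1))
    if halves[0].prefixlen > MAX_PREFIXLEN[net.version]:
        raise ValueError(f"{block} is too small to split and still be accepted")
    return {"primary": [net, *halves], "backup": [net]}


plan = plan_advertisements("2001:db8::/47")  # documentation prefix standing in for our /47
for peer, prefixes in plan.items():
    print(peer, [str(p) for p in prefixes])
# primary ['2001:db8::/47', '2001:db8::/48', '2001:db8:1::/48']
# backup ['2001:db8::/47']
```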

Ping monitoring gives us far less flexibility than BGP. In the diagram, you’ll notice we use the BGP local preference attribute to mark inbound routes, which gives outbound traffic a way to choose its exit path. We also decide, via route filters, which IP prefixes are advertised to which peer, to influence the inbound traffic flowing back from the Internet.
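The effect of marking received routes with local preference can be shown with a deliberately simplified best-path function. This sketch assumes peer labels `digital-realty` and `lumen` for the two providers in the figure, AS numbers from the documentation range, and a default local preference of 100; it compares only local preference and AS path length, whereas real BGP implementations apply further steps.

```python
from dataclasses import dataclass, replace
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class BgpRoute:
    prefix: str
    peer: str
    as_path: Tuple[int, ...]
    local_pref: int = 100  # common default local preference


def apply_inbound_policy(routes: List[BgpRoute], prefs: Dict[str, int]) -> List[BgpRoute]:
    """Mark routes as they are received by setting local preference per peer."""
    return [replace(r, local_pref=prefs.get(r.peer, r.local_pref)) for r in routes]


def best_path(routes: List[BgpRoute]) -> BgpRoute:
    """Simplified selection: highest local preference, then shortest AS path.
    Real BGP adds more steps (e.g. vendor weight, origin, MED, eBGP over iBGP)."""
    return max(routes, key=lambda r: (r.local_pref, -len(r.as_path)))


received = [
    BgpRoute("0.0.0.0/0", "digital-realty", as_path=(64496, 64497)),
    BgpRoute("0.0.0.0/0", "lumen", as_path=(64500,)),
]
print(best_path(received).peer)  # lumen: shorter AS path wins when local preference ties
marked = apply_inbound_policy(received, {"digital-realty": 200, "lumen": 100})
print(best_path(marked).peer)    # digital-realty: local preference is compared first
```

Because local preference is evaluated before AS path length, the primary provider wins for outbound traffic even when the backup offers a shorter path.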

It’s important to control inbound and outbound routing with BGP attributes and filtering, because otherwise you can run into asymmetric routing, with traffic leaving on one connection and returning on the other. This can be a problem for stateful firewalls when dual-homed. That is why I like the two-router design: the routers control the perimeter, and the firewalls sit behind them with an outside interface zone, which eliminates asymmetric routing issues from the firewall’s perspective.
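Why asymmetry hurts a stateful firewall can be seen in a toy session table that only admits a reply if it arrives on the interface the original flow left from. This is a simplified model (interface names `outside-a` and `outside-b` are hypothetical and NAT is left out for brevity); real firewalls track far more state and some can be configured to tolerate asymmetry.

```python
from typing import Dict, Tuple

Flow = Tuple[str, int, str, int]  # (src_ip, src_port, dst_ip, dst_port)


class StatefulFirewall:
    """Toy session table: a reply must arrive on the interface its flow left from."""

    def __init__(self) -> None:
        self.sessions: Dict[Flow, str] = {}

    def outbound(self, flow: Flow, iface: str) -> None:
        self.sessions[flow] = iface

    def allow_inbound(self, flow: Flow, iface: str) -> bool:
        src, sport, dst, dport = flow
        original = (dst, dport, src, sport)  # the reply reverses the original flow
        return self.sessions.get(original) == iface


fw = StatefulFirewall()
fw.outbound(("10.1.20.5", 51000, "203.0.113.80", 443), iface="outside-a")
reply = ("203.0.113.80", 443, "10.1.20.5", 51000)
print(fw.allow_inbound(reply, iface="outside-a"))  # True: symmetric path
print(fw.allow_inbound(reply, iface="outside-b"))  # False: returned via the other connection
```

With border routers in front, the firewall sees the same outside interface whichever provider carried the reply, so the lookup succeeds.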

Now we come to another challenge we listed earlier: NAT. If you are running IPv6 only, you can skip this, but in this case NAT should be performed on the firewalls, as they usually have more horsepower for it and offer more flexibility than running NAT on your border routers.

Next, consider another challenge: budget. What if you’re not running any outside services or servers, and only need a few ports to connect your firewalls, ISP handoffs, and routers? That probably doesn’t justify a whole other pair of high-end switches for the DMZ. Instead, you could use existing switches with a separate, isolated VLAN for the outside connections, dedicating only those few ports to it.

Additionally, I like having border routers because they usually have better buffers and more options for QoS and traffic shaping, and the edge is a good place to apply them. They also usually support all the routing options you need, whereas a firewall may be more limited on the BGP side. If budget is a problem, you could do without the routers, terminate the circuits on the DMZ switches, and carry the isolated VLAN to the firewalls (or maybe you are running Arista switches at the edge?). You decide.

It’s important, though, to have a clear delineation between internal and external networks, so sometimes that added security (perhaps for compliance) alone justifies DMZ switches and/or routers.

High-level topology 4 — dual multi-homed

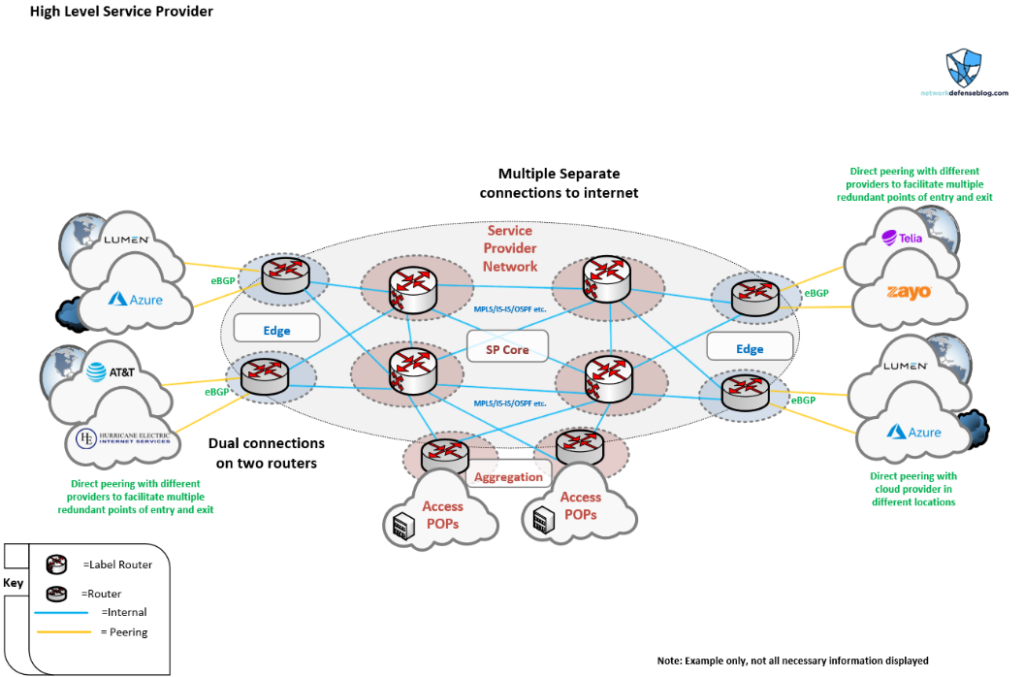

For the final high-level topology where we are looking at dual connections on dual routers (dual multi-homed), we will examine a service provider-type network.

Dissecting this topology, we can see how a service provider (SP) network might be divided into sections such as core, aggregation and, of course, the edge. The edge plays a key role for SPs, as larger SPs make money by selling transit (upstream connectivity) to smaller SPs and by connecting their business and residential customers to the outside world.

SPs also need more redundancy because of the number of users and the amount of bandwidth they carry. When you serve many enterprises, potentially millions of dollars’ worth of traffic travels over your circuits, so redundancy across multiple edge connections is important.

In dual multi-homed designs, active/passive connectivity often gives way to active/active: multiple connections are used at once, depending on where the traffic is coming from. You could still run active/passive at each boundary; it really depends on the network and whether paying monthly costs for unused circuits is worth it.

There will often be many paths in and out, which gives traffic several routing options. Hot-potato and cold-potato routing often determine how traffic is handled. It’s imperative for the operator of a dual multi-homed network to understand the existing routing paths and how inbound and outbound traffic flows will be affected when adding new circuits or handling failure scenarios. The behaviour should be deterministic and predictable, which can be achieved with proper BGP configuration (and by following MANRS, the Mutually Agreed Norms for Routing Security).

For example, if a circuit or line card fails in an edge POP in a certain metro, will that affect all traffic in that region, or will the traffic flows migrate to a different path out to the Internet? It’s worth gauging failure domains at the edge. Of course, there will always be some single-homed customers, or areas where customers have no protection.
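The hot- and cold-potato behaviours mentioned above, and the failure-scenario question, can be illustrated with a small exit-selection sketch. It assumes two hypothetical POPs (`pop-west`, `pop-east`) that both connect to the same neighbouring network, made-up IGP costs and MED values, and treats honouring the neighbour’s multi-exit discriminator (MED) as one way to get cold-potato behaviour; real networks combine many more policy knobs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Exit:
    pop: str
    igp_cost: int  # cost across our own network from the ingress router to this exit
    med: int       # MED from the neighbour: lower means "hand the traffic to me here"
    up: bool = True


def hot_potato(exits: List[Exit]) -> Optional[Exit]:
    """Hand traffic off as soon as possible: the nearest live exit by IGP cost."""
    live = [e for e in exits if e.up]
    return min(live, key=lambda e: e.igp_cost, default=None)


def cold_potato(exits: List[Exit]) -> Optional[Exit]:
    """Carry traffic on our own backbone to the exit the neighbour prefers (lowest MED)."""
    live = [e for e in exits if e.up]
    return min(live, key=lambda e: (e.med, e.igp_cost), default=None)


exits = [Exit("pop-west", igp_cost=10, med=50), Exit("pop-east", igp_cost=40, med=0)]
print(hot_potato(exits).pop)   # pop-west
print(cold_potato(exits).pop)  # pop-east
# Failure scenario: the east POP's circuit goes down, so traffic migrates to the west.
exits[1] = Exit("pop-east", igp_cost=40, med=0, up=False)
print(cold_potato(exits).pop)  # pop-west
```

Running each policy against the "what if this POP fails?" case is a cheap way to check that the resulting behaviour is the deterministic one you expect.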

Looking at Figure 4, the Azure and Lumen connections shown on each side of the diagram could be in completely different geographic regions. It is good for the provider to have multiple paths, not only for redundancy but also for performance. Sometimes it pays to peer directly with cloud providers or other SPs to get better latency and performance to their networks, rather than transiting other carriers, which can be more expensive. Well-constructed edges can therefore also increase capacity and performance.

Looking back at the edge network challenges we listed, SPs and enterprises face many of the same problems, but often in different ways. SPs don’t always have to worry about NAT or asymmetric routing, since they accept hot-potato routing behaviour and the customer equipment performs NAT. However, like enterprises, SPs must delineate between different parts of their network for better control. Either way, services are increasingly being brought closer to the network border, so high-performance edge POPs are becoming more important for service providers.

In the next part of this series, we will look at low-level network edge design, focusing on an enterprise-oriented dual single-homed setup, covering multiple scenarios.

Brandon Hitzel is a network engineer who has worked in multiple industries for a number of years. He holds multiple networking and security certifications and enjoys writing about networking, cyber defence, and other related topics on his blog.

This post is adapted from the original at Network Defense Blog.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.