As Internet access becomes increasingly recognized as a universal human right, it becomes increasingly important to ensure that users have reliable and effective access. However, it is surprisingly hard today for Internet users to voice their complaints in a well-defined and structured way when this right is not adequately satisfied. This limitation has led to the conventional approach of studying Internet outages remotely by analysing network signals originating from the areas of interest.

My fellow researchers and I from ETH Zürich’s Networked Systems Group argue that this approach is practical yet incomplete — network signals do not always reflect the end host’s view of the Internet. In response, we propose a complementary approach to studying Internet outages from users’ perspectives.

Traditionally, studies on Internet outages have primarily relied on two types of network signals — data traffic and control plane updates. In the first case, the remote vantage point can passively capture traffic from the area of interest or actively engage in probing to determine the connectivity of the target network and endpoints. In the second case, the vantage point can examine control plane messages originating from the target area to determine their liveness.

These techniques are helpful, but they are not always sufficient. For example, a functional mobile network may not respond to active probes by design, while an area that responds to probes may not be able to access a censored website or one that is down. Similarly, a target network may generate healthy control plane messages while having a broken data path preventing users from accessing the Internet.

Given these limitations, it is worth asking whether we can complement our unilateral view with a perspective from the target network to the Internet. In other words, can we somehow obtain the end hosts’ point of view rather than the reflections of their activity? Our study answers this question in the affirmative and presents a systematic and quantifiable methodology for studying Internet outages from the users’ perspective.

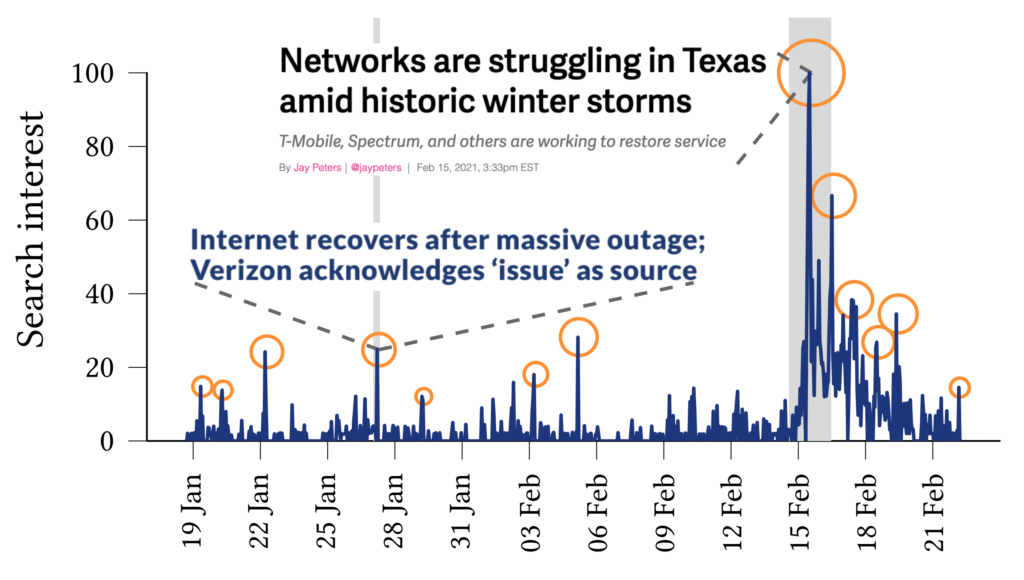

We do this using passive crowdsourcing, analysing the target geographical region’s aggregated web search activity for a specified time. More precisely, our open-source tool, SIFT, first collects partial views of aggregated search statistics from Google Trends, the aggregation service for Google search data. Then, SIFT constructs continuous timelines of user interest (or popularity index) around Internet-outage-related search activity. SIFT achieves this by tracking the <Internet outage> topic, an abstraction provided by the Google Trends service similar to a hashtag, for clustering semantically connected search queries. Finally, SIFT analyses the constructed timeline as a directional indicator for user interest around Internet outages and detects the sudden spikes in search volume to pinpoint the outage events.
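The timeline-construction step can be illustrated with a small sketch. Google Trends normalizes each requested time window independently to a 0 to 100 scale, so partial views have to be brought onto a common scale before they can be joined. The sketch below assumes consecutive windows share a few overlapping samples and rescales each new window to match the previous one over that overlap. The function name `stitch`, the `overlap` parameter, and the sample values are hypothetical, and this is not necessarily how SIFT itself performs the stitching.

```python
def stitch(windows, overlap):
    """Join independently normalized windows into one timeline.

    Assumes the last `overlap` samples of each window cover the same
    period as the first `overlap` samples of the next window.
    """
    timeline = list(windows[0])
    for window in windows[1:]:
        prev_tail = timeline[-overlap:]
        next_head = window[:overlap]
        head_sum = sum(next_head)
        # Scale the new window so that its overlapping part matches the
        # already stitched timeline; fall back to 1.0 if the overlap is all zeros.
        scale = sum(prev_tail) / head_sum if head_sum else 1.0
        timeline.extend(value * scale for value in window[overlap:])
    return timeline


# Two hypothetical partial views sharing two samples.
views = [[10, 20, 40, 50], [80, 100, 60, 20]]
print(stitch(views, overlap=2))
```

Matching on the sum of the overlap is only one option; a ratio of medians or a least-squares fit would be more robust to noisy samples.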

Figure 1 demonstrates an example of these popularity timelines that SIFT constructs for Texas, US, for the winter of 2021. In this example, the orange circles represent news-verified spikes SIFT detects as outages. Besides detection, SIFT quantifies and analyses Internet outages over three dimensions — impact, area, and context.
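To give a feel for what detecting a spike in such a timeline can look like, here is a minimal sketch. It flags a sample as a spike when it is a local peak that rises well above the median of the preceding samples. The rule, the function name `detect_spikes`, the `window` and `min_rise` parameters, and the sample data are all assumptions made for illustration; SIFT's actual detection logic is described in the paper and may differ.

```python
from statistics import median


def detect_spikes(timeline, window=6, min_rise=30):
    """Return indices of local peaks that stand out from the recent baseline.

    A sample counts as a spike if it is higher than its predecessor, at
    least as high as its successor, and at least `min_rise` above the
    median of the `window` samples before it.
    """
    spikes = []
    for i in range(window, len(timeline) - 1):
        baseline = median(timeline[i - window:i])
        is_peak = timeline[i] > timeline[i - 1] and timeline[i] >= timeline[i + 1]
        if is_peak and timeline[i] - baseline >= min_rise:
            spikes.append(i)
    return spikes


# Hypothetical hourly popularity index with two bursts of interest.
hourly_index = [5, 6, 4, 5, 7, 6, 5, 40, 100, 60, 20, 8, 6, 5, 6, 7, 55, 30, 9, 6]
print(detect_spikes(hourly_index))  # [8, 16]
```

Using a median baseline keeps an earlier spike from inflating the reference level for the next one.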

First, SIFT interprets the duration of user interest as the impact of the outage. For example, in Figure 1, the two dark grey boxes representing the spike widths in two distinct outages illustrate how long users kept searching for these events. This duration is a potential indicator of the severity of the outage. In our study covering the US in 2020 and 2021, around 10% of the 49,000 outages we detected sustained search interest from users for more than three hours.
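One simple way to turn a spike width into a number is to count how many consecutive samples around the peak stay above some fraction of the peak height. The sketch below does this at half the peak height on hourly samples. The half-height rule, the function name `spike_duration`, and the data are assumptions for illustration rather than SIFT's definition of duration.

```python
def spike_duration(timeline, peak, rel_height=0.5):
    """Count contiguous samples around `peak` at or above a fraction of its height."""
    threshold = timeline[peak] * rel_height
    start = peak
    while start > 0 and timeline[start - 1] >= threshold:
        start -= 1
    end = peak
    while end < len(timeline) - 1 and timeline[end + 1] >= threshold:
        end += 1
    return end - start + 1


# Hypothetical hourly samples: a long-lived spike and a short one.
long_spike = [4, 5, 30, 80, 100, 90, 70, 55, 20, 6]
short_spike = [5, 6, 100, 10, 5]
print(spike_duration(long_spike, peak=4))   # 5 samples, i.e. about five hours
print(spike_duration(short_spike, peak=2))  # 1 sample
```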

Second, SIFT reveals the area of outages by correlating the spikes occurring over distinct geographical regions. We find that 11% of all the outages simultaneously affect at least ten distinct states. Moreover, half of the outages originate from just ten US states. These high-level figures already suggest that the location and occurrence of outages are considerably skewed, since the popularity index is normalized within the selected region over all search queries (that is, it is not tied to the region’s absolute search volume).
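Correlating spikes across regions can be sketched as grouping spikes that occur at roughly the same time and counting the distinct states involved. The example below assumes spikes are given as hour offsets per state and treats spikes within `tolerance` hours of each other as simultaneous. The function names, the tolerance, and the sample data are hypothetical and only illustrate the idea.

```python
from collections import defaultdict


def co_occurring_states(spikes_by_state, tolerance=1):
    """Map each spike hour to the set of states that spike within `tolerance` hours of it."""
    groups = defaultdict(set)
    all_hours = {hour for hours in spikes_by_state.values() for hour in hours}
    for hour in all_hours:
        for state, hours in spikes_by_state.items():
            if any(abs(hour - other) <= tolerance for other in hours):
                groups[hour].add(state)
    return dict(groups)


def widespread_hours(spikes_by_state, min_states=3, tolerance=1):
    """Return the spike hours at which at least `min_states` states spike together."""
    groups = co_occurring_states(spikes_by_state, tolerance)
    return sorted(hour for hour, states in groups.items() if len(states) >= min_states)


# Hypothetical spike hours per state.
spikes = {"TX": [10, 50], "OK": [11], "LA": [10], "CA": [80]}
print(widespread_hours(spikes))  # [10, 11]
```

Here the spikes in Texas, Oklahoma, and Louisiana around hours 10 and 11 are treated as one multi-state event, while the later spikes in Texas and California remain local.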

Third, SIFT contextualizes Internet outages by annotating detected spikes with keywords that trend simultaneously in the same region. The trending keywords can range from ISP names such as Verizon to natural disasters such as thunderstorms or wildfires. Indeed, our analysis revealed that for most long-lasting outages, the spike trended simultaneously with the <Power outage> topic and natural disasters, suggesting physical failures as the underlying cause. This observation shows another complementary feature of the user-based approach: users search about the physical world alongside Internet outages, and this information is hard to obtain from network signals alone.
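As a rough illustration of this kind of attribution, the sketch below counts how often each keyword trends within an hour of a detected outage spike and ranks the keywords by that count. The function name `context_keywords`, the one-hour tolerance, and the sample keywords and hours are made up for the example; they do not come from the SIFT dataset.

```python
from collections import Counter


def context_keywords(outage_hours, trending_hours_by_keyword, tolerance=1):
    """Rank keywords by how many outage spikes they trend alongside."""
    counts = Counter()
    for hour in outage_hours:
        for keyword, hours in trending_hours_by_keyword.items():
            if any(abs(hour - other) <= tolerance for other in hours):
                counts[keyword] += 1
    return counts.most_common()


# Hypothetical outage spike hours and keyword trending hours for one region.
outages = [10, 50, 80]
trending = {
    "power outage": [10, 51],
    "thunderstorm": [9],
    "verizon": [80],
    "football": [30],
}
print(context_keywords(outages, trending))
```

Keywords that never trend near an outage, such as "football" here, simply do not appear in the ranking.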

Although our preliminary work targets Google Trends, the idea can potentially extend into a full-fledged platform. We hope to mine information sources such as other search engine statistics services, Twitter, Telegram, and Reddit. By doing so, the approach has the potential to collect direct and indirect human signals related to poor Internet access, Internet outages, or forms of censorship in a structured, objective, transparent, and quantifiable way. Consequently, the approach can complement or validate the conventional methods and carry the field forward with new insights. Eventually, efforts in this direction can help improve the remote detection of underprivileged communities, censored regions, and access providers in violation of availability agreements.

For more information, read our paper ‘“Is my Internet down?”: Sifting through User-Affecting Outages with Google Trends’, presented at IMC 2022.

Ege Cem Kirci is a PhD student at ETH Zürich’s Networked Systems Group (NSG) with an interest in Internet measurement, programmable networks, and future Internet architectures.

Martin Vahlensieck and Laurent Vanbever contributed to this work.
