In our previous post, we discussed changes to the Registration Data Access Protocol (RDAP) architecture that let it scale to multiple cloud deployments and improve round-trip times (RTT) by dynamically steering traffic to the Google Cloud Platform (GCP) Kubernetes cluster closest to the request.

Today, we’d like to explain how we implemented these changes, the architectural methodology we used, and our plans for future improvements.

GitOps

Creating, managing and deploying RDAP across multiple production clusters presented a new challenge of scale. Our tooling already included Terraform for infrastructure-as-code and Helm charts to template the RDAP Kubernetes components.

Both Terraform and Helm charts are declarative configurations: they define ‘what’ we want, not ‘how’ it should happen, as a traditional imperative script would.

Given this shared declarative nature, and our desire for clear versioning of changes to the desired state of the infrastructure and environments, we decided to adopt a GitOps approach for both.

GitOps is a term coined by Weaveworks in 2017 to describe “operations by git pull request”. It has since grown into an open Cloud Native Computing Foundation (CNCF) project, with a dedicated working group promoting its use in the operation and management of cloud native applications and infrastructure.

The five GitOps principles are:

- Declarative configuration: All resources managed through a GitOps process must be completely expressed declaratively.

- Version controlled, immutable storage: Declarative descriptions are stored in a repository that supports immutability, versioning and version history, such as git.

- Automated delivery: Delivery of the declarative descriptions, from the repository to runtime environment, is fully automated.

- Software agents: Reconcilers maintain system state and apply the resources described in the declarative configuration.

- Closed loop: Actions are taken whenever the version controlled declarative configuration and the actual state of the target system diverge.
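The last two principles, software agents and the closed loop, are easiest to see in code. Below is a minimal Python sketch of a single reconciliation pass, assuming desired state (from git) and actual state (from the cluster) are both plain dictionaries mapping a resource name to its spec. The function names `reconcile` and `apply_actions` and the action tuples are hypothetical illustrations, not taken from FluxCD or any other real operator.

```python
from typing import Dict, List, Tuple

Spec = Dict[str, object]
Action = Tuple[str, str]  # (verb, resource name)


def reconcile(desired: Dict[str, Spec], actual: Dict[str, Spec]) -> List[Action]:
    """Compare desired state (git) with actual state (cluster) and return
    the actions needed to close the gap. Hypothetical sketch only."""
    actions: List[Action] = []
    # Create or update anything declared in git that is missing or has drifted.
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != desired[name]:
            actions.append(("update", name))
    # Delete anything running that is no longer declared in git.
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


def apply_actions(actions: List[Action], desired: Dict[str, Spec],
                  actual: Dict[str, Spec]) -> None:
    """Mutate the in-memory 'cluster' so that it matches the desired state."""
    for verb, name in actions:
        if verb == "delete":
            del actual[name]
        else:
            actual[name] = dict(desired[name])


if __name__ == "__main__":
    desired = {"rdap": {"replicas": 3}, "nginx-ingress": {"replicas": 2}}
    actual = {"rdap": {"replicas": 1}, "old-job": {"replicas": 1}}
    actions = reconcile(desired, actual)
    print(actions)
    # prints [('create', 'nginx-ingress'), ('update', 'rdap'), ('delete', 'old-job')]
    apply_actions(actions, desired, actual)
    assert reconcile(desired, actual) == []  # converged: nothing left to do
```

A real agent would run this pass repeatedly, so that any drift introduced outside git is detected and corrected on the next iteration.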

Terraform

As mentioned previously, our cloud platform is defined as infrastructure-as-code using Terraform. Over the past few months, we have extended this to use (and contribute back to) the Terraform Google Modules project, which aims to provide best-practice reference architectures for GCP resources and GKE clusters.

Using the open source project Atlantis, we’ve implemented GitOps pull request automation at APNIC: Atlantis automatically ‘terraform plans’ any change to the declarative infrastructure and posts the plan as a comment on the pull request for other team members to review.

Once the pull request is approved, the changes can be ‘terraform applied’ directly when the git branch is merged, without anyone needing a local workstation set up (or permissioned) to alter the cloud infrastructure.
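The review gate described above behaves like a small state machine. This Python sketch models only that rule: every pushed commit gets an automatic plan posted as a comment, and an apply happens only when an approved pull request is merged. The `PullRequest` class, its methods and the comment text are hypothetical stand-ins, not Atlantis’s API, and resetting approval on each new commit is an assumption of this sketch.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PullRequest:
    """Hypothetical model of an infrastructure pull request under review."""
    commits: List[str] = field(default_factory=list)
    comments: List[str] = field(default_factory=list)
    approved: bool = False
    applied_commit: str = ""

    def push(self, commit: str) -> None:
        # Each new commit triggers an automatic plan, posted as a comment.
        self.commits.append(commit)
        self.comments.append(f"plan for {commit}")
        # Assumption of this sketch: new commits need a fresh review.
        self.approved = False

    def approve(self) -> None:
        self.approved = True

    def merge(self) -> bool:
        # Only reviewed changes are applied; no local workstation is involved.
        if not self.approved or not self.commits:
            return False
        self.applied_commit = self.commits[-1]
        self.comments.append(f"applied {self.applied_commit}")
        return True


if __name__ == "__main__":
    pr = PullRequest()
    pr.push("a1b2c3")
    print(pr.merge())  # False: not yet approved
    pr.approve()
    print(pr.merge())  # True: plan reviewed, change applied on merge
```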

FluxCD and Helm Operator

The Kubernetes resources that make up the RDAP service are created by applying a Helm chart to the cluster, with environment-specific values overlaid on a template structure. Traditionally, this Helm installation would be carried out as a push deployment from a Continuous Integration/Continuous Deployment (CI/CD) framework.

This requires the CI/CD tooling to have visibility, credentials and permissions to all the production clusters it needs to administer.

Transitioning to a GitOps model offers us a more secure and scalable option: pull-based deployment, where each cluster runs an ‘operator’ that pulls the desired state from the git repository containing the declarative configuration for what should be running on that cluster.
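The key difference from push deployment is where the loop runs. This Python sketch assumes a hypothetical in-memory `GitRepo` standing in for the configuration repository; a `ClusterAgent` running inside the cluster polls it and applies the manifest at HEAD only when the revision changes, so no external CI/CD system needs credentials for the cluster. Both classes are illustrative only and are not FluxCD’s implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Manifest = Dict[str, dict]


@dataclass
class GitRepo:
    """Hypothetical stand-in for the declarative configuration repository."""
    history: List[Tuple[str, Manifest]] = field(default_factory=list)

    def commit(self, revision: str, manifest: Manifest) -> None:
        self.history.append((revision, manifest))

    def head(self) -> Tuple[str, Manifest]:
        return self.history[-1]


@dataclass
class ClusterAgent:
    """Runs inside the cluster and pulls desired state; nothing pushes to it."""
    repo: GitRepo
    last_synced: str = ""
    running: Manifest = field(default_factory=dict)

    def sync_once(self) -> bool:
        """Pull HEAD and apply it if it is a new revision; return True if applied."""
        revision, manifest = self.repo.head()
        if revision == self.last_synced:
            return False  # already at the desired revision
        # Replace the running state with a copy of the declared state.
        self.running = {name: dict(spec) for name, spec in manifest.items()}
        self.last_synced = revision
        return True


if __name__ == "__main__":
    repo = GitRepo()
    repo.commit("r1", {"rdap": {"image": "rdap:1"}})
    agent = ClusterAgent(repo)
    print(agent.sync_once(), agent.last_synced)  # True r1
    print(agent.sync_once())                     # False: nothing new to pull
```

In practice `sync_once` would be called on a timer, and a fuller agent would also correct drift in the running state between revisions.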

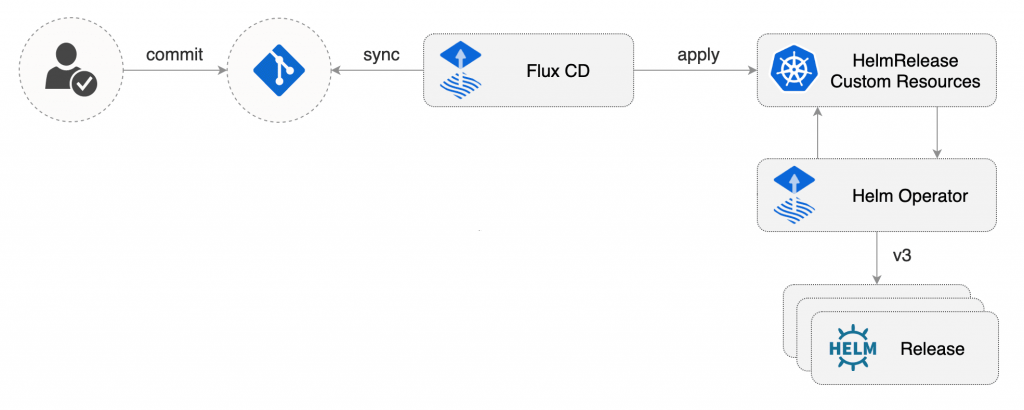

To manage the supporting Helm-controlled applications (cert-manager, external-dns, nginx-ingress, prometheus, vault-agent and velero) and the RDAP service itself through GitOps, we use a combination of FluxCD and the Helm Operator.

Together, these two components provide pull-based synchronization of a git repository of declarative configuration to each cluster, and define a HelmRelease Kubernetes custom resource.

The Helm Operator running in each cluster responds to HelmRelease events by installing, updating or removing Helm releases as required.
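As a rough illustration of that event handling, this Python sketch maps Kubernetes watch event types (`ADDED`, `MODIFIED`, `DELETED`) on a HelmRelease to the corresponding Helm 3 command line. The `helm_action` function, the flattened release dictionary and the chart name `example/rdap` are hypothetical simplifications; the real Helm Operator also handles chart sources, rollbacks and status reporting, which this ignores.

```python
from typing import Dict, List


def helm_action(event_type: str, release: Dict[str, str]) -> List[str]:
    """Translate a HelmRelease watch event into a Helm 3 command line.

    `release` is a hypothetical, flattened view of a HelmRelease with
    'name', 'namespace', 'chart' and 'version' keys.
    """
    name, ns = release["name"], release["namespace"]
    if event_type == "DELETED":
        return ["helm", "uninstall", name, "--namespace", ns]
    if event_type == "ADDED":
        verb = "install"
    elif event_type == "MODIFIED":
        verb = "upgrade"
    else:
        raise ValueError(f"unexpected event type: {event_type}")
    return ["helm", verb, name, release["chart"],
            "--namespace", ns, "--version", release["version"]]


if __name__ == "__main__":
    rdap = {"name": "rdap", "namespace": "rdap",
            "chart": "example/rdap", "version": "1.2.0"}
    print(" ".join(helm_action("MODIFIED", rdap)))
    # prints: helm upgrade rdap example/rdap --namespace rdap --version 1.2.0
```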

GitOps allowed us to rapidly spin up the additional Singapore and Virginia GKE clusters in our GCP architecture, while FluxCD configured the applications running inside each cluster consistently, leaving a clear git diff history of changes over time.
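Keeping per-cluster differences small is what makes this consistency practical. This Python sketch assumes a shared base set of Helm values plus a small per-cluster overlay, merged in the spirit of how Helm combines multiple values files: nested maps are merged key by key and the later file wins on conflicts. The function name, keys, hostnames and overlay contents are hypothetical, and the sketch ignores Helm’s special handling of `null` values.

```python
import copy
from typing import Any, Dict

Values = Dict[str, Any]


def merge_values(base: Values, overlay: Values) -> Values:
    """Recursively merge overlay onto base; overlay wins on conflicts.

    Nested dicts are merged key by key, while scalars and lists are
    replaced outright. Neither input is modified.
    """
    merged = copy.deepcopy(base)
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical shared values and per-cluster overlays.
BASE = {"replicaCount": 2, "ingress": {"enabled": True, "host": "rdap.example.net"}}
CLUSTERS = {
    "singapore": {"ingress": {"host": "sg.rdap.example.net"}},
    "virginia": {"replicaCount": 3},
}

if __name__ == "__main__":
    for cluster, overlay in CLUSTERS.items():
        print(cluster, merge_values(BASE, overlay))
```

Because each overlay only states what differs, a git diff of a cluster’s overlay shows exactly how that cluster departs from the shared baseline.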

What’s next?

Industry adoption of GitOps for cloud platform automation continues to accelerate. The teams behind the two most popular operators, FluxCD and ArgoCD, have joined forces to build a generic GitOps engine core that will underpin both operators, along with a standard GitOps toolkit. These enhancements will expose additional Prometheus metrics and support multi-tenancy, helping GitOps keep pace with projects as they scale.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.