In a world of rapid automation, what role will network engineers have? Are they running the network, or are they running the machines running the network?

Can the networks run themselves someday?

In a deep dive discussion at the APNIC 50 conference, Google’s Global Head of Network Architecture and Engineering, Phillip Grasso, introduced the ways in which Google has responded to its network architecture challenges.

To illustrate this, he borrowed some terminology from developers of self-driving car technology.

“Self-driving is a term we borrowed. Carmakers say ‘we’re building the world’s most experienced driver’. I was being cheeky, and maybe we’re trying to build the world’s most experienced network engineer. That’s the dream; let the network run itself.”

Phillip described three stages in the evolution of these networks.

Networking 1.0

For a basic network, scaling up requires more infrastructure and bigger routers. Arguably, this was a closed system because you had to buy the equipment that was made available to you. There was very little choice and standards did not guarantee interoperability.

Networking 2.0

Networking 2.0 came about with developments like software-defined networking and a greater degree of automation. Networks could scale up and be treated “in bulk” with more fault tolerance. This also involved building fabrics. More data moved into the cloud.

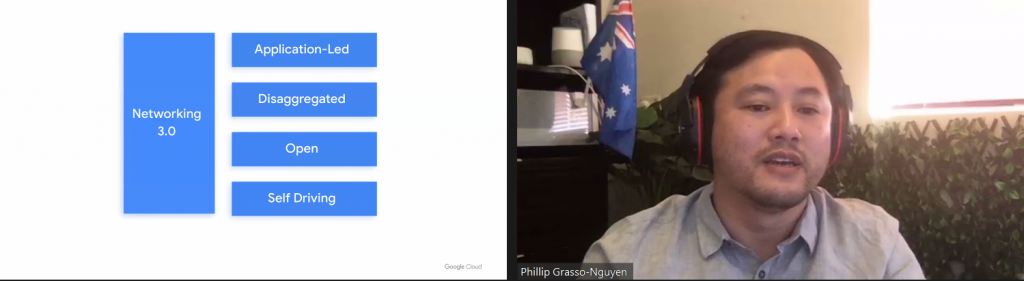

Networking 3.0

In this concept of networking, applications are open and disaggregated. These applications now interact with the network more intelligently. By making all the data associated with the network available to higher-layer security, it is also possible to do better containment and threat monitoring.

As an example of Networking 3.0, Phillip asked: where is your firewall?

“The firewall was previously a physical device, now it’s a virtual device,” said Phillip. “Maybe it’s in the cloud? That’s where disaggregation is; that’s where we should be able to run our implementation in multiple environments, provided through an abstraction model through different layers.

“There’s a whole bunch of systems and open standards existing that the market is moving toward to try to achieve that level of openness. Frankly, we’re not there yet but we’re on the way there.”

Self-driving networks: What does it mean?

One of the core aspects of Networking 3.0 is self-driving networks. This relates to the roles that network engineers take on, and what can be automated.

“In self-driving networks, what we want to be able to do is take humans out so we can solve problems and remove human error. It’s about having the best operating environments and being able to scale out.”

Phillip notes, however, that people will still have a role. Network engineers would still be needed, but perhaps for different tasks.

“Instead of humans running the network, humans run the machines running the network,” said Phillip. “Network engineers need to be managing the machines that run the network automatically for us.”

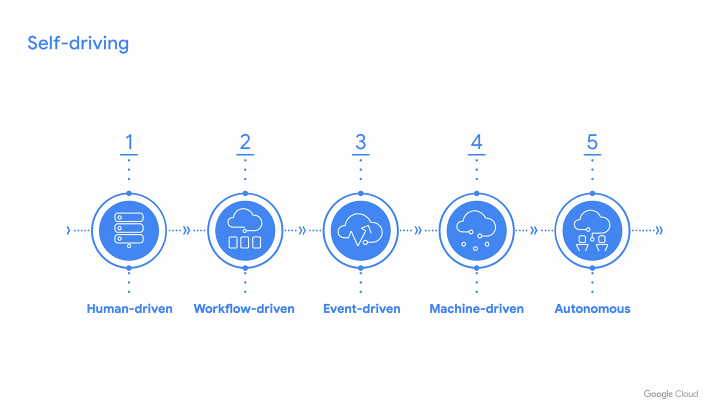

These networks develop through five levels, and even Google hasn’t reached level five yet.

In the early stages, a network requires hands-on input from network engineers for even small tasks and problems. This is a human-driven network. Once software provides a higher level of abstraction, network operators can focus more on their goals, and the network becomes workflow-driven.

From there, a basic level of automation can respond to particular events with pre-set actions in the system. By this point, it has become an event-driven network.
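As a rough illustration of what “pre-set actions” for particular events could look like, here is a minimal Python sketch. It is not Google’s system: the event names (`link_down`, `high_utilisation`), the `Event` class and the action functions are all hypothetical, chosen only to show the pattern of mapping an event to a predefined response and escalating to a person when no response exists.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Event:
    kind: str    # hypothetical event name, e.g. "link_down"
    target: str  # the device or link the event refers to


# Registry mapping an event kind to its pre-set action.
ACTIONS: Dict[str, Callable[[Event], str]] = {}


def on(kind: str):
    """Register a function as the pre-set action for one event kind."""
    def register(func):
        ACTIONS[kind] = func
        return func
    return register


@on("link_down")
def drain_traffic(event: Event) -> str:
    # A real action would call into the network; here we only describe it.
    return f"drain traffic away from {event.target}"


@on("high_utilisation")
def add_capacity(event: Event) -> str:
    return f"request extra capacity for {event.target}"


def handle(event: Event) -> str:
    """Run the pre-set action, or hand over to a human if none exists."""
    action = ACTIONS.get(event.kind)
    if action is None:
        return f"escalate {event.kind} on {event.target} to an engineer"
    return action(event)


if __name__ == "__main__":
    print(handle(Event("link_down", "link-a")))
    print(handle(Event("fan_failure", "router-7")))
```

Anything without a registered action still lands with an engineer, which is roughly the boundary between an event-driven network and the more automated levels that follow.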

When the network operator can step back and run the network via machines rather than hands-on, it can be classed as machine-driven. When the system can handle even more complex tasks, it becomes autonomous, but this remains a vision for the future.

What are the day-to-day differences in a self-driving network?

Let’s take IPv4 addressing as an example. Imagine your network uses IPv4, but you’re running out of addresses. Some of your IPv4 addresses aren’t being put to the best use, but fixing this seems too hard.

Is the problem that you have run out of IPv4 for internal applications, or is it just because you’re not using your address space as efficiently as possible?

In a self-driving network, you would be able to analyse how well your addresses are being used. You could build a machine learning model to work out the right size of your address space, and use APIs to apply the change automatically.
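To make the “right size” idea concrete, the sketch below measures how much of a prefix is in use and suggests a smaller prefix length. It is only an illustration under stated assumptions: it uses a fixed headroom rule rather than the machine learning model mentioned above, the function name `right_size` and the 50% default headroom are invented for this example, and the step of applying the result through an API is left out.

```python
import ipaddress
import math


def right_size(prefix: str, hosts_in_use: int, headroom: float = 0.5):
    """Return (utilisation, suggested prefix length) for an IPv4 prefix.

    Assumes a conventional subnet (/30 or shorter) where the network and
    broadcast addresses are not assignable. The suggestion only ever
    shrinks a prefix; it never proposes one larger than the original.
    """
    network = ipaddress.ip_network(prefix)
    if network.version != 4 or network.prefixlen > 30:
        raise ValueError("expected an IPv4 prefix of /30 or shorter")

    usable = network.num_addresses - 2
    utilisation = hosts_in_use / usable

    # Addresses needed: current hosts plus headroom, plus network/broadcast.
    needed = math.ceil(hosts_in_use * (1 + headroom)) + 2
    # Number of host bits in the smallest power-of-two block that fits.
    host_bits = max(2, (needed - 1).bit_length())
    suggested_len = max(network.prefixlen, 32 - host_bits)
    return utilisation, suggested_len


if __name__ == "__main__":
    used, new_len = right_size("10.0.0.0/22", hosts_in_use=100)
    print(f"utilisation {used:.1%}, suggested prefix length /{new_len}")
```

A rule this simple is easy to audit, which matters for the trust question: an operator can check why a given size was suggested before letting anything act on it.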

But would you trust a machine to resize your address space?

“That’s the kind of problem we’re trying to engage with,” Phillip says. “You need to have a bunch of things in order to do that.”

Abstraction models can also be used to develop more intelligent networks. Software like Multi Abstraction Layer Topology (MALT) can help visualize a network and can be tailored to suit the requirements of a particular vendor’s hardware or software.

“The important thing about the network model is it’s a software representation of what the network looks like,” said Phillip. “That abstraction allows us to orchestrate configuration on the network.”

“The configuration is only a rendering of the network model. But if we want to change vendor, we can create a software representation for that vendor. You can swap out a vendor with almost no work!”
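The idea that configuration is “only a rendering of the network model” can be sketched in a few lines of Python. This is not MALT and not any real vendor’s CLI: the `Router` and `Interface` classes, the `vendor_a` and `vendor_b` names, and both output syntaxes are invented for illustration. The point is only that the model stays the same while the renderer changes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Interface:
    name: str
    address: str  # CIDR notation, e.g. "192.0.2.1/31"


@dataclass
class Router:
    hostname: str
    interfaces: List[Interface]


def render_vendor_a(router: Router) -> str:
    # Invented block-style syntax for a hypothetical "vendor A".
    lines = [f"hostname {router.hostname}"]
    for intf in router.interfaces:
        lines.append(f"interface {intf.name}")
        lines.append(f"  ip address {intf.address}")
    return "\n".join(lines)


def render_vendor_b(router: Router) -> str:
    # Invented one-line-per-setting syntax for a hypothetical "vendor B".
    lines = [f"set system host-name {router.hostname}"]
    for intf in router.interfaces:
        lines.append(f"set interfaces {intf.name} address {intf.address}")
    return "\n".join(lines)


# Swapping vendor means choosing a different renderer; the model is untouched.
RENDERERS: Dict[str, Callable[[Router], str]] = {
    "vendor_a": render_vendor_a,
    "vendor_b": render_vendor_b,
}


def render(router: Router, vendor: str) -> str:
    return RENDERERS[vendor](router)


if __name__ == "__main__":
    edge = Router("edge1", [Interface("eth0", "192.0.2.1/31")])
    print(render(edge, "vendor_a"))
    print(render(edge, "vendor_b"))
```

Supporting another vendor in this sketch means writing one more renderer function, while every router described by the model carries over unchanged.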

This gives network operators more leverage on price when choosing vendors, and lets them change their configuration much more quickly.

The recording of Phillip’s presentation, along with others from the event, remains available on the APNIC 50 conference website.
