Over the past few months, I’ve had the opportunity at various network operator meetings to talk about Border Gateway Protocol (BGP) routing security.

I’ve also used these opportunities to highlight a measurement page APNIC Labs has set up that measures the extent to which Route Origin Validation (ROV) is actually ‘protecting’ users. By this I mean APNIC Labs is measuring the extent to which users are prevented from having their traffic misdirected along what we can call ‘bad paths’ in the inter-domain routing environment by virtue of the network operator dropping routes that are classified as ‘invalid’.

Read: A new way to measure Route Origin Validation

As usual, these presentations included an opportunity for questions from the audience. As a presenter, I’ve found this question and answer segment to be the most fun part of the presentation. It covers topics that I’ve not explained well, things I’ve missed, things I’ve got wrong, and things I hadn’t thought about at all right up to the point when the question was asked!

This is the first post in a series of such questions and my efforts at trying to provide an answer.

Why doesn’t the APNIC Labs’ ROV measurement tool ever show 100%?

Several network service providers clearly perform ingress filtering of route objects where the associated Route Origin Authorization (ROA) shows that the route object is invalid. The networks AS7018 (AT&T Internet) and AS1221 (Telstra) are both good examples, with many more deploying the technology.

Read: Telstra AS1221 strengthens its BGP security with RPKI implementation

The question is: Why doesn’t the measurement tool report 100% for these networks? Why is there ‘leakage’ of users? This means that some users are reaching a site despite the invalid state of the only route object to that site. If the network is dropping invalid routes, then how can this happen?

Several possible explanations come to mind. It could be that there is a small proportion of end-users that use split-horizon VPN tools. While the measurement system reports the user as having an IP address that is originated by a particular network, there might be a VPN tunnel that relocates this user to a different point in the inter-AS topology. However, it’s not a very satisfactory explanation.

There is a different explanation as to why 100% is a largely unobtainable number in this RPKI measurement system. The reason lies in the measurement methodology itself: in the way a control element is implemented, and in the nature of the experiment.

We could’ve designed the experiment to use two beacons. One object would be located behind an IP address that has a valid ROA that never changes. A second object would be located behind an IP address that has a ROA that casts the route object as ‘invalid’. Again, this would never change. What we would be counting is the set of users that can retrieve the first object (valid route object) and not the second (invalid route object).

However, there are a couple of issues with this approach. Firstly, we want to minimize the number of web fetches performed by each user. So, one web fetch is better than two or more in this measurement framework. Secondly, this approach would be incapable of looking at the dynamic capabilities of the overall system. How long does it take for a state change to propagate across the entire routing system?

This thinking was behind APNIC Labs’ decision to build a measurement system that uses a single beacon that undergoes a regular state change. Half of the time the route object is RPKI-valid, which serves as the ‘control’ state, and the other half of the time it is RPKI-invalid (we admit to no Schrödinger’s cat-like uncertainty here: in our case that cat is alive or dead, and there is no in-between!).

This might lead one to believe that an ROV-filtering network would be able to track this state changing, installing a route when the RPKI ROA state indicated a valid route object, and withdrawing the route when the RPKI ROA state indicated an invalid route object. This is only partially true, in that there is an appreciable time lag between a state change in the RPKI repository that publishes the new ROA and the state of the local BGP FIB that processes packet forwarding.
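To make the ‘valid’ and ‘invalid’ states concrete, here is a minimal sketch of the origin validation comparison that an ROV-filtering network applies to each route, in the style of RFC 6811. The `Roa` type, the `rov_state` function and the documentation prefixes and AS numbers are illustrative assumptions, not the code of any particular validator or router.

```python
from ipaddress import ip_network
from typing import NamedTuple


class Roa(NamedTuple):
    """A validated ROA payload: prefix, maximum length and authorized origin AS."""
    prefix: str
    max_length: int
    asn: int


def rov_state(route_prefix: str, origin_asn: int, roas: list[Roa]) -> str:
    """Classify a route as 'valid', 'invalid' or 'not-found' against a ROA set."""
    route = ip_network(route_prefix)
    covered = False
    for roa in roas:
        roa_net = ip_network(roa.prefix)
        # A ROA only 'covers' the route if the route sits inside the ROA prefix.
        if route.version != roa_net.version or not route.subnet_of(roa_net):
            continue
        covered = True
        # Valid needs a matching origin AS and a prefix length within MaxLength.
        if roa.asn == origin_asn and route.prefixlen <= roa.max_length:
            return "valid"
    # Covered by at least one ROA but matched by none: invalid.
    return "invalid" if covered else "not-found"


# The beacon flips between two ROA states for the same announcement (made-up values).
valid_phase = [Roa("192.0.2.0/24", 24, 64496)]
invalid_phase = [Roa("192.0.2.0/24", 24, 64511)]
print(rov_state("192.0.2.0/24", 64496, valid_phase))
print(rov_state("192.0.2.0/24", 64496, invalid_phase))
```

The router only sees the new answer once its local ROA set has been refreshed, which is where the time lag discussed here comes from.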

Once the RPKI repository has published a new Certificate Revocation List (CRL) that revokes the old End Entity certificate and publishes a new End Entity certificate and a new ROA signed by this new certificate, then several processes come into play.

The first is the delay between the RPKI machinery and the publication point. For hosted solutions, this may involve some scheduling and the delays may be appreciable. In our case, all this occurs on a single system and there is no scheduling delay. This is still not instantaneous, but an inspection of the publication point logs indicates that the updated ROA, CRL and manifest files are generated and published all within one second, so the delays at the RPKI publication point, in this case, are small.

Now the remote RPKI clients need to sweep across the repository and discover that there has been a state change. This is the major element of delay. How often should the RPKI client system sweep across all the RPKI repository publication points to see if anything has changed? Don’t forget that there is no update signalling going on in RPKI publication (yes, that’s another question as to why not, and the answer is that publishers don’t know who the clients will be in advance. This is not a closed system.)

Each client needs to determine its ‘sweep frequency’. Some client software uses a two-minute sweep interval, some use 10 minutes, some use one hour. But it is a little more involved than this. The sweep process may start every two minutes, but it takes a finite amount of time to complete.

Some RPKI publication points are slow to access. This means that a ‘sweep’ through all RPKI repository publication points might take a few seconds, or a few minutes, or even many minutes. How frequently your RPKI client system sweeps through the distributed RPKI repository system to identify changes determines the lag in the system.
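A rough way to see how the sweep interval and the sweep duration combine is the small model below. It assumes (my simplification, not a description of any specific client) that sweeps start on a fixed schedule, that a given publication point is fetched `fetch_offset` seconds into each sweep, that results are handed to the router only when the sweep completes, and that a sweep finishes before the next one starts. All names and numbers are illustrative.

```python
import math


def applied_delay(change_at: float, interval: float,
                  duration: float, fetch_offset: float) -> float:
    """Seconds between a repository change and the client acting on it.

    Sweeps start at 0, interval, 2*interval, ... and each takes `duration`
    seconds; the publication point of interest is read `fetch_offset`
    seconds after a sweep starts.
    """
    # Index of the first sweep that reads the publication point after the change.
    first_sweep = max(0, math.ceil((change_at - fetch_offset) / interval))
    # The new state is applied only when that sweep has finished.
    applied_at = first_sweep * interval + duration
    return applied_at - change_at


# A 10-minute interval, a 2-minute sweep, our repository read 30 seconds in.
print(applied_delay(change_at=30, interval=600, duration=120, fetch_offset=30))
print(applied_delay(change_at=31, interval=600, duration=120, fetch_offset=30))
```

In this model a change that just misses the fetch waits for almost a full interval plus the sweep duration, so the lag varies widely from one transition to the next even for a single client.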

Should we perform this sweep in parallel to prevent head-of-queue blocking from stretching the sweep intervals? Maybe, but there is a limit to the number of parallel processes that any system can support, and no matter what number you pick, a slow publication point will still hold up a client.
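The effect of a slow publication point on a parallel sweep can be sketched with a simple worker-pool simulation. The fetch times are invented for illustration, and `sweep_time` is a hypothetical helper rather than part of any RPKI client.

```python
import heapq


def sweep_time(fetch_seconds: list[float], workers: int) -> float:
    """Wall-clock time to fetch every publication point with a fixed worker pool."""
    if workers < 1:
        raise ValueError("need at least one worker")
    free_at = [0.0] * workers  # time at which each worker next becomes idle
    heapq.heapify(free_at)
    finish = 0.0
    for cost in fetch_seconds:
        start = heapq.heappop(free_at)  # hand the fetch to the earliest idle worker
        end = start + cost
        finish = max(finish, end)
        heapq.heappush(free_at, end)
    return finish


# Five quick publication points and one that takes five minutes to respond.
fetches = [2, 3, 300, 1, 2, 4]
print(sweep_time(fetches, workers=1))  # serial: the sum of all fetch times
print(sweep_time(fetches, workers=3))  # parallel: bounded below by the slowest fetch
```

Adding workers removes the queueing behind the slow publication point, but the sweep as a whole still cannot complete faster than that one slow fetch.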

The result? It takes some time for your system to recognize that my system has revoked the previously valid ROA and issued a new ROA that invalidates the prefix announcement (and vice versa). On average it takes 30 minutes for everyone to see the valid to invalid transition and five minutes on the invalid to valid transition.

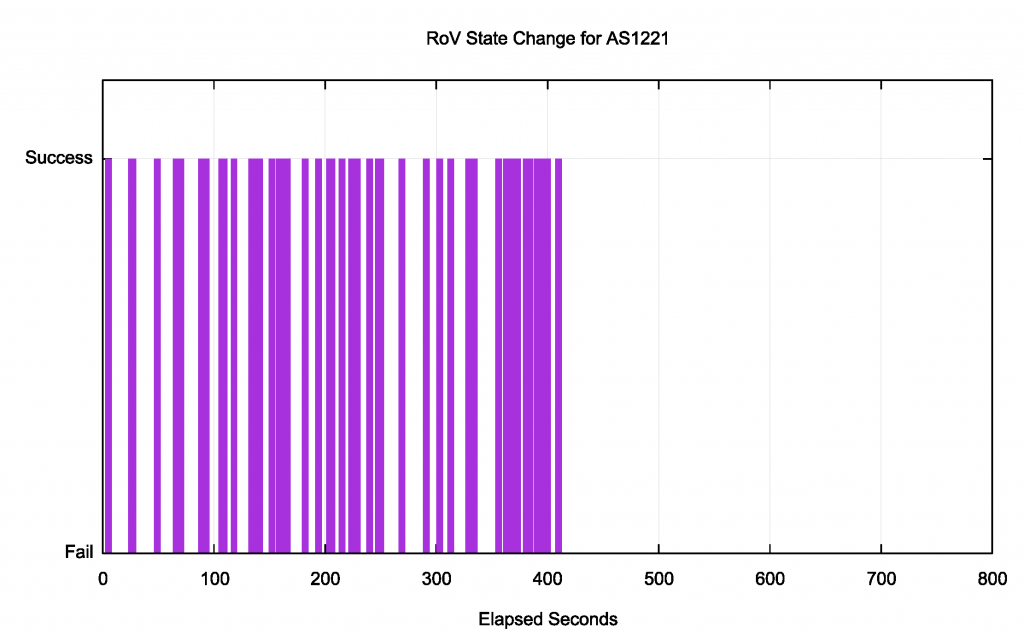

In every seven-day window, there are six ‘shoulder’ periods where there is a time lag between the RPKI repository state change and your routing state. The errant users who are stopping any network from getting to 100% lurk in these shoulder periods. Here’s one snapshot of one such transition period for one network (Figure 1).

In this case, the routing transition took effect somewhere between 410 and 427 seconds after the change in the source RPKI repository. Those 51 sample points out of the one-hour sample count of 319 individual experiments are essentially the ‘errors’ on the fringe of this measurement.

The time lag between the repository state and a network’s routing state is likely the factor that prevents the network from recording a 100% measurement. However, it’s not really the network’s fault.
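As a back-of-the-envelope illustration of how these shoulder samples cap the reported figure, the sketch below uses the 51 and 319 sample counts quoted for this transition. The length of the invalid phase (`invalid_hours`) and the assumption of a constant sample rate are mine, chosen only to show the shape of the calculation.

```python
def leak_share(leaked: int, total: int) -> float:
    """Fraction of samples that still reached the beacon after it turned invalid."""
    return leaked / total


def score_ceiling(leaked: int, samples_per_hour: int, invalid_hours: float) -> float:
    """Best protection score a perfectly filtering network could record.

    Assumes a constant sample rate and that leakage happens only in the
    shoulder at the start of the invalid phase.
    """
    return 1 - leaked / (samples_per_hour * invalid_hours)


print(round(leak_share(51, 319), 3))  # share of the transition hour's samples
print(round(score_ceiling(51, 319, invalid_hours=24), 4))  # hypothetical 24-hour phase
```

Even with flawless ROV filtering, the samples collected before the new state reaches the router keep the score slightly below 100%.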

The RPKI RFC documents are long and detailed (I know, as I authored a lot of them!) but they do not talk about timers and the expected responsiveness of the system. So different implementors made different choices. Some setups are slow to react, others are faster. A clear standard would’ve been helpful here. But we would still have this problem.

The underlying issue is the parallel RPKI credential ‘just in case’ distribution system.

How fast, or how responsive, should the RPKI distribution system be in theory? As fast as BGP propagation is the ‘theoretical’ best answer. The compromises and design trade-offs that were used to construct this system all imposed additional time penalties and made the system less responsive to changes.

What’s egress filtering and why should I do it?

There is a theory that in the yet-to-happen nirvana where every network performs ROV dropping, a peering session between two BGP networks needs only one point of origin validation filtering. Applying a filter on both export and import seems like having double the amount of fun any network should have.

What about the imperfect world of partial adoption?

Earlier this year, one largish network enabled ROV filtering over incoming BGP updates, but then was observed leaking routes with its own AS as the origin AS. Some of these leaked prefixes had ROAs, and ROV would’ve classified these leaked routes as invalid. RFC 8893 on ‘Origin Validation for BGP Export’ specifies that implementations must support ‘egress filtering’ in addition to ‘ingress filtering’. It uses a normative MUST to make the point but fails to provide any further discussion as to whether network operators should enable this function.

If we come back to the leaked route incidents, would egress filtering have prevented this leak? Well not quite. It would’ve stopped leaking those prefixes where there was an extant ROA. Other prefixes, and in a world of partial adoption there are probably many other prefixes, would still have leaked.
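The limits of egress filtering under partial adoption can be shown with a toy export filter. The prefixes and their validation states below are invented for illustration, and `egress_filter` is a hypothetical helper, not a router feature.

```python
def egress_filter(routes: list[tuple[str, str]]) -> list[str]:
    """Return the prefixes still announced after dropping ROV-invalid routes on export."""
    return [prefix for prefix, state in routes if state != "invalid"]


# A leak of three routes, all carrying the leaking network's AS as origin.
leaked = [
    ("192.0.2.0/24", "invalid"),       # a ROA exists and names a different origin AS
    ("198.51.100.0/24", "not-found"),  # no ROA covers this prefix
    ("203.0.113.0/24", "not-found"),   # no ROA covers this prefix
]
print(egress_filter(leaked))
```

Only the prefix with an extant ROA is stopped. The routes with no covering ROA have nothing to be judged against, so they pass the filter and the leak continues.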

In general, it’s true that egress filtering stops a network from ‘telling evil’ in as much as ingress filtering stops a network from ‘hearing evil’, and the combination of ingress and egress filtering is a pragmatic measure when the network is unaware of the origin validation capabilities of a BGP peer. But it’s a senseless duplication of effort when both directly peering networks are performing origin validation.

This occurs because RPKI validation is performed as an overlay to BGP rather than as an integral part of the protocol. Neither BGP speaker is aware when the other is applying an ROV filter to the updates they are announcing or hearing. This structural separation of RPKI from BGP leads to the duplication of effort.

But perhaps there is more to this than simply ingress and egress filtering. It was evident that in leaking these routes the network was announcing more than it had intended. But other networks had no way of knowing that. Now in the magical nirvana of universal adoption of route origination, all these forms of route leak would be evident to all because of the origination mismatch. But that nirvana may be some time off. IPv6 is taking more than two decades. DNSSEC is looking pretty similar. Gone are the days when we could contemplate an uplift of the entire routing infrastructure in a couple of months.

So, pragmatically, we should ask ourselves a slightly different question: “In a world of partial adoption how can we take further steps to mitigate route leaks?” Here some of the earlier efforts in Routing Policy Specification Language (RPSL) might be useful.

ROAs describe permission from a prefix holder for an AS to originate a route for this prefix into the routing system. But that granted permission does not mean that the AS in question has accepted this permission. Indeed, there is no way of knowing in the RPKI the AS’s view of this permission. What is the set of prefixes that the AS intended to originate?

If this information were available, signed by the originating AS of course, then any other network could distinguish between an inadvertent route leak and deliberate intention by passing all the route objects that they see as originating from this AS through such a filter.
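No such signed object exists in the RPKI today, so the following is purely a thought experiment. It imagines a validated table, here called `declared`, mapping each AS to the set of prefixes it says it intends to originate, and shows how a receiving network might use it.

```python
from ipaddress import ip_network


def classify_origination(prefix: str, origin_asn: int,
                         declared: dict[int, set[str]]) -> str:
    """Compare an observed origination against the origin AS's own declared intent.

    `declared` is hypothetical: the output of validating an imagined
    AS-signed 'prefixes I originate' object.
    """
    intended = declared.get(origin_asn)
    if intended is None:
        return "unknown"  # this AS has published no statement of intent
    wanted = {ip_network(p) for p in intended}
    return "intended" if ip_network(prefix) in wanted else "unintended"


declared = {64496: {"192.0.2.0/24"}}
print(classify_origination("192.0.2.0/24", 64496, declared))
print(classify_origination("198.51.100.0/24", 64496, declared))
print(classify_origination("203.0.113.0/24", 64497, declared))
```

A route tagged ‘unintended’ would be a strong hint of a leak, even where no ROA exists for the prefix.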

Are we ever going to secure the AS path?

No! Really?

Without path protection, the entire RPKI structure is still ineffectual as a means of preventing hostile efforts to subvert the routing system. Any would-be hijacker can generate a fake route and others will accept it as long as an authorized AS is used as an originating AS. And poor use of the MaxLength parameter in ROAs and excessive AS prepending in AS paths make route hijacking incredibly simple. If RPKI ROV is a routing protection mechanism, then it’s little different to wearing an impregnable defensive shield made of wet lettuce leaves!

So let’s secure the AS Path and plug the gap! Easier said than done, unfortunately.

The BGPSEC model of AS Path protection is borrowed from the earlier s-BGP model. The idea is that each update that is passed to an eBGP neighbor is signed by the router using the private key of the AS in RPKI. But what is signed is quite particularly specified: It’s the prefix, the AS path and the AS of the intended recipient of the update. Well, actually, it’s a bit more than this. It is signed over the signed AS path that this network received.

As an update is propagated across the inter-AS space the signing level deepens. This signing-over-signing makes any tampering with the AS path incredibly challenging. Any rogue router in the path cannot alter the AS path that they’ve received, as they have no knowledge of the private keys of these ASes, and of course, it cannot sign for future ASes that may receive the route object. And if it does not sign this AS path as it propagates it then the AS path can no longer be validated. So, in theory, it’s trapped and can’t fake the AS Path.
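The signing-over-signing structure can be sketched as a chain in which each hop signs the prefix, its own AS, the AS it is sending to, and the previous hop’s signature. The code below is a toy model only: it uses an HMAC with made-up shared secrets as a stand-in for the public-key signatures of real BGPSEC (so here the verifier needs the secrets, which a real verifier would not), and it omits the other fields that the real protocol signs.

```python
import hashlib
import hmac

# Hypothetical per-AS secrets standing in for RPKI-certified router keys.
KEYS = {64496: b"key-a", 64497: b"key-b", 64498: b"key-c"}


def sign_hop(prefix: str, signer: int, target: int, prev_sig: bytes) -> bytes:
    """Sign (prefix, signer, target) together with the previous hop's signature."""
    msg = f"{prefix}|{signer}|{target}|".encode() + prev_sig
    return hmac.new(KEYS[signer], msg, hashlib.sha256).digest()


def build_update(prefix: str, hops: list[int]) -> list[tuple[int, int, bytes]]:
    """hops = [origin, ..., receiver]; returns one (signer, target, sig) per hop."""
    segments, prev = [], b""
    for signer, target in zip(hops, hops[1:]):
        prev = sign_hop(prefix, signer, target, prev)
        segments.append((signer, target, prev))
    return segments


def verify_update(prefix: str, segments: list[tuple[int, int, bytes]],
                  receiver: int) -> bool:
    """Check every signature and that each hop was addressed to the next signer."""
    if not segments:
        return False
    prev = b""
    for i, (signer, target, sig) in enumerate(segments):
        if not hmac.compare_digest(sig, sign_hop(prefix, signer, target, prev)):
            return False
        next_as = segments[i + 1][0] if i + 1 < len(segments) else receiver
        if target != next_as:
            return False
        prev = sig
    return True


update = build_update("192.0.2.0/24", [64496, 64497, 64498])
print(verify_update("192.0.2.0/24", update, receiver=64498))      # intact path
print(verify_update("192.0.2.0/24", update[:1], receiver=64498))  # middle AS removed
```

Dropping or rewriting any hop breaks either a signature or the hand-off to the next signer, which is what traps a rogue router in the path.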

All good, but there are quite a few practical problems with this approach:

- It’s incredibly expensive to both calculate and validate these signatures. Even if you suppose that we can use high-speed crypto hardware there is still the challenge that we’d like to be able to complete a full BGP session reset in no more than a couple of seconds. That’s of the order of millions of crypto operations per second. That’s hard to achieve on your everyday middle of the road cheap router. Impossible springs to mind.

- Getting private keys to routers is a nightmare!

- Routing policy is not addressed. Protocol-wise it’s quite ok for a multi-homed customer to re-advertise provider-learned route objects to another provider. Policy-wise it’s a real problem and can be as bad, if not worse, than any other form of route leak.

- Partial deployment is integral to this model. AS Path protection can only be afforded to internally connected ‘islands’ and only to prefixes originated within these ‘islands’ and the system cannot bridge gaps.

- High cost. Low benefit.

It was never going anywhere useful.
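The ‘millions of crypto operations per second’ figure in the first point above can be checked with simple arithmetic. The table size and average path length below are round-number assumptions of mine rather than measurements. The one structural fact relied on is that a BGPSEC update carries a single prefix, so every prefix brings its own chain of signatures to validate.

```python
def validations_per_second(prefixes: int, avg_path_len: float,
                           reset_seconds: float) -> float:
    """Signature validations per second needed to reload a full table in time."""
    # One signature per AS in the path, for every prefix in the table.
    return prefixes * avg_path_len / reset_seconds


# Assumed: roughly 900,000 prefixes, four signatures each, a two-second target.
print(f"{validations_per_second(900_000, 4, 2):,.0f} validations per second")
```

Under these assumptions a single session reset needs close to two million signature validations per second, before counting the signing work for outbound updates.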

An old idea first aired in soBGP 20 years ago was dusted off and given a second airing. Here an AS declares its AS neighbors in a signed attestation. The assumption here is that each AS makes an ‘all or nothing’ decision. Either an AS signs an attestation listing for all of its AS neighbors or not. It can’t just list a few.

The implication is that if a party wants to manipulate an AS Path, then if it uses one of the ASes that maintains one of these AS neighbor attestations then it must also add a neighbor AS into the synthesized path (the all-or-none rule). The benefit to an AS of maintaining this neighbourhood attestation is that it’s more challenging to include this AS into a synthetic path, as then an attacker also has to include a listed neighbor AS. As more ASes create such neighbor attestations, they suck in their neighbor ASes. The result is that it is still possible to lie in the AS Path, but the scope for such lies is severely curtailed.

Such AS neighbor attestations can be handled in a manner analogous to ROAs. They are generated and published by the AS owner. Clients can collect and validate these attestations in the same way that they collect and validate ROAs. And the result of a set of permitted AS adjacencies in AS Paths can be passed to a router as an externally managed filter set, much the same as ROAs.
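A filter built from such attestations might look like the sketch below. The `attestations` table stands for the validated output of the hypothetical neighbor attestations, and the AS numbers are from the documentation range. An AS with no attestation places no constraint on the path.

```python
def path_plausible(as_path: list[int], attestations: dict[int, set[int]]) -> bool:
    """Reject a path if any AS with an attestation sits next to an unlisted AS."""
    for left, right in zip(as_path, as_path[1:]):
        if left == right:
            continue  # AS prepending: same AS repeated, nothing to check
        for asn, neighbor in ((left, right), (right, left)):
            listed = attestations.get(asn)
            # All-or-none rule: a published list is taken to be complete.
            if listed is not None and neighbor not in listed:
                return False
    return True


attestations = {64496: {64497}}  # AS64496 declares AS64497 as its only neighbor
print(path_plausible([64498, 64497, 64496], attestations))  # uses the real adjacency
print(path_plausible([64499, 64496], attestations))         # fakes an adjacency
print(path_plausible([64499, 64500], attestations))         # no attestations apply
```

The third case shows the residual gap: a synthetic path made entirely of ASes that publish nothing still passes.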

It’s close to fully signing an AS Path but does not require universal adoption and does not extract a heavy cost on the router. This course of action looks a whole lot more promising, but it still has that policy hole.

And this is where Autonomous System Provider Authorization (ASPA) comes in. It’s still a draft in the SIDROPS working group, but the idea is simple: A customer AS signs an attestation that lists all its provider and peer ASes (all or none, of course). Now there is the concept of policy directionality and a BGP speaker can apply the ‘valley free’ principle to AS paths. It’s not comprehensive AS path protection, but it further limits the space from which synthetic AS paths can be generated and it’s still useful even in the scenario of partial deployment.
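The directionality that ASPA adds can be illustrated with a much-simplified check of the ‘upward’ part of a path, where every hop should go from a customer to an AS that the customer has attested. This is a sketch of the idea only: the verification procedure in the draft is more involved, the `aspa` table is a stand-in for validated attestations, and the path is written origin-first for readability, which is the reverse of how BGP displays it.

```python
def upstream_check(as_path: list[int], aspa: dict[int, set[int]]) -> str:
    """Check that each hop, starting from the origin, goes customer -> attested AS.

    Returns 'valid', 'invalid', or 'unknown' (some AS on the path has no attestation).
    """
    result = "valid"
    for customer, upstream in zip(as_path, as_path[1:]):
        if customer == upstream:
            continue  # AS prepending
        attested = aspa.get(customer)
        if attested is None:
            result = "unknown"  # partial deployment: nothing to compare against
        elif upstream not in attested:
            return "invalid"    # the customer never authorized this upstream AS
    return result


aspa = {64496: {64497}, 64497: {64498}}
print(upstream_check([64496, 64497, 64498], aspa))
print(upstream_check([64496, 64499, 64498], aspa))
print(upstream_check([64500, 64497, 64498], aspa))
```

A leak by a multi-homed customer shows up as a hop that no attestation authorizes, which is the policy hole that the plain neighbor attestation left open.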

But good as it sounds, I’m still not optimistic about its chances for deployment. Not for any technical reason, but for the observation that in the engineering world we appear to have a limited attention span, and we seem to get just one chance to gain attention and access to resources to make the idea work.

ROV is what we came up with and all we came up with at the time. Perhaps, cynically, I suspect that it may be what we are stuck with for the foreseeable future.

Any more questions?

I hope this post, and ultimately this series, sheds some light on the design trade-offs behind the RPKI work so far, and points to some directions for further efforts that would shift the needle with some tangible improvements in the routing space.

As always, I welcome your questions in the comments below, which I may add to and address in this series in time.
