It’s pretty clear that the Internet Protocol has some problems.

One of the major issues here is the ossification of the network due to the constraining actions of various forms of active middleware. The original idea was that the Internet was built upon end-to-end transport protocols (TCP and UDP) layered above a simple datagram Internet Protocol. The network’s active switching elements would only look at the information contained in the IP packet header, and the contents of the ‘inner’ transport packet were purely a matter for the two communicating endpoints.

But that was then, and today is different. Middleware boxes that peer inside each and every packet are pervasively deployed. This has reached the point where it’s now necessary to think of TCP and UDP as network protocols rather than host-to-host protocols.

Middleware has its own set of expectations of ‘acceptable’ packet formats and appears to silently drop errant packets. But what counts as ‘errant’ can be defined by a very narrow set of constraints, imposing tighter conditions on protocol behaviour than the standard specifications would normally permit.

One of the more pressing and persistent problems today is the treatment of fragmented packets.

We are seeing a very large number of end-to-end paths that no longer support the transmission of fragmented IP datagrams. This count of damaged paths appears to be getting larger, not smaller. As with many network level issues, IPv6 appears to make things worse, not better, and the IPv6 fragmented packet drop rates are substantially greater than comparable IPv4 figures.

Two kinds of middleware box appear to play a major role here: firewalls and Network Address Translation (NAT) units. In both cases, these units look inside the transport header to collect the port addresses.

The transport header is used by firewalls to associate a packet with an application, and this is then used to determine whether to accept or discard the packet. NATs use the port addresses to increase the address utilization efficiency, by sharing a common IP address, but differentiating between distinct streams by the use of different port addresses.

The issue with IP packet fragmentation is compounded in IPv6 by the use of an extension header, inserted between the IP and transport headers, to control fragment reassembly. Middleware expecting to see a transport header at a fixed offset within an IPv6 packet has to perform additional work to unravel the chain of extension headers. Some IPv6 devices appear to have been programmed to take a faster path and just drop the packet!

We either can’t or don’t want to clean up this middleware mess, so it’s left to the applications to make use of transport protocols that steer around these network obstacles.

In theory, TCP should be able to avoid this problem. Through conservative choices of the session maximum segment size (MSS), reaction to ICMP Packet Too Big messages (when they are passed through), and, as a last resort, use of Packetization Layer Path MTU Discovery (RFC 4821), it is possible for TCP sessions to avoid wedging on size-related packet drop issues.

But what about UDP? And what about the major client application of UDP, the DNS? Here there is no simple answer.

The original specification of the DNS adopted a very conservative position with respect to UDP packet sizes. Only small responses were passed using UDP, and if the response was larger than 512 bytes then the server was meant to truncate the answer and set a flag to show that the response has been truncated.

Messages carried by UDP are restricted to 512 bytes (not counting the IP or UDP headers). Longer messages are truncated and the TC bit is set in the header (Section 4.2.1, RFC 1035).

The DNS client is supposed to interpret this truncated response as a signal to re-query using TCP, and thus avoid the large size UDP packet issues. In any case, this treatment of large DNS responses was a largely esoteric issue right up until the introduction of DNSSEC. While it was possible to trigger large responses, the vast majority of DNS queries elicited responses smaller than 512 bytes.

The introduction of DNSSEC saw the use of larger responses because of the attached signatures, and the use of this 512-byte maximum UDP response size was seen as an arbitrary limitation that imposed needless delay and inefficiencies on the DNS.

As part of the EDNS(0) specification, an optional field was added to DNS queries: the client’s UDP buffer size. A client could signal its willingness to receive larger packets over UDP by specifying this buffer size.

EDNS(0) specifies a way to advertise additional features such as larger response size capability, which is intended to help avoid truncated UDP responses, and in turn, causes retry over TCP. It, therefore, provides support for transporting these larger packet sizes without needing to resort to TCP for transport (Section 4.3, RFC 6891).

Interestingly, the default UDP buffer size is not commonly set at 1,280 or 1,500 (both of which would be relatively conservative settings) but at the size proposed in the RFC, namely 4,096. We’ve managed to swing the pendulum all the way over and introduce a new default setting that signals that it’s quite acceptable to send large fragmented UDP responses to DNS queries.

A good compromise may be the use of an EDNS maximum payload size of 4,096 octets as a starting point (Section 6.2.5, RFC 6891).

As we’ve already noted, the delivery of large fragmented UDP packets is not very reliable over the Internet. What happens when the fragmented packets are silently dropped? From the querier’s perspective, a lost large UDP response has no residual signal. All the querier experiences is a timeout waiting for an unforthcoming response. So how do DNS resolvers cope with this?

RFC 6891 provides an answer:

A requestor MAY choose to implement a fallback to smaller advertised sizes to work around firewall or other network limitations. A requestor SHOULD choose to use a fallback mechanism that begins with a large size, such as 4,096. If that fails, a fallback around the range of 1,280—1,410 bytes SHOULD be tried, as it has a reasonable chance to fit within a single Ethernet frame. Failing that, a requestor MAY choose a 512-byte packet, which with large answers may cause a TCP retry (Section 6.2.5, RFC 6891).

This search for a size appears simply to take more time in most cases, and the generic algorithm used by DNS resolvers appears to be:

- If the client does not receive a response within the locally defined timeout interval then it should resend the query. These timeout intervals are variously set at 200ms, 370ms, 800ms and 1 second, depending on the resolver implementation.

- Further timeouts should also trigger queries to any other potential DNS servers.

- If these queries also experience a timeout then the client should try the queries using an EDNS(0) UDP buffer size setting of 512 bytes.

- If the client receives a truncated response, then it should switch over to try TCP.
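The generic fallback sequence above can be sketched as a small Python model. It is a simplification under stated assumptions, not any resolver’s actual code: the `Path` type and `resolve` function are hypothetical, the 800ms timeout is one of the values listed above, three large-buffer attempts stand in for the resend and other-server queries, a response is assumed to be fragmented once it exceeds 1,452 octets (the largest unfragmented DNS payload over IPv6 on a 1,500-octet MTU), and TCP round-trip time is ignored.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """Hypothetical properties of the path between resolver and server."""
    fragments_ok: bool  # are fragmented UDP responses delivered?
    tcp_ok: bool        # can a DNS query over TCP complete?


def resolve(path, response_size, attempts=3, timeout_ms=800,
            large_bufsize=4096, max_unfragmented=1452):
    """Walk the generic fallback steps for one query (a sketch).

    Returns (outcome, elapsed_ms, udp_queries) where outcome is "udp",
    "tcp" or "fail". TCP round-trip time is not modelled.
    """
    elapsed, queries = 0, 0
    # Initial query, resend, then other servers, all with a large
    # EDNS(0) buffer size.
    for _ in range(attempts):
        queries += 1
        if response_size > large_bufsize:
            break  # the server truncates straight away
        if response_size <= max_unfragmented or path.fragments_ok:
            return "udp", elapsed, queries
        elapsed += timeout_ms  # fragmented response silently dropped
    else:
        # Every large-buffer query timed out, so re-query with a 512-byte
        # buffer; the (large) response comes back truncated.
        queries += 1
    # A truncated response triggers a switch to TCP.
    return ("tcp" if path.tcp_ok else "fail"), elapsed, queries
```

For example, `resolve(Path(fragments_ok=False, tcp_ok=True), 1625)` returns `("tcp", 2400, 4)`: three timed-out queries and a 512-byte re-query before TCP is even attempted.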

Even that truncated search for a response involves a lot of time and potentially a lot of packets. If large response packets were uncommon in the DNS, or fragmented packet drop was incredibly rare, then maybe this overhead would be acceptable. However, that’s not the case.

Large responses are not uncommon when using DNSSEC. For example, a query for the signed keys of the .org domain elicits a 1,625-octet response. Inevitably, that response is sent as a fragmented UDP response if the query offers a large EDNS(0) UDP buffer size.
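To see why a 1,625-octet response is fragmented, the fragment arithmetic can be sketched as follows. This assumes a 1,500-octet path MTU, an IPv4 header without options, and an IPv6 packet carrying only the Fragment extension header; real paths may differ, and `fragment_count` is a hypothetical helper.

```python
import math


def fragment_count(dns_payload, mtu=1500, ipv6=False):
    """Number of IP packets needed to carry a DNS response over UDP."""
    udp_datagram = dns_payload + 8  # 8-octet UDP header
    ip_header = 40 if ipv6 else 20  # IPv6 fixed header / IPv4 without options
    if ip_header + udp_datagram <= mtu:
        return 1  # fits in a single unfragmented packet
    # Each fragment repeats the IP header (plus the 8-octet Fragment
    # extension header in IPv6), and fragment data must be a multiple of 8.
    per_fragment = mtu - ip_header - (8 if ipv6 else 0)
    per_fragment -= per_fragment % 8
    return math.ceil(udp_datagram / per_fragment)
```

Under these assumptions `fragment_count(1625)` and `fragment_count(1625, ipv6=True)` both return 2, while a 512-octet response fits in one packet.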

Fragmented packet drop is also depressingly common. Earlier work in September 2017 showed a failure rate of 38% when attempting to deliver fragmented IPv6 UDP packets via the DNS recursive resolvers.

As previously noted when reporting on this alarmingly high drop rate:

“…one conclusion looks starkly clear to me from these results. We can’t just assume that the DNS as we know it today will just work in an all-IPv6 future Internet. We must make some changes in some parts of the protocol design to get around this current widespread problem of IPv6 Extension Header packet loss in the DNS, assuming that we want to have a DNS at all in this all-IPv6 future Internet.”

Read: Dealing with IPv6 fragmentation in the DNS

So how can we get around this drop rate for large DNS responses?

We could move the DNS away from UDP and use TCP instead. That move would certainly make a number of functions a lot easier, including encrypting DNS traffic on the wire as a means of assisting with aspects of personal privacy online as well as accommodating large DNS responses.

However, the downside is that TCP imposes a far greater load overhead on servers, and while it is possible to conceive of an all-TCP DNS environment, it is more challenging to understand the economics of such a move and to understand, in particular, how name publishers and name consumers will share the costs of a more expensive name resolution environment.

If we want to continue to use UDP where it’s feasible, and use TCP only as the ‘Plan B’ protocol for name resolution, then can we improve the handling of large responses in UDP? Specifically, can we make this hybrid approach, of using UDP when we can and TCP only when we must, faster and more robust?

The ATR proposal

An approach to address this challenge is that of ‘Additional Truncated Response’ (documented as an Internet Draft: draft-song-atr-large-resp-00, September 2017, by Linjian (Davey) Song of the Beijing Internet Institute).

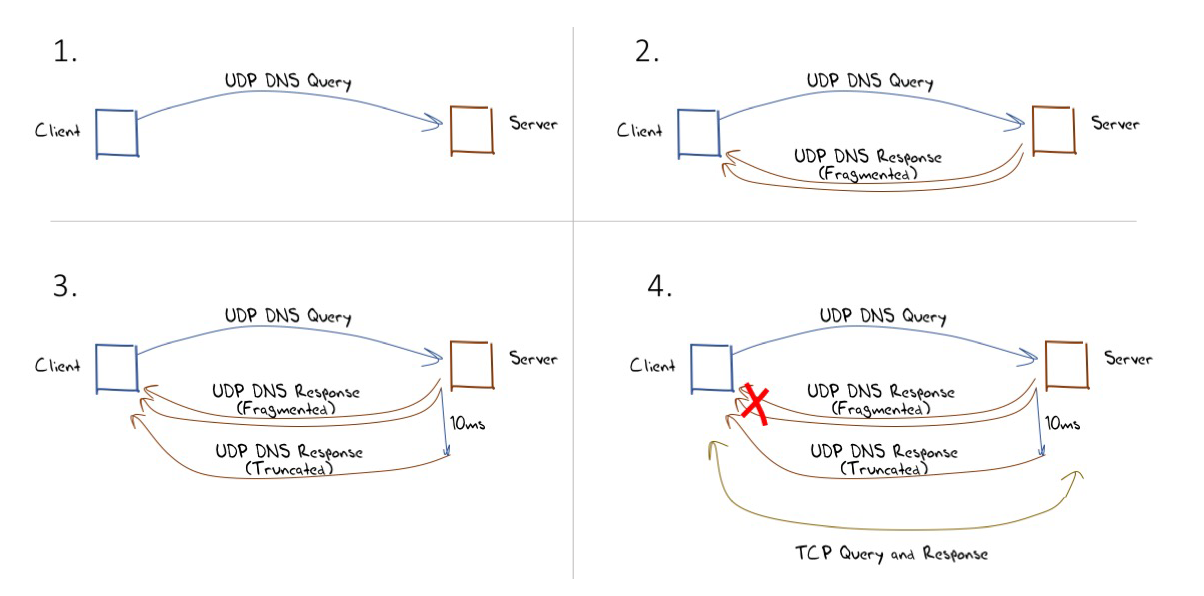

The approach described in this draft is simple: if a DNS server provides a response that entails sending fragmented UDP packets, then the server should wait for 10ms and then also send back the original query as a truncated response. If the client receives and reassembles the fragmented UDP response, then the ensuing truncated response will be ignored by the client’s DNS resolver, as its outstanding query has already been answered. If the fragmented UDP response is dropped by the network, then the truncated response will be received (as it is far smaller), and reception of this truncated response will trigger the client to switch immediately to re-query using TCP. This behaviour is illustrated in Figure 1.
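The client-side effect of ATR can be sketched as a race between the two responses. This is a hypothetical model (`atr_outcome` does not come from the draft): the server sends the fragmented response at time zero and the truncated copy 10ms later, `extra_delay_ms` stands for any additional delay the fragmented response suffers in transit, and the resolver acts on whichever response arrives first.

```python
def atr_outcome(fragments_delivered, extra_delay_ms=0.0,
                atr_delay_ms=10.0, tcp_ok=True):
    """Return how a resolver ends up answering a query served with ATR.

    Both responses are assumed to share the same base network latency, so
    only their relative send times and any extra delay matter.
    """
    # (arrival time relative to the base latency, response kind)
    arrivals = [(atr_delay_ms, "truncated")]
    if fragments_delivered:
        arrivals.append((extra_delay_ms, "full"))
    # The first response answers the outstanding query; the later one is
    # ignored because no query is outstanding any more.
    # (On an exact tie, "full" sorts first and wins.)
    _, first = min(arrivals)
    if first == "full":
        return "answered over UDP"
    # A truncated response triggers an immediate re-query over TCP.
    return "answered over TCP" if tcp_ok else "failed"
```

The case `atr_outcome(True, extra_delay_ms=15)` returns `"answered over TCP"`: if the fragmented response is held up by more than the ATR delay, the truncated copy gets there first and triggers an unnecessary TCP re-query.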

How well does ATR actually work?

We’ve constructed an experiment to test this proposed mechanism.

The approach used here was that of ‘glueless delegation’ (described by Florian Maury, ANSSI at the May 2015 DNS OARC Workshop).

When the authoritative server for the ‘parent’ zone is queried for a name that is defined in a delegated ‘child’ zone, the parent zone server will respond with the name servers of the delegated child zone. But, contrary to conventional server behaviour, the parent zone server will not provide the IP addresses of these child zone name servers.

The recursive resolver that is attempting to complete the name resolution task now must suspend this original resolution task and resolve this name server name into an IP address. Only when it has completed this task will it then be able to revert to the original task and pass a query to the child zone servers.

What this means is that we can use the DNS itself to test the capabilities of those resolvers that query authoritative servers. If the response that provides the IP address of the child zone name servers is constructed to exhibit the behaviour being measured, then a subsequent query from the resolver to the child zone name servers shows that it was able to receive that response.
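The inference behind the glueless delegation technique can be sketched as a check over the query log of the child zone’s authoritative servers. The log format and the `received_response` helper are hypothetical, and the names and addresses in the test are documentation examples.

```python
def received_response(log, resolver, child_zone):
    """Did `resolver` go on to query the child zone?

    log: hypothetical list of (source_ip, qname) pairs recorded by the child
    zone's authoritative servers. Such a query can only follow once the
    resolver has learned the child name servers' addresses, i.e. once it
    has received the crafted response under test.
    """
    zone = child_zone.rstrip(".").lower()
    for source_ip, qname in log:
        name = qname.rstrip(".").lower()
        if source_ip == resolver and (name == zone or name.endswith("." + zone)):
            return True
    return False
```

A resolver that never appears in the child zone’s log is counted as having failed to receive the crafted response.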

Using the glueless delegation technique we constructed six tests:

- The first pair of tests used ATR over IPv4 and IPv6. In this case, we constructed a fragmented UDP response by appending a NULL Resource Record (RR) into the response as an additional record, generating a response of 1,600 octets. We configured the server to deliberately ignore the offered UDP buffer size (if any) and generated this UDP fragmented response in all cases. The server then queued up a truncated response that was fired off 10ms after the original response.

- The second pair of tests used just the large packet response in both IPv4 and IPv6. In this case, the server was configured to send a large fragmented UDP response in all cases, and never generated a truncated response.

- The third pair of tests was the truncated UDP response in IPv4 and IPv6. Irrespective of the offered UDP buffer size, the server echoed the query with an empty response part and the truncated flag set.
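The server side of the three test pairs can be summarised as the list of packets sent for each query. This is a sketch, not the experiment’s server code: `ServerMode` and `planned_responses` are hypothetical names, and the truncated response is approximated as the size of the query it echoes.

```python
from enum import Enum


class ServerMode(Enum):
    ATR = "atr"              # fragmented response, then a truncated copy
    LARGE = "large"          # fragmented response only
    TRUNCATED = "truncated"  # truncated response only


def planned_responses(mode, query_size, large_size=1600, atr_delay_ms=10):
    """Packets sent for one query, as (send_delay_ms, kind, size_octets).

    The offered EDNS(0) buffer size is deliberately ignored, as in the tests.
    """
    full = (0, "full", large_size)  # padded with a NULL RR in the additional section
    if mode is ServerMode.LARGE:
        return [full]
    if mode is ServerMode.TRUNCATED:
        return [(0, "truncated", query_size)]  # echo of the query, TC flag set
    return [full, (atr_delay_ms, "truncated", query_size)]  # ServerMode.ATR
```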

This allowed us to measure the extent to which large fragmented UDP responses fail on the paths between our authoritative name servers and the resolvers that pose queries to these servers.

It also allowed us to measure the extent to which these resolvers are capable of using TCP when given a truncated response.

We can also measure the extent to which resolvers make use of the trailing truncated response provided by ATR.

We performed these tests over 55 million endpoints, using an online ad distribution network to deliver the test script across the Internet.

Table 1 shows the results of this experiment, looking at the behaviour of each resolver as identified by its IP address.

| Protocol | Visible Resolvers | Fail Large UDP | Fail TCP | Fail ATR |
|---|---|---|---|---|
| IPv4 | 113,087 | 40% | 21% | 29% |
| IPv6 | 20,878 | 50% | 45% | 45% |

Some 40% of the IPv4 resolvers failed to receive the large fragmented UDP response, which is a disturbingly high number. Perhaps even more disturbing is the observed IPv6 failure rate, which is an astounding 50% of these visible resolvers when a server is sending the resolver a fragmented UDP response.

The TCP failure numbers are not quite as large, but again they are surprisingly high. Some 21% of the IPv4 resolvers were incapable of completing the resolution task if they were forced to use TCP. The IPv6 number is more than double, with 45% of the IPv6 resolvers running into problems when attempting to use TCP.

The ATR approach was seen to assist resolvers. In IPv4 the ATR failure rate was 29%, some 11 percentage points lower than the large UDP failure rate, indicating that a little over 10% of all visible resolvers were unable to receive a fragmented UDP response but were able to switch over to TCP and complete the task. The IPv6 ATR failure rate was 45%, a 5 percentage point improvement over the underlying fragmented UDP failure rate.
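The resolver figures in Table 1 can be checked with a little arithmetic: the gap between the large UDP and ATR failure rates is read here as the share of visible resolvers that ATR moved onto TCP successfully. The `atr_rescue` helper is hypothetical.

```python
def atr_rescue(fail_large_udp, fail_atr):
    """Return (points, share): the percentage points of resolvers that ATR
    moved from failure to success, and that as a share of the resolvers
    that could not receive the fragmented UDP response."""
    points = fail_large_udp - fail_atr
    return points, points / fail_large_udp


# Failure rates (%) from Table 1.
for proto, large_udp, atr in [("IPv4", 40, 29), ("IPv6", 50, 45)]:
    points, share = atr_rescue(large_udp, atr)
    print(f"{proto}: {points} points, {share:.1%} of large-UDP failures")
```

For IPv4 this gives 11 percentage points, or 27.5% of the resolvers that failed to receive the fragmented response; for IPv6 it gives 5 points, or 10%.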

When looking at the DNS, counting the behaviour of resolvers should not be used to infer the impact on users. In the DNS the most heavily used 10,000 resolvers by IP address are used by more than 90% of users. If we want to understand the impact of ATR on DNS resolution behaviours, as experienced by users, then we need to look at this measurement from the user perspective.

For this user perspective measurement, we count a ‘success’ if any resolver invoked by the user can complete the DNS resolution process, and a ‘failure’ otherwise. The user perspective results are shown in Table 2.
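The user-perspective counting rule can be sketched as follows. The data layout is hypothetical: each user maps to the list of outcomes of the resolvers that user’s query passed through, and a user counts as a failure only if none of them completed the resolution.

```python
def user_failure_rate(users):
    """Share of users for whom no resolver completed the resolution.

    users: mapping of user id -> list of booleans, one per resolver invoked
    (True if that resolver completed the DNS resolution process).
    """
    failures = sum(1 for outcomes in users.values() if not any(outcomes))
    return failures / len(users)
```

With `{"a": [False, True], "b": [False], "c": [True, True]}` the rate is one in three: user "a" has a failing resolver but still counts as a success because a second resolver completed the task.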

| Protocol | Fail Large UDP | Fail TCP | Fail ATR |
|---|---|---|---|
| IPv4 | 13% | 4% | 4% |
| IPv6 | 21% | 8% | 6% |

These results indicate that in some 9% of IPv4 cases the use of ATR by the server will improve the speed of resolution of a fragmented UDP response by signalling to the client an immediate switch to TCP to perform a re-query. The IPv6 behaviour would improve the resolution times in 15% of cases.

The residual ATR failure rates appear to be those cases where the DNS resolver lies behind a configuration that discards both DNS responses using fragmented UDP and DNS responses using TCP.

Reasons to use ATR?

The case for ATR certainly looks attractive if the objective is to improve the speed of DNS resolution when passing large DNS responses.

In the majority of cases (some 87% in IPv4, and 79% in IPv6) the additional truncated response is not needed, and the fragmented UDP response will be successfully processed by the client. In those cases, the trailing truncated UDP response is not processed by the client, as the previously processed fragmented UDP response has cleared the outstanding request queue entry for this query.

In cases where fragmented UDP responses are being filtered or blocked, the ATR approach spares the client a number of timeout and re-query cycles before it queries using an effective EDNS(0) UDP buffer size of 512 and receives the truncated response that triggers TCP. This eliminates a number of query packets and reduces the resolution time.

ATR is incrementally deployable. ATR does not require any special functionality on the part of the DNS client, as it is exclusively a server-side function. And the decision for a server to use ATR can be made independently of the actions of any other server. It’s each server’s decision whether or not to use ATR.

Reasons not to use ATR?

It is not all positive news with ATR — there are some negative factors to consider when deciding to use it.

ATR adds a further UDP packet to a large fragmented DNS response. This assists an attacker using a DNS DDoS attack vector, as the same initial query stream will generate more packets and a larger byte count when the ATR packet is added to the large DNS responses.
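The cost of the extra packet can be estimated in terms of amplification, the octets a server sends back per octet of query. The 64-octet query size below is a hypothetical figure, the 1,600-octet response matches the experiment’s large response, the truncated copy is assumed to be about the size of the query it echoes, and IP and UDP headers are ignored.

```python
def amplification(query_size, response_size, atr=False):
    """Octets sent back per octet of query (DNS payload only).

    With ATR the server also sends a truncated copy, assumed here to be
    about as large as the query it echoes.
    """
    sent = response_size + (query_size if atr else 0)
    return sent / query_size


QUERY = 64       # hypothetical query size in octets
RESPONSE = 1600  # large response size used in the experiment
print(amplification(QUERY, RESPONSE))            # 25.0 (without ATR)
print(amplification(QUERY, RESPONSE, atr=True))  # 26.0 (with ATR)
```

The byte ratio rises only modestly, but the packet count per query also rises from two fragments to three packets (assuming the large response is split into two fragments).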

The choice of the delay timer is critical. Inspection middleware often reassembles packet fragments in order to ensure that the middleware operation is applied to all packets in the fragmented IP packet sequence. At the destination host, the fragmented packet is also reassembled at the IP level before being passed to the UDP packet handler. Both steps add delay to the fragmented response, which implies that the unfragmented ATR packet can ‘overtake’ the fragmented response and generate unnecessary TCP activity.

To prevent this, the delay needs to be long enough to take into account this potential additional delay in the handling of fragmented packets. It also needs to take into account some normal level of packet jitter in the network. But too long a delay would allow for the client to timeout and re-query, negating the incremental benefit of ATR. That implies that the setting of the delay should be ‘long enough, but not too long’.
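The ‘long enough, but not too long’ constraint can be expressed as a simple check. This is a hypothetical sketch, not a rule from the draft: `extra_delays_ms` stands for measured extra delivery delays of fragmented responses, the jitter allowance is a free parameter, and keeping the delay under half of the client’s retransmission timeout is an assumed safety margin.

```python
def choose_atr_delay(extra_delays_ms, jitter_ms, client_timeout_ms, margin=0.5):
    """Return a candidate ATR delay in ms, or None if no safe value exists.

    The delay must cover the worst observed extra delay of fragmented
    responses plus some jitter (long enough), yet leave the client's
    retransmission timer well short of expiring (not too long).
    """
    delay = max(extra_delays_ms) + jitter_ms
    return delay if delay < margin * client_timeout_ms else None
```

With the shortest resolver timeout mentioned earlier, 200ms, a measured worst case of 7ms plus 3ms of jitter gives 10ms, the delay proposed in the draft; a path that delays fragments by 120ms leaves no safe setting.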

ATR assessment

The DNS is a vitally important component of the Internet, and a more robust DNS that can help provide further security for users is a good thing. However, there are no free rides here, and among the costs of adding DNSSEC to the DNS is the issue of inflating the size of DNS responses. And larger DNS responses make the DNS slower and less reliable.

ATR does not completely fix the large response issue. If a resolver cannot receive fragmented UDP responses and cannot use TCP to perform DNS queries, then ATR is not going to help.

But where there are issues with IP fragment filtering, ATR can make the inevitable shift of the query to TCP a lot faster than it is today. But it does so at a cost of additional packets and additional DNS functionality.

If a faster DNS service is your highest priority, then ATR is worth considering.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.

I have had a few questions via Twitter, which I’ve answered below (as some things can’t be answered with a single tweet):

Q/ If ATR is going to be used, might it be appropriate for the authoritative server to be more willing to use it if the client is able to use DNS cookies to confirm that it actually can receive traffic sent to its claimed source address?

Firstly, ATR applies to BOTH recursive servers and authoritative servers – i.e. it can be used anywhere the DNS sends a response.

Secondly, I think the issue of cookies is slightly off the mark: after all, the truncated response is only there to trigger a TCP exchange, if it is needed, and the TCP exchange effectively requires that the other end is genuine.

So I tend to disagree and I am of the view that DNS cookies would not really add to the effectiveness of ATR. It adds to the overhead without necessarily making the resolution more efficient.

Q/ If we had a way for a DNS response to be sent as several unfragmented UDP packets instead of a single fragmented UDP packet, would that work better?

A venerable DNS topic. The idea is that instead of using IP-level fragmentation and reassembly, the DNS application handles fragmentation and reassembly itself. Proponents of DNS session-level fragmentation argue it is better; others disagree. The major practical stumbling block these days to such an approach is middleware: many middleware boxes expect to see a properly formatted DNS message when they inspect a UDP packet being sent from port 53. If there is some application-level session protocol going on, then there is a high likelihood of the packet being dropped. It is probable (though not measured, as far as I am aware) that the drop rate today for such application-level framed packets would actually be higher than for fragmented packets!

So I am unsure that such an approach would work ‘better’ than today’s behaviour.

Q/ If resolvers are allowed to just use TCP first now, might it be appropriate for resolvers to determine at startup whether they’re behind a middlebox that breaks UDP and if so be more aggressive about trying TCP sooner?

Resolvers can already initiate a TCP session without receiving a truncated response first; it’s all stateless. They don’t because a) it takes longer and b) it puts strain on the server.

Q/ Do we have good data on the trade-offs between backbones just giving up on making UDP work during DDoS attacks vs authoritative servers needing more processing resources to handle TCP?

Another large topic. We have a lot of experience in defending against large scale UDP DNS attacks. Shifting all DNS queries to TCP would place the cost burden on all DNS servers while the UDP defence, while by no means always effective, is highly cost efficient.

Q/ To the extent that the 10,000 most popular resolvers used by 90% of the users end up not following the standards, is it possible to work with the operators to get them fixed?

Possibly, but as the DNS is a highly distributed system getting such a message out and getting infrastructure upgraded is messy and difficult.

Q/ Does a carefully optimized authoritative server handling DNS over TCP in conjunction with TCP Fast Open need significantly more processing resources than DNS over UDP for responses under 1400 bytes (with standard Ethernet MTU)?

(Well, the standard Ethernet MTU is 1,500, not 1,400.)

Could TCP be more ‘efficient’? Yes, it could. But what would be the effort to move away from the current status quo? The larger the Internet gets the more we tend to rely on small incremental changes deployed in a piecemeal fashion.

ATR is a small hack on servers when they serve large responses. Large responses are relatively rare, so it’s hard to justify changing the entirety of the DNS to use TCP just to suit the demands of large packets. If anything pushes the DNS over to TCP, it’s likely that it will be privacy and security in response to widespread DNS interception, not the handling of large responses.

Looking at the Wikipedia article for TCP Fast Open makes me think it is not as aggressive as I had assumed it would be. Obviously we’d like a resolver to be able to send a single packet to an authoritative server with a DNS query and get a single packet back with the DNS answer (if it’s small enough) so that the whole thing only takes a single round trip (multiple packets sent nearly simultaneously work fine too for bigger answers). If TCP Fast Open doesn’t allow the initial packet to include a data payload, why doesn’t it do that? And if TCP Fast Open were enhanced to the point where a single packet from the client to the server could open the connection, include the query, and indicate that the client is willing to have the connection closed as soon as the answer has been sent, would that sort of TCP processing put any more strain on the server than UDP processing does?

When I find myself needing to configure middleboxes to do NAT because I don’t have a globally routable v4 address for every device, it certainly is my intention that the middlebox shouldn’t be looking inside the UDP payload for packets that happen to involve port 53, but I think I’ve had an experience with a Vendor J product looking inside the payload of port 53 packets when I’d been under the impression that it wasn’t going to be doing so.

My thought regarding cookies is that if an authoritative server receives a cookie which provides proof that the requester can read packets destined to the claimed source address, then it becomes possible to be reasonably confident that the authoritative server can send a larger response than the request without having to worry that it is participating in a DDoS attack, and so the full ATR response should be relatively safe when the cookie is present in that fashion; without the cookie, the authoritative server might want to be more careful about how many bytes it is sending. On the other hand, I suspect cookies are not widely deployed yet, and it’s possible that sites properly deploying cookie support may tend to have less broken UDP paths and thus might be less likely to benefit from ATR.

Do you have data on what percentage of the 10,000 most popular recursive resolvers sometimes or always run into problems with large UDP packets? What about the 1,000 or 100 most popular? How easy or hard is it to identify technical contacts for the 100 or 1000 most popular (or more interestingly, the broken subset thereof)?

I’ve heard a rumor that the distributed code execution platform that you use to run code on random people’s client devices has an optional feature that some of their customers use which displays messages to the humans who use those client devices, and I was also under the impression that sometimes those humans respond to those messages effectively enough that the transactions motivate folks to run the client device code execution platform in the first place. Maybe you could see if messages asking people to help APNIC get in touch with the technical people who run the network could get results.

I was thinking that if a DDoS attack causes a backbone to drop nearly all UDP packets, having DNS automatically fall back to TCP might also be useful.

With the 1400 bytes, I was thinking of a UDP payload, excluding the IP and UDP headers, that happens to be 1400 bytes; I’m not sure exactly how big the IP and UDP headers are, but if the DNS answer itself is 1500 bytes and then you add IP and UDP headers, the whole thing will not fit into a packet which is subject to the standard 1500 byte Ethernet MTU. Indeed, you quoted RFC 6891 mentioning “a fallback around the range of 1,280—1,410 bytes” to fit within a standard Ethernet frame.
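The header arithmetic behind this exchange can be sketched as follows, assuming a 1,500-octet Ethernet MTU, an IPv4 header without options, an IPv6 header with no extension headers, and the 8-octet UDP header. The helper name is hypothetical.

```python
def max_unfragmented_dns_payload(mtu=1500, ipv6=False):
    """Largest DNS message that fits in a single unfragmented UDP packet."""
    ip_header = 40 if ipv6 else 20  # IPv6 fixed header / IPv4 without options
    udp_header = 8
    return mtu - ip_header - udp_header


for label, ipv6 in [("IPv4", False), ("IPv6", True)]:
    print(label, max_unfragmented_dns_payload(ipv6=ipv6))
```

This gives 1,472 octets of DNS payload over IPv4 and 1,452 over IPv6, so a 1,400-octet DNS message fits in a single Ethernet frame in both cases while a 1,500-octet one does not; at the IPv6 minimum MTU of 1,280 the limit drops to 1,232 octets.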