At connection start, a Transmission Control Protocol (TCP) sender wanders in uncharted waters: it has no information about the current network conditions and the available capacity.

To quickly probe the network capacity without congesting the network, TCP employs slow start, during which it exponentially increases the congestion window, which governs the number of bytes allowed in flight, until a congestion event such as packet loss is detected.

The Initial Congestion Window (IW) defines the amount of data sent at the very start of this exponential increase, which is especially important for short-lived connections such as classic web transfers. Operators thus have a powerful but dangerous parameter to tweak: values that are too large can cause severe losses at a bottleneck when the IW overshoots the capacity, whereas values that are too small inflate latency.
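To make the latency effect concrete, here is a minimal sketch that counts the round trips an idealized slow start needs to deliver a short response. It assumes no loss, no delayed ACKs, a window that doubles every round trip, and a 1460-byte segment size; the function name and the 30 kB object size are made up for illustration, and the request round trip itself is not counted.

```python
def round_trips(response_bytes: int, iw_segments: int, mss: int = 1460) -> int:
    """Count the round trips slow start needs to deliver response_bytes.

    Idealized model: no loss, no delayed ACKs, and the congestion window
    doubles every round trip, starting from iw_segments.
    """
    segments_left = -(-response_bytes // mss)  # ceiling division
    cwnd = iw_segments
    rounds = 0
    while segments_left > 0:
        segments_left -= cwnd  # send one full window in this round trip
        cwnd *= 2              # exponential growth during slow start
        rounds += 1
    return rounds


if __name__ == "__main__":
    # Hypothetical 30 kB web object, i.e. 21 segments of 1460 bytes.
    for iw in (1, 2, 4, 10):
        print(f"IW {iw:>2}: {round_trips(30_000, iw)} round trips")
```

Under these assumptions the object needs five round trips with IW 1 but only two with IW 10, which is why the IW matters so much for short transfers.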

For this reason, the IETF is very conservative about standardizing increased IWs. Over the last three decades, the IW was standardized to 1, 2, 3, and 4 segments; the current recommendation, driven by Google’s effort in 2010, is 10 segments worth of data.

IW 10 has also been the default in the Linux kernel since version 2.6.39, released in 2011. Yet, it can typically be freely configured, for example with the ip route command in Linux. There is a trend to tailor it to different networks based on past observations, as discussed in the recent ICDCS paper "Riptide: Jump-Starting Back-Office Connections in Cloud Systems". We have also started to see such customizations at CDN providers.

Motivated by this customization and the IW's importance during connection startup, we sought to shed light on IW usage in the Internet by scanning the entire IPv4 address space for initial window configurations. We presented the results in "Large-Scale Scanning of TCP’s Initial Window" at SIGCOMM IMC 2017. As the IW is not advertised in the TCP header and is only kept locally at each endpoint, we designed a probing scheme that tests IW configurations.

How we measured IWs

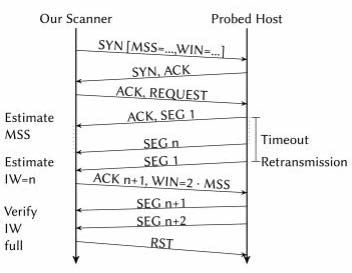

Figure 1 provides a schematic of the process we used to probe for IWs.

After establishing a TCP connection in which we announce a small segment size (remember, the IW is typically defined in multiples of the segment size), we send a request to the target. In our work, we targeted HTTP and HTTPS servers, so the request is either an HTTP request for the ‘/’ page or a TLS handshake. The server then produces a reply and sends it to us.
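A small announced segment size helps because the reply only fills the IW if it is larger than the IW measured in bytes. The sketch below illustrates the arithmetic; it assumes the server honors the announced segment size and counts its IW in segments, and the value 64 is an illustrative choice rather than the one used in the study.

```python
def bytes_to_fill_iw(iw_segments: int, mss: int) -> int:
    """Payload bytes a reply must exceed to fill an IW counted in segments."""
    return iw_segments * mss


if __name__ == "__main__":
    # 1460 is a common Ethernet-sized MSS; 64 is an assumed small MSS.
    for mss in (1460, 64):
        for iw in (4, 10):
            print(f"MSS {mss:>4}, IW {iw:>2}: reply must exceed "
                  f"{bytes_to_fill_iw(iw, mss)} bytes")
```

With a full-sized segment, an IW 10 server would need a reply of more than 14,600 bytes to reveal its window, whereas with the small segment size a few hundred bytes suffice.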

However, at the start the server is not allowed to transmit more than its IW worth of data.

In contrast to a regular TCP receiver, we do not acknowledge receipt of the segments, which prevents the target from increasing its congestion window.

As TCP is reliable, the server will eventually retransmit the first segment. This shows us that it cannot transmit more data until we acknowledge receipt, so the data we received up to that point is exactly the initial congestion window that we wanted to measure. Unfortunately, the reply might simply not have been large enough to fill the initial window. To test for this, we start acknowledging data and check whether more data arrives; if it does, we have successfully measured the IW.
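The inference step can be sketched as follows. This is a simplified illustration rather than our scanner's code: the function, the type, and the input format (relative sequence number and payload length per segment, in arrival order) are assumptions made for the example, and loss or reordering on the path is ignored.

```python
from typing import List, NamedTuple, Optional, Tuple


class Estimate(NamedTuple):
    iw_segments: int     # segments received before the first retransmission
    iw_bytes: int        # payload bytes received before the first retransmission
    window_filled: bool  # True if more data arrived once we started ACKing


def estimate_iw(segments: List[Tuple[int, int]], mss: int,
                more_after_ack: bool) -> Optional[Estimate]:
    """Estimate the IW from segments captured while sending no ACKs.

    segments holds (relative_seq, payload_len) tuples in arrival order.
    Returns None if no retransmission was seen, because the initial burst
    may then still be incomplete.
    """
    seen = set()
    total = 0
    for seq, length in segments:
        if seq in seen:  # retransmission: the sender has used up its window
            break
        seen.add(seq)
        total += length
    else:
        return None      # loop ended without observing a retransmission

    iw_segments = -(-total // mss)  # ceiling division
    return Estimate(iw_segments, total, more_after_ack)


if __name__ == "__main__":
    # Ten 64-byte segments, then the first segment again (a retransmission).
    trace = [(i * 64, 64) for i in range(10)] + [(0, 64)]
    print(estimate_iw(trace, mss=64, more_after_ack=True))
```

When `window_filled` is false, the reply ended before the window was exhausted, so the value is only a lower bound on the IW.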

Organizing Internet-wide scans

We coordinated our scanning activities with our local IT center and provided a website from the scanning machines explaining the nature of the scans as well as showing how to opt out. Furthermore, our HTTP scanner explained the nature of the scan in the user-agent header and reverse DNS entries hinted at the nature of the data.

Initial window configurations in 2017

Unsurprisingly, our scans (Figure 2) reveal the generally slow adoption of new Internet standards. Even though the IETF recommended IW 10 nearly five years ago, we still find many systems using older recommendations (for example, IW 2 and IW 4). Many of these systems could benefit from larger IWs simply by updating to a more recent operating system version.

Figure 2: TCP Initial Window Configurations in IPv4. IWs are dominated by RFC recommended values. Services using HTTP tend to better follow current RFC recommendations. Furthermore, our results show that scanning a random 1% of IPv4 instead of 100% makes no practical difference to the general distribution.

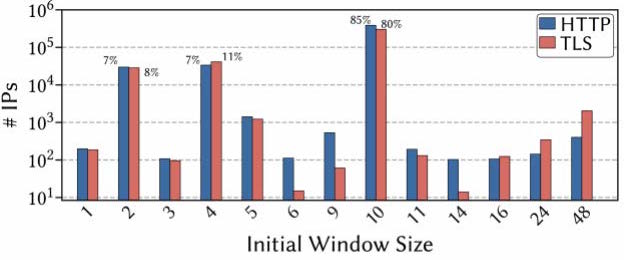
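The observation that a random 1% sample reproduces the overall distribution is what basic sampling statistics would predict. The sketch below illustrates this on a synthetic population; the IW values and their weights are invented for the example and are not our measured results.

```python
import random
from collections import Counter


def iw_shares(iws):
    """Return the fraction of hosts per IW value."""
    counts = Counter(iws)
    total = len(iws)
    return {iw: n / total for iw, n in counts.items()}


rng = random.Random(42)  # fixed seed so the run is repeatable

# Synthetic population of one million hosts; the weights are invented.
population = rng.choices([1, 2, 4, 10], weights=[5, 30, 25, 40], k=1_000_000)
sample = rng.sample(population, k=len(population) // 100)  # random 1%

full, part = iw_shares(population), iw_shares(sample)
max_gap = max(abs(full[iw] - part.get(iw, 0.0)) for iw in full)
print(f"largest difference in share: {max_gap:.4f}")
```

The sampling error of a share shrinks roughly with the square root of the sample size, so even this toy sample of 10,000 hosts tracks the full population closely, and a 1% sample of real responsive hosts would be considerably larger.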

When we limit our view to the Alexa Top 1 million list (Figure 3), the picture changes. Operators hosting such popular sites tend to keep their systems much more up to date, as suggested by over 80% IW 10 adoption.

Figure 3: TCP Initial Window Configurations in the Alexa Top 1 million list (note the log scale). IWs of these ‘popular’ services tend to be more up to date with recommended values; we can also see some degree of customization.

Overall, the main result of our study is that IW configuration is rather service dependent. We found many of the older IW values on legacy devices, for example, home gateways in ISP access networks. We also noted a certain degree of customization, indicated by non-standard IWs, which we mostly found in typical server networks. For example, Akamai enables per-service and even per-customer IW configurations. Since these services are virtualized, analyzing such service-specific (host-specific) configurations requires prior knowledge of valid host names/URLs, a requirement our generalized methodology intentionally avoids in order to be applicable to the Internet at large.

Continuous measurements

To continuously monitor IW trends in the Internet, we regularly probe a random 1% of the IPv4 address space and publish the findings on our project’s website: https://iw.comsys.rwth-aachen.de/

We believe that this enables informed standardization decisions by shedding light on current operational practices and can further help operators monitor general Internet trends.

Thanks to Oliver Hohlfeld who co-authored this post.

Jan Rüth is a PhD student at the Chair for Communication and Distributed Systems at RWTH Aachen University in Germany. Oliver Hohlfeld is a researcher at the same institute leading the Network Architectures group.


Interesting research. Is there any variance between ipv4 and ipv6?


Interesting point! We did not measure v6. In theory, no difference should exist since the IW is configured at the TCP layer. However, in practice the IW distribution of v6 servers could be skewed towards more modern values (e.g. 10) due to the likely absence of old/legacy systems.