Telcos deal with a considerable number of multivendor devices. Although many hope or expect these devices to be equipped with state-of-the-art telemetry technologies, most of the time they’re not. For this reason, legacy protocols such as Simple Network Management Protocol (SNMP) are still relevant, and concepts such as standardized data modelling are not widely adopted.

However, within this conservative context, some new telemetry technologies are emerging, and their adoption by major Internet Service Providers (AT&T, Comcast, Verizon, BT, Deutsche Telekom, Swisscom …) is making them a de facto standard in the world of network telemetry.

Recently, I’ve been tasked with taking a closer look at some of these technologies as part of a project to develop an application based on the gRPC (a recursive acronym for gRPC Remote Procedure Call) framework to collect data modelled via YANG from a multivendor network.

In this post, I’ll provide a brief introduction to some of these technologies I’ve come across and describe the core features of the gRPC dial-out collector we’ve developed.

What is Model-Driven Telemetry?

The goal of Model-Driven Telemetry (MDT) is to assist network operators with choosing the right technologies to improve the way they monitor and automate their networks. For example, MDT uses data PUSH instead of the traditional PULL model to improve scalability.

MDT also helps ensure that network data is standardized across different vendors by using modelling languages such as YANG. With essentially no competitors, YANG is the data modelling language chosen by telcos to describe network devices’ configuration and state.

This information, modelled via YANG, transits through the network using protocols such as NETCONF/RESTCONF and is encoded using XML/JSON. The protocol operations are performed as Remote Procedure Calls (RPCs) and the data sent/received by the devices is carried over SSH/HTTPS.

In 2016, Google released a new RPC framework called gRPC, which, together with NETCONF/RESTCONF, has been adopted by all major vendors to send/receive data from the network.

Compared to NETCONF/RESTCONF, gRPC is:

- Generally faster to develop with. It uses protocol buffers as the Interface Description Language and an ad-hoc compiler to ‘automagically’ generate the associated skeleton code.

- Supportive of multiple programming languages out-of-the-box, giving you the freedom to choose the one that best fits your skills, independently of the existing implementations (the protobuf file predefines the specs for both client and server).

- Efficient and scalable. It takes advantage of the efficiency of HTTP/2 and, thanks to protocol buffers, the exchanged data is binary encoded, which considerably reduces the message size.

Currently, the main gRPC-based telemetry implementations are gNMI and gRPC dial-in/dial-out.

gNMI (gRPC Network Management Interface) uses the gRPC framework and a standardized protobuf file to implement a solution to fully operate the network.

With gRPC dial-in/dial-out, the data stream is always pushed from the router. In the case of dial-in, the connection is initiated by the collector; with dial-out, it is initiated by the router. The biggest benefit of dial-in over dial-out is that you get a single channel usable for both telemetry and router configuration.

Introducing the MDT dial-out collector

The mdt-dialout-collector application we’ve developed uses the gRPC framework to implement a multi-vendor gRPC dial-out collector; it supports Cisco’s gRPC dial-out .proto file, Juniper’s gRPC dial-out .proto file and Huawei’s gRPC dial-out .proto file (see Table 1).

| Libraries / .proto files | License |
| --- | --- |
| JsonCpp | MIT License |
| librdkafka | BSD 2-Clause License |
| Modern C++ Kafka API | Apache License Version 2.0 |
| libconfig | LGPL v2.1 |
| rapidcsv | BSD 3-Clause License |
| spdlog | MIT License |
| gRPC | BSD 3-Clause License |
| Cisco dial-out .proto | Apache License Version 2.0 |
| Cisco telemetry .proto | Apache License Version 2.0 |
| Junos Telemetry .proto (download section) | Apache License Version 2.0 |
| Huawei dial-out .proto | N/A |
| Huawei telemetry .proto | N/A |
| mdt-dialout-collector | MIT License |

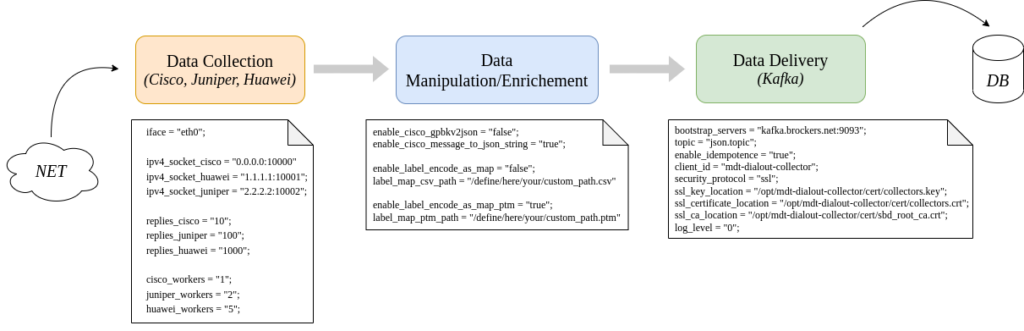

The collector’s functionalities are logically grouped into three categories, each of which includes multiple options. The data collection block includes all configuration parameters associated with the behaviour of the daemon, the data manipulation/enrichment block takes care of conveniently transforming the in-transit data stream, and the Kafka producer block delivers the resulting stream to Kafka.

Depending on the selected vendor/operating system, the supported encodings are JSON, GPB-KV and GPB-Compact (Huawei openconfig-interfaces) (Table 2).

| Vendor | OS (version@platform) | Encoding | .proto file |
| --- | --- | --- | --- |
| Cisco | XR (7.4.1@NCS-540) | JSON, GPB-KV | XR Telemetry .proto |
| Cisco | XE (17.06.01prd7@C8000V) | GPB-KV | XR Telemetry .proto |
| Juniper | Junos (20.4R3-S2.6@mx10003) | JSON-GNMI | Junos Telemetry .proto (Download section) |
| Huawei | VRP (V800R021C10SPC300T@NE40E) | JSON | VRP Telemetry .proto |
| Huawei | VRP (V800R021C10SPC300T@NE40E) | GPB-Compact | OpenConfig Interfaces .proto |

Configuration parameters

The default file locations follow the Filesystem Hierarchy Standard (FHS) recommendation; therefore, by default:

- The binary is available at “/opt/mdt-dialout-collector/bin/mdt_dialout_collector”

- The configuration should be available at “/etc/opt/mdt-dialout-collector/mdt_dialout_collector.conf”

However, an alternative configuration location can be specified on the command line with the -f flag.

Below is a verbosely commented example of the configuration:

```
#### mdt-dialout-collector - main

## writer-id:
## default = "mdt-dialout-collector"
writer_id = "mdt-dout-collector-01";

## physical interface where to bind the daemon
iface = "eth0";

## socket dedicated to Cisco's data-stream
ipv4_socket_cisco = "0.0.0.0:10007";

## socket dedicated to Huawei's data-stream
ipv4_socket_huawei = "0.0.0.0:10008";

## socket dedicated to Juniper's data-stream
ipv4_socket_juniper = "0.0.0.0:10009";

## network replies: fine control on the amount of messages received within a single
## session - valid range: "0" < replies < "1000" - (default = "0" => unlimited)
replies_cisco = "10";
replies_juniper = "100";
replies_huawei = "1000";

## workers (threads) per vendor:
## default = "1" | max = "5"
cisco_workers = "1";
juniper_workers = "1";
huawei_workers = "1";

## logging:
## Syslog support:
## default => syslog = "false" | facility (static) default => LOG_USER
syslog = "true";

## Syslog Facility:
## default => syslog_facility = "LOG_USER" | supported [LOG_DAEMON, LOG_USER, LOG_LOCAL(0..7)]
syslog_facility = "LOG_LOCAL3";

## Console support:
## default => console_log = "true"
console_log = "false";

## Severity level:
## default => spdlog_level = "info" | supported [debug, info, warn, error, off]
spdlog_level = "debug";

#### mdt-dialout-collector - data-flow manipulation

## simplified JSON after GPB/GPB-KV decoding:
## default = "true"
enable_cisco_gpbkv2json = "false";

## standard JSON after GPB/GPB-KV decoding:
## default = "false"
enable_cisco_message_to_json_string = "true";

## data-flow enrichment with node_id/platform_id:
## default = "false"
## for additional details refer to "csv/README.md" or "ptm/README.md"

## CSV format:
## default = "false"
enable_label_encode_as_map = "false";

## label_map_csv_path:
## default = "/opt/mdt_dialout_collector/csv/label_map.csv"
label_map_csv_path = "/define/here/your/custom_path.csv";

## PTM format (pmacct's pretag):
## default = "false"
enable_label_encode_as_map_ptm = "true";

## label_map_ptm_path:
## default = "/opt/mdt_dialout_collector/ptm/label_map.ptm"
label_map_ptm_path = "/define/here/your/custom_path.ptm";

#### mdt-dialout-collector - kafka-producer
# https://github.com/edenhill/librdkafka/blob/master/CONFIGURATION.md
bootstrap_servers = "kafka.brokers.net:9093";
topic = "json.topic";
enable_idempotence = "true";
client_id = "mdt-dialout-collector";
# valid options are either plaintext or ssl
security_protocol = "ssl";
ssl_key_location = "/opt/mdt-dialout-collector/cert/collectors.key";
ssl_certificate_location = "/opt/mdt-dialout-collector/cert/collectors.crt";
ssl_ca_location = "/opt/mdt-dialout-collector/cert/sbd_root_ca.crt";
log_level = "0";
```

How to build it

It’s recommended that you compile gRPC and all the associated libraries from scratch. gRPC’s Quick start guide describes the compile/install procedure in detail. If you’re running a Debian-derived Linux distribution, you can also refer to the Alfanetti documentation.

You’ll also require libraries such as jsoncpp, librdkafka, libconfig and spdlog, as below:

```
Debian$ sudo apt install libjsoncpp-dev librdkafka-dev libconfig++-dev libspdlog-dev
CentOS$ sudo yum install jsoncpp-devel librdkafka-devel libconfig-devel spdlog-devel
```

If you refer to the Alfanetti documentation to compile gRPC, you might want to export your local install directory (where the gRPC libraries and headers are installed) and add its bin folder to the $PATH variable:

```
$ export MY_INSTALL_DIR=$HOME/.local
$ export PATH="$MY_INSTALL_DIR/bin:$PATH"
```

On CentOS, you might need to modify the pkg-config path to allow CMake to find all the required libraries:

```
$ export PKG_CONFIG_PATH=$PKG_CONFIG_PATH:/usr/local/lib/pkgconfig/
```

On CentOS, the spdlog library should use the external fmt library:

Uncomment the line #define SPDLOG_FMT_EXTERNAL in /usr/include/spdlog/tweakme.h before running the make command.

To clone, compile and run the collector (compiler version >= gcc-toolset-11), proceed as follows:

```
$ cd /opt
$ git clone https://github.com/scuzzilla/mdt-dialout-collector.git
$ cd mdt-dialout-collector
$ mkdir build
$ cd build
$ cmake ../
$ make
$ /opt/mdt-dialout-collector/bin/mdt_dialout_collector
```

If you have any questions or wish to share your feedback, leave a comment below. We’re looking for more contributors.

Adapted from the original post which appeared on Salvatore’s Blog.

Salvatore is an ICT System Engineer at Swisscom.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.