The Transport Layer Security protocol (TLS) is used every minute to secure billions of web and email connections. Since its first specification in 1999 — or the mid-90s, if you count its earlier incarnation as Secure Sockets Layer (SSL) — the protocol has undergone many upgrades, most notably in 2008 (TLS 1.2) and then again in 2018 (TLS 1.3).

For most of its life, studying the deployment of the TLS protocol at Internet scale was not technically possible. Over the last ten years, however, this has changed.

Recently, my colleagues and I at the University of Twente, RWTH Aachen University, University of Sydney, IMDEA Networks, the International Computer Science Institute (ICSI), and the Brandenburg University of Technology presented the results of a multi-year study into the rollout of TLS 1.3 in ACM SIGCOMM’s Best of CCR session (the paper is published in ACM SIGCOMM CCR). What we found can be described as a story of experimentation and centralization.

In this post, I will give a summary of our study and present our thoughts on the future development of open Internet protocols.

- TLS 1.3 enjoyed considerable, unprecedented support in the wild from the draft stage onward. This support was experimental in nature, as reflected in rapid update cycles.

- Facebook and Google used their control over both client and server endpoints to experiment with the protocol to ultimately deploy it at scale.

- The early deployment of TLS 1.3 was driven by a small number of very large cloud providers, although we also found (later) support in the networks of smaller hosting providers across several ccTLDs.

- The strong centralization of the Internet is also why deployment proceeded at a rapid pace once standardization was complete. However, the pace was far from uniform across the cloud providers we investigated.

- TLS 1.3 is an example of how Internet technology is developed and refined by a small number of players. We make the case that this can be beneficial as well as dangerous and that we should carefully consider how business incentives and the public interest can be aligned.

How TLS 1.3 came about (and why its deployment is so different)

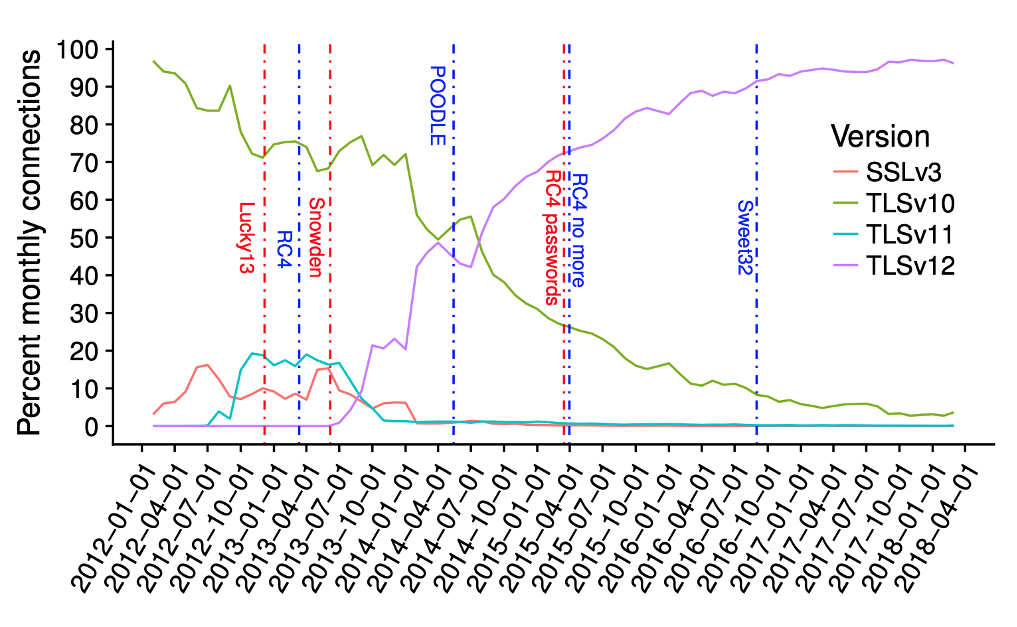

To understand why the deployment of TLS 1.3 was so special, we need to look at the deployment of its predecessors: TLS versions 1.0, 1.1, and 1.2, plus the older SSL 3 (Figure 1). The thing to keep in mind here is that TLS 1.2 was standardized in 2008. Yet until the second half of 2013, we see almost no connections using it! This changed abruptly after the Snowden revelations in June 2013, when browsers and servers hurried to switch to more modern cryptography. Note how earlier attacks on TLS, such as Lucky 13 and the breaking of RC4, had an almost negligible effect in comparison.

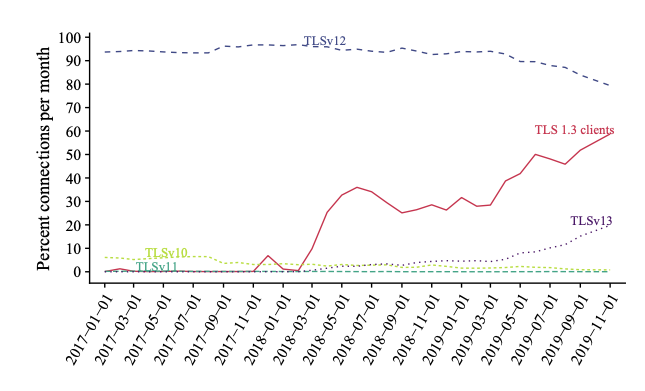

Now contrast this with the deployment of TLS 1.3 and its support in observed TLS connections (Figure 2). The red curve indicates support for TLS 1.3 by clients. The new protocol was standardized in August 2018, but we found client support predating that by at least half a year, as well as some earlier support (later withdrawn) in 2017. In addition, the first servers began supporting it in early 2018.
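Client-side support of the kind plotted in Figure 2 is visible in the ClientHello: under RFC 8446, a client lists the versions it can speak in the `supported_versions` extension, and pre-standard drafts used the codepoint 0x7F00 plus the draft number. The following minimal sketch is not the study's tooling; it assumes you have already extracted the raw extension body from a captured ClientHello, and it discards the GREASE placeholder values defined in RFC 8701.

```python
import struct

TLS13_FINAL = 0x0304   # TLS 1.3 as standardized in RFC 8446
DRAFT_PREFIX = 0x7F00  # drafts were advertised as 0x7F00 + draft number


def is_grease(version: int) -> bool:
    """GREASE values (RFC 8701) have the form 0x?A?A with both bytes equal."""
    return (version & 0x0F0F) == 0x0A0A and (version >> 8) == (version & 0xFF)


def parse_supported_versions(body: bytes) -> list[int]:
    """Parse the ClientHello form of the supported_versions extension body:
    a 1-byte length prefix followed by a list of 2-byte version codepoints."""
    if not body:
        raise ValueError("empty extension body")
    length = body[0]
    if length % 2 != 0 or 1 + length > len(body):
        raise ValueError("malformed version list")
    # Unpack big-endian (network byte order) unsigned 16-bit values.
    versions = [v for (v,) in struct.iter_unpack("!H", body[1:1 + length])]
    return [v for v in versions if not is_grease(v)]


def advertises_tls13(versions: list[int]) -> bool:
    """True if the client offers final TLS 1.3 or a standard-encoded draft."""
    return any(v == TLS13_FINAL or (v & 0xFF00) == DRAFT_PREFIX for v in versions)


# Example body: a GREASE value (0x7A7A), then TLS 1.3 and TLS 1.2.
hello_ext = bytes([0x06, 0x7A, 0x7A, 0x03, 0x04, 0x03, 0x03])
offered = parse_supported_versions(hello_ext)
print([hex(v) for v in offered], advertises_tls13(offered))  # prints ['0x304', '0x303'] True
```

Checking for the 0x7F00 prefix only recognizes drafts that used the standard encoding; vendor-specific experimental codepoints would appear as unrecognized values.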

Deployment trajectory of TLS 1.3

Clearly, TLS 1.3 was different from the start. Independently of each other, researchers from several European, North American, and Australian research institutes had begun to collect data on the deployment and use of TLS 1.3 as early as 2017, across both the web and the Android app ecosystem. Once we learned of each other’s data, we pooled our resources to paint a more complete picture of what was going on with TLS 1.3.

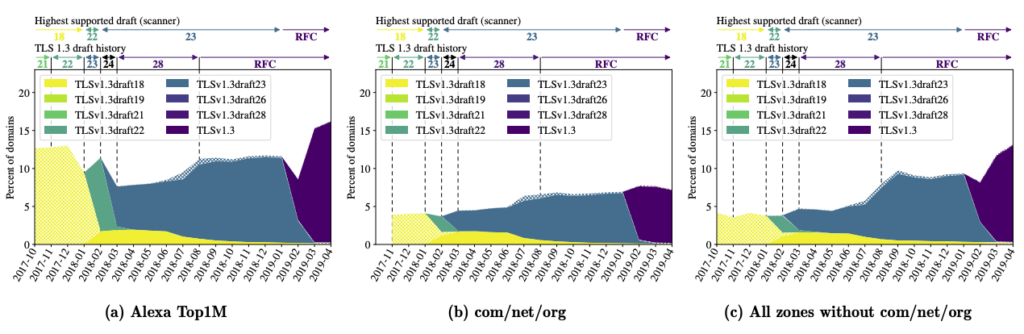

Starting in October 2017, we scanned up to 300M Internet domains on the HTTPS port at least once a week. Figure 3 (a-c) shows which TLS 1.3 versions we found and how often. Two things stand out: even early drafts (with bugs that were later fixed) were already in use, and deployments moved from one draft to the next very quickly. We found this not only for the domains on the Alexa Top 1M list but also across all DNS zones we scanned, which include com/net/org and the new generic top-level domains (TLDs), plus the country-code TLDs of more than 50 economies.
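A distribution like the one in Figure 3 can be derived by labeling the version each server negotiated. As a hypothetical sketch, assuming the scanner stores one negotiated version codepoint per domain (the study's actual data format is not described here), the following maps codepoints to readable labels and computes each TLS 1.3 variant's share of the TLS 1.3 results:

```python
from collections import Counter

# Standard version codepoints; TLS 1.3 drafts used 0x7F00 + draft number.
NAMES = {0x0300: "SSL 3.0", 0x0301: "TLS 1.0", 0x0302: "TLS 1.1",
         0x0303: "TLS 1.2", 0x0304: "TLS 1.3"}


def is_tls13_variant(v: int) -> bool:
    """Final TLS 1.3 or any standard-encoded TLS 1.3 draft."""
    return v == 0x0304 or (v & 0xFF00) == 0x7F00


def version_label(v: int) -> str:
    """Map a version codepoint to a human-readable label."""
    if v in NAMES:
        return NAMES[v]
    if (v & 0xFF00) == 0x7F00:
        return f"TLS 1.3 draft {v & 0xFF}"
    return f"unknown (0x{v:04x})"


def draft_distribution(negotiated: list[int]) -> dict[str, float]:
    """Share of each TLS 1.3 variant among results that negotiated TLS 1.3."""
    counts = Counter(version_label(v) for v in negotiated if is_tls13_variant(v))
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}
```

For example, `draft_distribution([0x7F12, 0x7F12, 0x7F17, 0x0303])` attributes two-thirds of the TLS 1.3 results to draft 18 and one-third to draft 23; the TLS 1.2 result is excluded from the breakdown. Running this per weekly scan would give one column of a stacked chart over time.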

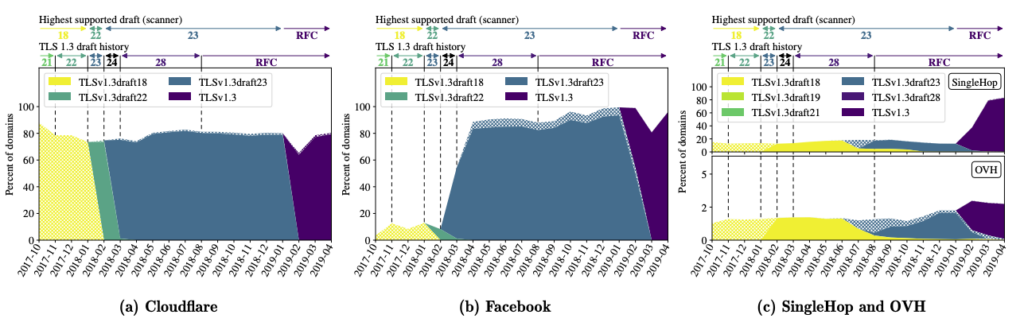

Figure 4 suggests one reason for this early adoption: large players such as Cloudflare were early adopters. Cloudflare hosts a very large share of domains, especially under the new generic TLDs. Google’s and Facebook’s servers, plus smaller cloud providers such as OVH, also supported the new protocol from very early on. Interestingly, Microsoft Azure and Amazon AWS did not support TLS 1.3 even by late 2019, when our scans ended. The providers that did support TLS 1.3 also switched to new drafts quickly. Cloudflare made TLS 1.3 the default for customers in its Free and Pro tiers in late 2016 (but not for the higher tiers, which followed in 2018). Notably, the numbers for Cloudflare stayed relatively constant after that, suggesting that its better-paying customers did not opt in.

Facebook’s case is perhaps even more interesting. Although our data here has limitations in sample size and bias (see our paper), our statistics allowed us to identify how Facebook experimented with the new protocol. From early 2017, the Facebook and Instagram apps used TLS 1.3 versions that identified themselves as custom versions of the drafts. When we enquired, we were told that the custom versions were identical to the draft ones except for the version string. This allowed Facebook to run its servers in a kind of dual mode: draft TLS 1.3 for its apps, and standard versions (for example, TLS 1.2) for anyone visiting its sites in a browser.
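The dual mode described above amounts to a version-selection rule on the server. The following sketch is purely illustrative: `APP_EXPERIMENT` is a made-up placeholder, not the codepoint Facebook actually used, and a real server would perform this selection inside its TLS stack rather than in application code.

```python
from typing import Optional

# Hypothetical private codepoint recognized only by the operator's own apps.
# This is a made-up placeholder, NOT the value Facebook actually used.
APP_EXPERIMENT = 0xFA17

# Standard versions offered to everyone else, highest preference first.
# This models the pre-standardization period, so final TLS 1.3 (0x0304) is absent.
SERVER_STANDARD = [0x0303, 0x0302, 0x0301]  # TLS 1.2, 1.1, 1.0


def select_version(client_versions: list[int]) -> Optional[int]:
    """Choose the version to negotiate from the client's advertised list."""
    # Only the operator's own apps advertise the private codepoint.
    if APP_EXPERIMENT in client_versions:
        return APP_EXPERIMENT
    # Everyone else (e.g. browsers) gets the best mutually supported standard version.
    for version in SERVER_STANDARD:
        if version in client_versions:
            return version
    return None  # no common version: the handshake would fail
```

Because browsers never advertise the private codepoint, they never reach the experimental path, so the operator can iterate on its apps and servers together without affecting browser users.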

TLS 1.3 on a centralized Internet

Our data shows that support for TLS 1.3 on the server-side continued to grow steadily after standardization.

For Alexa-listed domains, for example, it was already around 16 to 17% in May 2019 and grew to around 20% within six months. We found similarly high numbers for the new generic TLDs. Hosting centralization played a big role in this — Cloudflare hosted around 14% of all TLS-enabled domains on the Alexa list in May 2019, and a whopping 60% of all domains with TLS 1.3. In contrast, the next-biggest cloud operator, Google, was responsible for just 11% of TLS 1.3-enabled domains.

For generic TLDs, we found these numbers to be even more impressive. Note, however, that this did not translate into a comparable share of actual connections to these domains: Cloudflare was responsible for a mere 7% of TLS 1.3 connections, showing that most domains in those TLDs received very little traffic.
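The gap between a provider's share of TLS 1.3 domains and its share of TLS 1.3 connections comes from weighting the same providers in two different ways. A minimal sketch with invented toy counts (the provider names and numbers are placeholders chosen only to echo the contrast in the text, not measurements from the study):

```python
def share_by(counts: dict[str, int]) -> dict[str, float]:
    """Normalize per-provider counts into fractions of the total."""
    total = sum(counts.values())
    return {p: n / total for p, n in counts.items()} if total else {}


def compare_shares(domains: dict[str, int],
                   connections: dict[str, int]) -> dict[str, tuple[float, float]]:
    """Return (domain_share, connection_share) for every provider seen in either input."""
    d, c = share_by(domains), share_by(connections)
    return {p: (d.get(p, 0.0), c.get(p, 0.0)) for p in domains.keys() | connections.keys()}


# Toy counts for illustration only; NOT data from the study.
tls13_domains = {"provider_a": 600, "provider_b": 110, "others": 290}
tls13_connections = {"provider_a": 70, "provider_b": 500, "others": 430}

for provider, (dom, conn) in sorted(compare_shares(tls13_domains, tls13_connections).items()):
    print(f"{provider:>10}: {dom:6.1%} of domains, {conn:6.1%} of connections")
```

Here `provider_a` hosts 60% of the TLS 1.3 domains but carries only 7% of the connections: a provider can dominate a domain count by hosting many rarely visited sites, while a provider with fewer but popular domains dominates traffic.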

Deployment is much less pronounced in the com/net/org space and in country-code TLDs, where hosting is spread across many more operators. Interestingly, we found that centralization also exists at a regional level. For example, most domains under the Danish, Ukrainian, and Swedish TLDs are not hosted by Cloudflare, but each of these economies has individual operators of outsized importance that host a substantial number of domains. For example, one.com hosts about one-third of Swedish and Danish domains. This provider supports TLS 1.3, and more than 50% of Danish domains have it enabled. We consider this an example of hierarchical centralization.

TLS 1.3 deployment also varied considerably depending on whether a domain was operated by a particular industry or by a government. We checked this for the top 100,000 domains on the Alexa list (where TLS 1.3 deployment was about 27% on average). Deployment was much higher than average for sites that we could categorize as entertainment or social, such as blogs, games, and social networking. The adult industry was a particularly keen adopter, with about 45% of adult sites supporting the new protocol.

Unsurprisingly, more conservative industries (banks, finance) and governments were well below average. Around 15% of the former supported TLS 1.3 and around 5% of government sites had made the switch. These numbers are from one year after standardization, so the operators certainly had the time to make changes to their deployment.

Questions for the future

The centralization of the Internet, or for that matter the communication industry, is not new. Open technology tends to become closed over time, with ‘information empires’ building up and rising to power.

We don’t doubt the contributions that giant operators make to Internet technology, be it security protocols such as TLS 1.3, new transport protocols such as QUIC, or TCP congestion control algorithms such as BBR. They certainly have the technical means and the data to find optimal parameters and identify deployment issues before rollout begins; our data shows just how extensively they experimented with TLS 1.3. For the security community and the general public, this has been good: we got a well-tested, secure protocol from the start, and barely anyone noticed the transition.

Yet, looking back at history, we should also ask: how will this continue? Could we end up in a situation where the development of open protocols is no longer truly open, because the experimental data is available only to a few, and shifting corporate incentives and business plans lead giant corporations to optimize for their own use cases first? Could we end up with protocols that fortify the positions of a few economic giants but are not optimized for other use cases? Or will a handful of information empires battle it out, much like the Browser Wars twenty years ago, only by different means?

In his book ‘The Master Switch’, Tim Wu describes how similar scenarios have played out in the past. Company interests change, CEOs come and go, and shareholder value may eventually dictate corporate direction in surprising ways. Interestingly, Wu also points out that efforts to keep developments closed and to rely on monetization models built on near-monopolies often fail nonetheless. Innovation within such a company can become far too slow, until the company is eventually marginalized by disruptive events from the outside.

It doesn’t have to be like this, of course. TLS 1.3 continues to be developed in the IETF by a broad community of independent network engineers, academics, and corporations. Ultimately, we think much will depend on the incentives set by governmental and intergovernmental policies. If corporations are financially rewarded for investing in and developing open technology, and can perhaps even build new business models on it, their internal incentives may align well with the interests of the public.

Governments are waking up to this and funding new projects that aim to understand business cases and incentives and to find out how the public interest and corporate interests can be aligned. The Responsible Internet, for example, aims to add a transparency pillar to the Internet that goes far beyond what we have seen in previous efforts such as Certificate Transparency. The idea is to make operators accountable for their technical decisions and even to give Internet users a degree of choice. This is flanked by three dedicated efforts that aim to develop business cases, understand corporate and government incentives, and showcase the benefits of increased Internet transparency.

Read our paper to learn more about our project and ongoing efforts.

Ralph Holz is an Associate Professor at the University of Twente in the Netherlands.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.
