The Domain Name System (DNS) is the glue that holds the Internet together by providing the essential mapping from names to IP addresses. However, over time, the DNS has evolved into a complex and intricate protocol, spread across numerous RFCs. This has made it difficult to write efficient, high-throughput, multithreaded implementations that are bug-free and compliant with RFC specifications.

To assist, my colleagues and I at the University of California, Los Angeles, and Microsoft developed Ferret: A tool that automatically finds RFC compliance bugs in DNS nameserver implementations.

- Ferret uses the Small-scope Constraint-driven Automated Logical Execution (SCALE) approach to guide the search toward different logical behaviours.

- Using Ferret, we identified 30 unique bugs across all DNS implementations, including at least two bugs each in popular implementations like Bind, Knot, NSD, and PowerDNS. One of the bugs is a critical vulnerability in Bind (high-severity rated CVE-2021-25215) that attackers could easily exploit to crash Bind DNS resolvers and nameservers remotely.

- Use of SCALE, in this case, could be extended to finding RFC compliance issues in other protocols.

Existing approaches are not suitable for RFC compliance checking of DNS implementations

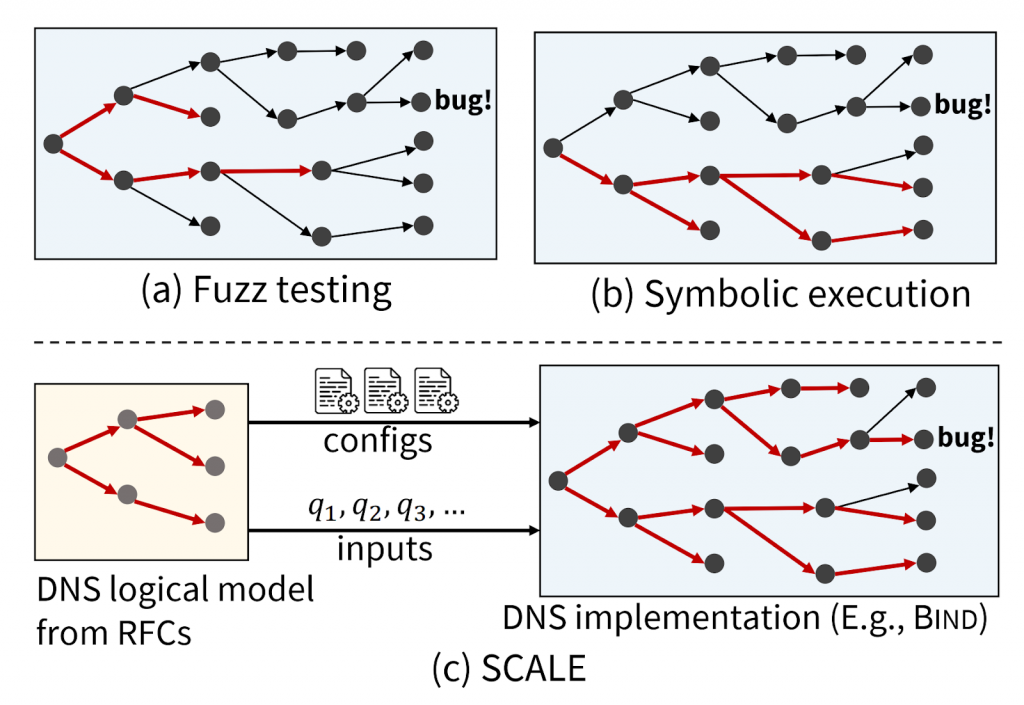

DNS test cases consist of both query and zone files, which are a collection of resource records that specify how the nameserver should handle queries. Zone files are highly structured objects with various syntactic and semantic requirements, and the query must be related to the zone file for the test even to reach the core query resolution logic. As such, existing standard automated test generation approaches are not suitable for RFC compliance checking of DNS implementations as shown in Figure 1 (a) and (b).

Fuzz testing (Figure 1a) is scalable but is often unable to navigate complex input requirements, which are necessary to generate behavioural tests for DNS. As a result, fuzzers for DNS only generate queries and hence are used only to find parsing errors. Symbolic execution (Figure 1b) can solve the issue of input conditions but suffers from path explosion and has difficulty with complex data structures and program logic. Therefore, it will only typically explore a small subset of possible program paths.

SCALE can solve this problem.

The SCALE approach jointly generates zone files and corresponding queries in a way that covers many distinct protocol behaviours, and is applicable to black-box protocol implementations. The key insight behind SCALE is that we can leverage the existence of RFCs to define a logical model of the behaviours of a network protocol and then use this model to guide test generation. With SCALE, we created an executable version of a protocol’s logical semantics and employed symbolic execution on that executable version to generate high-coverage tests that can be executed on any protocol implementation (see Figure 1c) — refer to our paper for more details on how we did this.

Ferret: A tool based on the SCALE approach for DNS nameservers

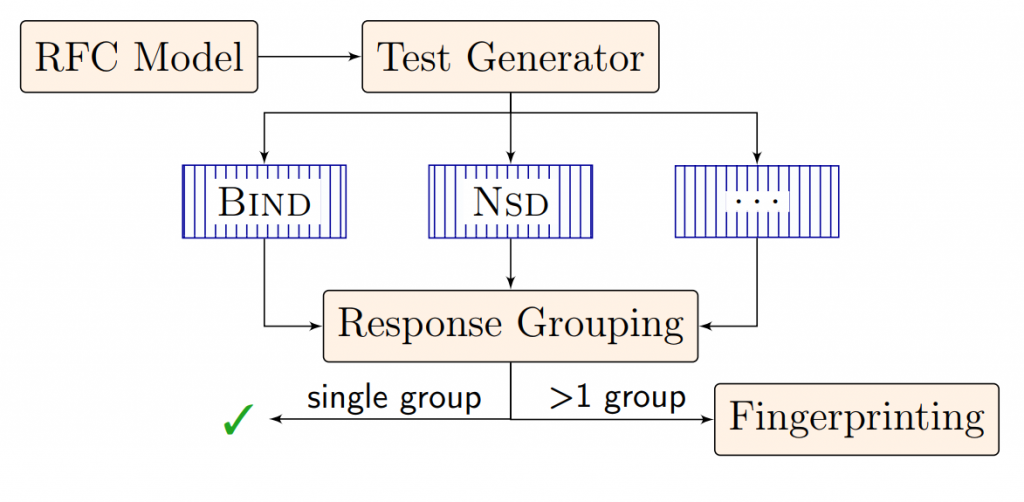

We employed the SCALE approach to build Ferret, which generates tests using a logical model of DNS resolution implemented in a modelling language called Zen that has built-in support for symbolic execution (Figure 2). Ferret performs differential testing by running these tests on multiple DNS nameserver implementations and comparing their results. Differential testing helps to identify implementation-dependent behaviours when RFCs are ambiguous or underspecified.

Ferret generated over 13.5K test cases, of which 62% resulted in some difference among implementations. Since the number of failed tests is high for inspection, we also developed a hybrid fingerprint for each test, which combines information from the test’s path in the Zen model with the results of differential testing, and then grouped tests by fingerprint. The failed tests roughly form 80 groups, from which we identified 30 unique bugs across all the implementations, including at least two bugs each in popular implementations like Bind, Knot, NSD, and PowerDNS.

One of the bugs we found was described by the Bind security team as an “easily-weaponized denial-of-service vector”, which they asked us to keep confidential until they had released a Common Vulnerabilities and Exposure (CVE-2021-25215). The attack affected all maintained Bind versions, which in turn affected Red Hat Enterprise Linux (RHEL), Slackware, Ubuntu, and Infoblox and showed the difficulty in identifying errors in nameservers due to subtle dependencies. Learn more here.

Table 1 shows the implementations tested, the number of bugs found, and how many were critical security vulnerabilities.

| Implementation | Language | Description | Bugs found | Crashes |

| Bind | C | De facto standard | 4 | 1 |

| PowerDNS | C++ | Popular in North Europe | 2 | – |

| NSD | C | Hosts several TLDs | 4 | – |

| Knot | C | Hosts several TLDs | 5 | – |

| CoreDNS | Go | Used in Kubernetes | 6 | 1 |

| YADIFA | C | Created by EURid (.eu) | 3 | – |

| TrustDNS | Rust | Security, safety-focused | 4 | 1 |

| MaraDNS | C | Lightweight server | 2 | – |

We believe our SCALE approach to RFC compliance testing and ‘ferreting’ out bugs could be useful more broadly beyond the DNS. For instance, there are many other complex and distributed protocols used at different network layers, such as routing protocols like BGP and OSPF, flow control protocols like PFC, new transport layer protocols such as QUIC, and many more. It would be interesting future work to apply the SCALE methodology beyond the DNS.

Siva Kesava is a Ph.D. candidate at the Department of Computer Science, UCLA.

Discuss on Hacker NewsThe views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.