The emergence of programmable data planes is having a profound impact on networking, with clear benefits to network operators and switch vendors. For example, network operators are benefitting from increased visibility via fine-grained network telemetry, while switch vendors are finding software development is faster and less expensive than hardware development.

However, the benefits to users are still relatively unexplored, in the sense that today’s programmable data planes offer the same forwarding abstractions that fixed-function devices have always provided, such as match on IP address, decrement TTL, and send to the next hop.

Applications don’t speak TCP/IP

While the Internet is based on a well-motivated design, classic protocols such as TCP/IP provide a lower level of abstraction than modern distributed applications expect, especially in networks managed by a single entity, such as data centres.

As a case in point, today it is common to deploy services in lightweight containers. Address-based routing for containerized services is difficult, because containers deployed on the same host may share an address, and because containers may move, causing their addresses to change.

Software solutions

To cope with these networking challenges, operators are deploying identifier-based routing, such as Identifier Locator Addressing (ILA). These schemes require that name resolution be performed as an intermediate step.

A second example is load balancing: to improve application performance and reduce server load, data centres rely on complex software systems to map incoming IP packets to one of a set of possible service end-points. Today, this service layer is largely provided by dedicated middleboxes. Examples include Google’s Maglev and Facebook’s Katran.

A third example occurs in big data processing systems, which typically rely on message-oriented middleware, such as TIBCO Rendezvous, Apache Kafka, or IBM’s MQ. This middleware allows for a greater decoupling of distributed components, which in turn helps with fault tolerance and elastic scaling of services.

The current approach provides the necessary functionality: the middleboxes and middleware abstract away the address-based communication fabric from the application.

However, the impedance mismatch between the abstraction that networks offer and the abstraction that applications need adds complexity to the network infrastructure. Using middleboxes to implement this higher level of network service limits performance, in terms of throughput and latency, as servers process traffic at gigabits per second, while ASICs can process traffic at terabits per second. Moreover, middleboxes increase operational costs and are a frequent source of network failures.

Given the existence of programmable devices, can’t we do better?

Packet subscriptions

We propose a new network abstraction called packet subscriptions. A packet subscription is a stateful predicate that, when evaluated on an input packet, determines a forwarding decision. Packet subscriptions generalize traditional forwarding rules; they are more expressive than basic equality or prefix matching and can be written on arbitrary, user-defined packet formats.

Packet subscriptions easily express a range of higher-level network services, including pub/sub, in-network caching, and identifier-based routing. In some respects, packet subscriptions share a similar motivation to prior work on content-centric networking.

However, in contrast to this prior work, we are not proposing a complete redesign of the Internet. Instead, we argue that higher-level network abstractions are already used extensively by distributed applications, and this functionality can be naturally provided by the network data plane. Moreover, packet subscriptions can be implemented efficiently in controlled, data centre deployments, in which the entire network is in a single administrative domain, and operators have the freedom to directly tailor the network to the needs of the applications. Packet subscriptions interoperate with other routing schemes, like IP, so they are also suitable for brownfield deployments.

What do packet subscriptions look like?

When end-hosts submit a packet subscription to the global controller, they are effectively saying ‘send me the packets that match this filter’. The following is an example of a filter:

ip.dst == 192.168.0.1

It indicates that packets with the IP destination address 192.168.0.1 should be forwarded to the end-host that submitted this filter. One can interpret this filter the traditional way: each host is assigned an IP address, and the switches forward packets toward their destinations.

However, in this traditional interpretation, the network is responsible for assigning IP addresses to end-hosts. Instead, with packet subscriptions, it is the application that assigns IP addresses. In other words, packet subscriptions empower applications to define the routing structure for the network.

Applications can use other attributes for routing, and in particular, they can express their interests by combining multiple conditions on one or more attributes. For example, suppose that a trading application is interested in Nasdaq ITCH messages about Google stock. The following filter matches ITCH messages where the stock field is the constant GOOGL and the price field is greater than 50:

stock == GOOGL ∧ price > 50

Packet subscriptions may also be stateful; that is, their behaviour may depend on previously processed data packets. To specify a stateful packet subscription, we can use operations on variables in the switch data plane, such as computing a moving average over the value contained in a header field:

stock == GOOGL ∧ avg(price) > 60

In addition to checking the stock field, this filter requires that the moving average of the price field exceeds the threshold value 60.
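To make the semantics of the two ITCH filters concrete, here is a minimal Python sketch of how they could be evaluated in software. It is an illustration only, not how Camus evaluates filters on a switch. The message layout (a dict with `stock` and `price` keys), the window size of the moving average, and the choice to update the average only on GOOGL messages are all assumptions made for this example.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class MovingAverage:
    """Average over the last `window` values (the window size is an assumption)."""
    window: int = 3
    values: deque = field(default_factory=deque)

    def update(self, x: float) -> float:
        # Add the new sample, evict the oldest one if the window is full.
        self.values.append(x)
        if len(self.values) > self.window:
            self.values.popleft()
        return sum(self.values) / len(self.values)


def stateless_filter(msg: dict) -> bool:
    # stock == GOOGL ∧ price > 50
    return msg["stock"] == "GOOGL" and msg["price"] > 50


def make_stateful_filter(threshold: float = 60, window: int = 3):
    """Build a filter for: stock == GOOGL ∧ avg(price) > threshold."""
    avg = MovingAverage(window)  # state kept across packets

    def matches(msg: dict) -> bool:
        if msg["stock"] != "GOOGL":
            return False
        # Assumption: only GOOGL messages contribute to the average.
        return avg.update(msg["price"]) > threshold

    return matches
```

Each call to `make_stateful_filter` creates its own state, so two subscribers with the same filter text would not share a moving average in this sketch.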

How are packet subscriptions implemented?

Supporting packet subscriptions, as a network-level service, requires addressing a series of challenges. At the network-wide level, the challenge is routing. Analogous to IP, routing on packet subscriptions amounts to placing and possibly combining rules throughout the network so as to induce the right flows of packets from publishers to subscribers.

At the switch-local level, the main problem is forwarding, meaning efficiently matching packets against a set of local rules.

Also at the switch-local level, there are technical challenges in efficiently parsing structured packets and allocating switch memory. To address these challenges, we have designed a new network architecture, named Camus.

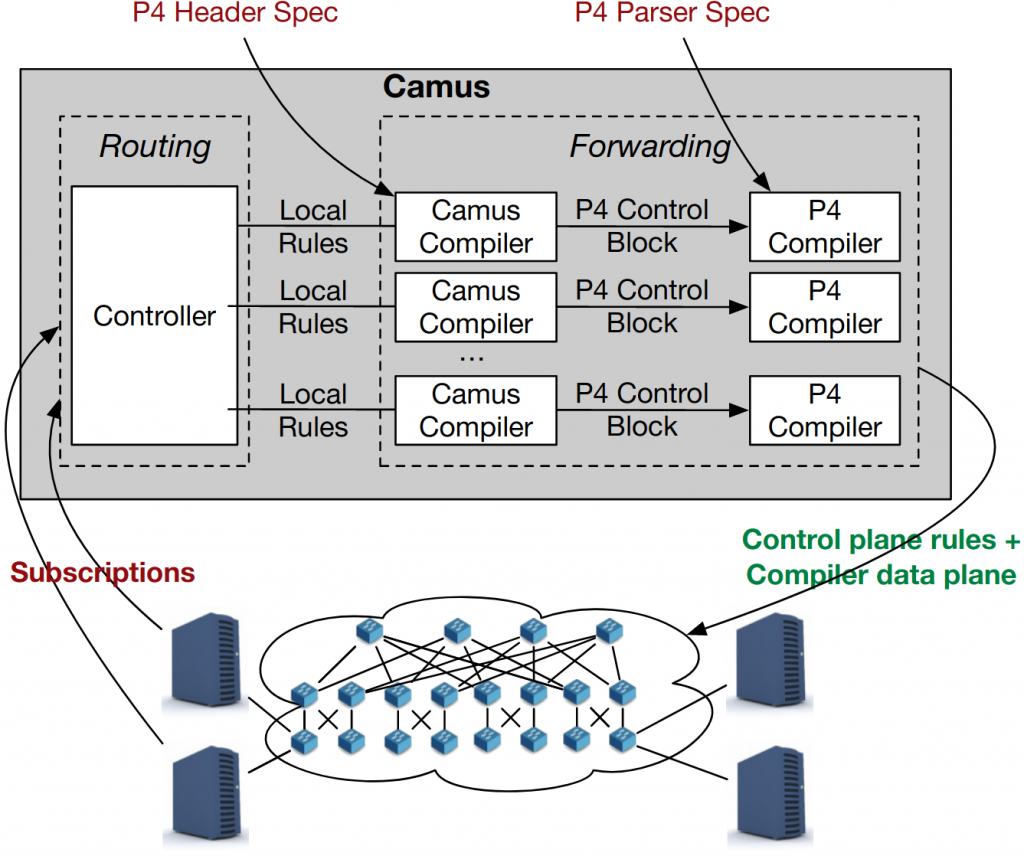

The Camus compiler generates both the data plane configuration and the control plane rules, providing a uniform interface for programming the network:

Applications provide Camus with filters written in a packet subscription language. Camus provides a controller component that determines a global routing policy based on the subscriptions, and a compiler component that generates the control and data plane configurations for the local forwarding decisions that collectively realize the routing strategy.

Efficient forwarding pipeline

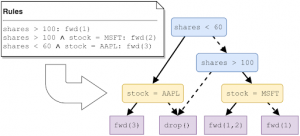

With respect to forwarding, naively translating packet subscriptions into FIB entries would require significant amounts of TCAM and SRAM memory, which is a scarce resource on network hardware. Instead, Camus uses an algorithm based on Binary Decision Diagrams (BDDs). The compiler first converts rules into a BDD representation:

Then, the compiler translates the BDD into a pipeline of P4 tables:

The Camus compiler translates logical predicates into P4 tables that can support O(100k) filter expressions within the limited resources of a programmable switch ASIC. Moreover, Camus provides functionality for parsing application-specific message formats, which requires reading deeply into the packet, and processing messages that have been batched together into a single network packet.
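The idea of compiling many overlapping rules into one shared decision diagram can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the Camus compiler's algorithm: the predicates, rules, and port numbers are hypothetical, rules are restricted to conjunctions of positive predicates, and the predicate order is fixed by hand. Terminals hold the set of output ports, and identical subgraphs are shared.

```python
from typing import Callable, NamedTuple


class Pred(NamedTuple):
    name: str
    test: Callable[[dict], bool]


# Hypothetical atomic predicates, in the fixed order used by the diagram.
PREDS = [
    Pred("stock == GOOGL", lambda m: m["stock"] == "GOOGL"),
    Pred("price > 50", lambda m: m["price"] > 50),
    Pred("price > 100", lambda m: m["price"] > 100),
]

# Hypothetical rules: (indices of predicates that must all hold, output port).
RULES = [
    (frozenset({0, 1}), 1),  # stock == GOOGL ∧ price > 50  -> port 1
    (frozenset({0, 2}), 2),  # stock == GOOGL ∧ price > 100 -> port 2
    (frozenset({1}), 3),     # price > 50                   -> port 3
]


def compile_bdd(rules, num_preds):
    """Return the root of a reduced, ordered decision diagram.

    Internal nodes are tuples (level, low, high); terminals are frozensets
    of ports for the rules that matched along the path.
    """
    unique = {}  # (level, low, high) -> node, so equal subgraphs are shared
    memo = {}    # (level, live rules) -> node, avoids rebuilding subproblems

    def build(level, live):
        key = (level, live)
        if key in memo:
            return memo[key]
        if level == num_preds:
            node = frozenset(port for _, port in live)
        else:
            # Predicate false: rules that require it can no longer match.
            low = build(level + 1, tuple(r for r in live if level not in r[0]))
            # Predicate true: every live rule stays live.
            high = build(level + 1, live)
            if low == high:
                node = low  # the test is redundant, skip it
            else:
                node = unique.setdefault((level, low, high), (level, low, high))
        memo[key] = node
        return node

    return build(0, tuple(rules))


def evaluate(node, msg, preds):
    """Walk the diagram for one message and return the set of output ports."""
    while not isinstance(node, frozenset):
        level, low, high = node
        node = high if preds[level].test(msg) else low
    return node


ROOT = compile_bdd(RULES, len(PREDS))
```

Each predicate is tested at most once per message, however many rules mention it. One plausible way to map such a diagram onto hardware is to give each level its own match-action table keyed on the current node and the tested field, though the actual table layout is a design decision of the compiler that this sketch does not attempt to reproduce.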

Packet subscriptions are useful to many applications

There is a wide range of applications that can benefit from the expressive forwarding of packet subscriptions. We have implemented a few of them:

- Nasdaq ITCH market data filtering

- Detecting events from streams of In-band network telemetry (INT) data

- Load-balancing web requests to backend servers with Facebook’s ILA

- Video streaming with Cisco’s hICN

- API-compatible replacement for the Apache Kafka message queue

Packet subscriptions reduce latency

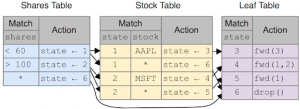

We have evaluated the forwarding efficiency of packet subscriptions using the Camus compiler. We set up an experiment in which we filtered messages from a real trace of ITCH messages from the Nasdaq exchange. As a baseline, the subscriber end-host performed all the filtering. Then, we had the switch perform the filtering. This shows the distribution of the end-to-end latency from the feed publisher to the subscriber to receive the messages of interest:

We can see that the baseline has a large tail latency: 30% of the packets arrive with a latency from 10 µs all the way to 300 µs.

When the switch filters the messages, the tail is a lot smaller. The 99th percentile is below 50 µs. This is because the buffers don’t build up at the subscriber. The switch can perform the filtering at line rate, and sends exactly the packets that the subscriber wants. Thus, the subscriber does not waste time filtering out the superfluous messages.

Packet subscriptions handle high throughput

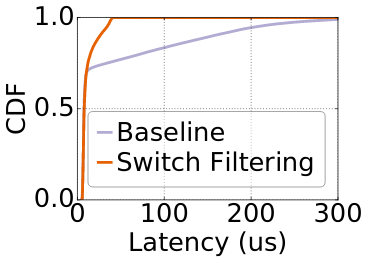

As the number of subscriptions increases, software-based systems can have trouble keeping up with high throughputs. To see this, we performed an experiment for detecting events in a stream of INT data.

We compared Camus to a C program running in user space and a C program using DPDK, both running on a commodity server. To generate a high-bandwidth packet stream, as one would expect when collecting data from a realistic data centre, we used a switch to generate a stream of INT packets on a 100G link. We used filters that match less than 1% of the packets. The filters check that the INT packet passed through a switch with a latency above a threshold, for example:

int.switch_id = 2 and int.hop_latency > 100

This is the maximum throughput we saw for an increasing number of filters:

DPDK has better performance than the plain C program, but is fundamentally limited by the CPU clock speed: at 1.6GHz, spending about 100 instructions per packet, DPDK can process 16 Mpps.

Camus, on the other hand, processes the whole 100G stream at line rate. Moreover, we found that the software-based filtering does not scale with the number of filters: the latency for DPDK drastically increases after 10K filters. Camus installs the filters in hardware memory, so it has low latency, regardless of the number of filters.
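The DPDK bound quoted above is simple arithmetic, and the short Python sketch below reproduces it. It assumes roughly one instruction retired per clock cycle on a single core, which is what the 16 Mpps figure implies. For comparison, it also computes the packet rate of a 100G link for a hypothetical frame size; the 64-byte frame and the 20 bytes of per-frame Ethernet overhead (preamble and inter-frame gap) are assumptions for illustration, since the size of the INT packets in the experiment is not stated here.

```python
def cpu_bound_pps(clock_hz: float, instructions_per_packet: float,
                  instructions_per_cycle: float = 1.0) -> float:
    """Upper bound on packets per second for one core.

    instructions_per_cycle = 1.0 is an assumption, not a measured value.
    """
    return clock_hz * instructions_per_cycle / instructions_per_packet


def line_rate_pps(link_bps: float, frame_bytes: int,
                  overhead_bytes: int = 20) -> float:
    """Packets per second needed to saturate an Ethernet link.

    overhead_bytes covers the preamble and inter-frame gap on the wire.
    """
    return link_bps / ((frame_bytes + overhead_bytes) * 8)


# Figures from the text: 1.6 GHz clock, about 100 instructions per packet.
dpdk_mpps = cpu_bound_pps(1.6e9, 100) / 1e6

# Hypothetical comparison point: minimum-size 64-byte frames on a 100G link.
link_mpps = line_rate_pps(100e9, 64) / 1e6
```

With these inputs the CPU bound comes out at 16 Mpps, while saturating the link with minimum-size frames would take roughly 149 Mpps. Larger packets lower the required rate, so the size of the gap depends on the traffic mix.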

In conclusion, packet subscriptions provide the abstraction that applications actually use. In doing so, they reduce the latency experienced by applications while handling higher throughput than software-based middleware. They achieve this while using network resources efficiently, in terms of both switch memory and traffic.

We invite you to try out packet subscriptions: we have open-sourced our compiler, and have a demo that you can run right from your computer. We also hope that packet subscriptions will foster the emergence of new types of applications that require expressive, low-latency messaging not previously available.

Theo Jepson is currently a Postdoc at Stanford University. Recently, he’s been working on exposing application-level information to the network, in order to leverage programmable data planes to dramatically increase application performance.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.