Network Address Translation (NAT) and Port Address Translation (PAT), in their many forms, are so common these days that almost all of our Voice over Internet Protocol (VoIP) customers use them.

Session Initiation Protocol (SIP)-based VoIP was designed long before NAT was commonplace, so it doesn’t work particularly well when NAT is involved. As such, VoIP Service Providers implement several mechanisms to work around the complexities of NAT whilst trying not to compromise security.

RTP and NAT

Real-time Transport Protocol (RTP) is just a continuous stream of User Datagram Protocol (UDP) packets from one VoIP device to another. UDP packets have a source IP and port as well as a destination IP and port. These are negotiated between the VoIP devices in SIP and Session Description Protocol (SDP).

When a VoIP device is behind a NAT, the IP and port that it puts in SDP are usually wrong because the NAT router rewrites them when the RTP packets leave the network. As such, a common mechanism used by VoIP Service Providers is to wait for some RTP packets to be received from the remote VoIP device and use their source IP and port in preference to those sent in SDP.
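As a rough illustration of that mechanism, here is a minimal Python sketch. The `RtpPeer` class and its method names are hypothetical and are not taken from any real media server; the sketch only shows the idea of preferring the observed source address over the one advertised in SDP.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Address = Tuple[str, int]  # (IP, UDP port)


@dataclass
class RtpPeer:
    """Hypothetical record of where to send RTP for one call leg."""

    sdp_address: Address                       # what the device claimed in SDP
    learned_address: Optional[Address] = None  # what we actually saw on the wire

    def on_packet(self, source: Address) -> None:
        # Remember the post-NAT source of the RTP we received.
        self.learned_address = source

    def send_to(self) -> Address:
        # Prefer the observed address; fall back to SDP until RTP arrives.
        return self.learned_address or self.sdp_address


peer = RtpPeer(sdp_address=("192.168.1.10", 4000))
before = peer.send_to()               # private address from SDP
peer.on_packet(("203.0.113.7", 31002))
after = peer.send_to()                # public address chosen by the NAT
```

Note that this naive version trusts whoever sends RTP to the port, which is exactly the weakness discussed in the next section.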

The RTP+NAT security conundrum

The more observant/mischievous of you might be thinking that the aforementioned mechanism is open to abuse. And you’d be right.

If an attacker were to, for example, flood every UDP port on a VoIP Provider’s media server with RTP then it would theoretically be possible to disrupt, or even redirect, the audio from that server.

A few mechanisms exist to help mitigate this but none of them is a silver bullet. You might think, for example, that you should only accept RTP from the same IP as the SIP came from. However, this may cause issues with more complex networks and, when Carrier-Grade NAT is in use, you cannot always guarantee that the IP will remain consistent across different flows.

A fairly common mechanism to reduce the attack surface is to pair monitoring, rate limiting and Intrusion Prevention Systems (IPS) with some sort of RTP session locking. For example, when you receive three valid RTP packets from a given IP/port you’ll stop accepting RTP packets from another IP/port until the original stream has stopped for a defined period of time.
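The locking rule can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `RtpSessionLock` class is hypothetical, packet validation is left out, and accepting RTP from any source before the lock is taken is my assumption rather than a description of a particular media server.

```python
from typing import Dict, Optional, Tuple

Address = Tuple[str, int]  # (IP, UDP port)


class RtpSessionLock:
    """Hypothetical lock: N packets from one source, then ignore all others."""

    def __init__(self, lock_after: int = 3, timeout: float = 10.0) -> None:
        self.lock_after = lock_after  # packets needed before locking
        self.timeout = timeout        # seconds of silence before unlocking
        self.locked: Optional[Address] = None
        self.last_seen = 0.0
        self.counts: Dict[Address, int] = {}

    def accept(self, source: Address, now: float) -> bool:
        """Return True if an RTP packet from `source` at time `now` is accepted."""
        # Release the lock once the locked stream has been silent long enough.
        if self.locked is not None and now - self.last_seen >= self.timeout:
            self.locked = None
            self.counts.clear()

        if self.locked is not None:
            if source != self.locked:
                return False          # someone else: drop the packet
            self.last_seen = now
            return True

        # Not locked yet: accept the packet and count it towards a lock.
        self.counts[source] = self.counts.get(source, 0) + 1
        if self.counts[source] >= self.lock_after:
            self.locked = source
            self.last_seen = now
        return True


lock = RtpSessionLock()
old_port = ("203.0.113.7", 1024)
new_port = ("203.0.113.7", 1025)
first_three = [lock.accept(old_port, t) for t in (0.00, 0.02, 0.04)]
during_lock = lock.accept(new_port, 5.0)   # rejected, lock still held
after_lock = lock.accept(new_port, 11.0)   # accepted, old stream timed out
```

With these defaults, a source that changes port just after the third packet is ignored until the old stream has been silent for the full timeout.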

The headache

Following the implementation of some new media servers that use the above-mentioned RTP session locking mechanism (three packets + a timeout of 10 seconds), a small handful of our customers reported that a few of their calls didn’t have any audio for the first 10 seconds. This was confusing because monitoring showed that RTP was flowing in both directions.

Upon further inspection, it became clear that the RTP source port on the customer’s side was changing after the first three packets were sent. Port changes mid-call are rare but we do see them from time to time as customers’ routers bug out or flip between active/passive devices in a High Availability (HA) pair. However, the way that this issue presented itself across multiple customers didn’t stack up.

Debugging

We managed to partially replicate the issue by running a softphone behind a Juniper SRX firewall. From this, we could see that the UDP source port changed a few times at the start of the call, but it stabilized after a few packets and there was none of the 10-second audio loss reported by the customers.

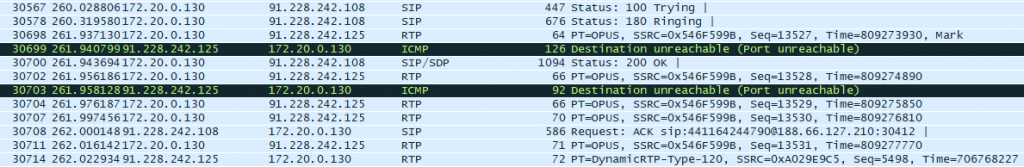

Taking a Wireshark trace (Figure 1) locally on the PC running the softphone showed that the softphone itself was not changing the source port, so it must be the NAT router.

After seeing the ICMP Port Unreachable messages, things started to become a bit clearer. When some NAT devices receive a Port Unreachable, they will tear down the state for that session and the next RTP packet will create a new state (usually from a new source port).

But why was the media server sending ICMP Port Unreachable messages?

As it turned out, it was because this particular softphone started sending RTP before the call was fully established (that is, before it sent the 200 OK). The media server was not yet ready to receive these packets, so it hadn’t opened that port, and UDP packets arriving at a closed port are answered with an ICMP Port Unreachable.

Root cause

The way ports are assigned by NAT/PAT routers differs by vendor. Some vendors will assign random ports whereas others will always assign the lowest available port.

In the case of the customers who had the reported issue, their routers assigned the lowest available port.

On the whole, this was OK because every time the session got closed by the ICMP message, it was opened again on the same port. But if, after the third RTP packet, the port that was previously being used happened to be taken by another session on the network… the port would change after the media servers had locked the session. As such, the 10-second timer kicked in and there was no audio for that period of time.
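The race is easier to see with a toy model. The following Python sketch is purely illustrative: `LowestPortNat`, the starting port of 1024 and the flow names are all assumptions, and real NAT implementations are far more involved.

```python
from typing import Dict, List


class LowestPortNat:
    """Toy PAT that always maps a new flow to the lowest free external port."""

    def __init__(self, first_port: int = 1024) -> None:
        self.first_port = first_port
        self.in_use: Dict[int, str] = {}  # external port -> flow name

    def open(self, flow: str) -> int:
        # Walk upwards from the first port until a free one is found.
        port = self.first_port
        while port in self.in_use:
            port += 1
        self.in_use[port] = flow
        return port

    def close(self, flow: str) -> None:
        # Tear down the flow's state, e.g. on an ICMP Port Unreachable.
        self.in_use = {p: f for p, f in self.in_use.items() if f != flow}


def simulate() -> List[int]:
    """Return the external source port used by each of four RTP packets."""
    nat = LowestPortNat()
    ports = []
    for _ in range(3):
        ports.append(nat.open("rtp"))  # packet leaves from lowest free port
        nat.close("rtp")               # ICMP reply tears the state down
    nat.open("other")                  # another session grabs the freed port
    ports.append(nat.open("rtp"))      # fourth packet gets a different port
    return ports


ports = simulate()  # [1024, 1024, 1024, 1025]
```

The first three packets all leave from the same port, so the media server locks onto it, and the fourth arrives from a port the lock rejects.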

The solution

The best solution was to just disable the ICMP Port Unreachable messages. If they weren’t sent and the early RTP was silently ignored then the problem wouldn’t exist.

The 10 seconds of audio loss also seemed like quite a long time on the rare occasion that ports legitimately change mid-call, since such a long drop in audio is most likely to cause one party to hang up.

The timeout was therefore also lowered to a more acceptable level. Although lowering the timeout does reduce security somewhat, a phone legitimately not sending RTP for 10 seconds whilst an attacker is sending RTP to that same port is reasonably unlikely.

Of course, the increasingly widespread adoption of IPv6 removes the complexities presented by NAT, though a lot of the problems we currently attribute to NAT, such as pinhole timeouts, still exist within stateful firewalls.

VoIP is hard 🙁

As a SaaS company, if you employ a strategy of continuous automated testing then you can largely eradicate customer-affecting bugs in production.

VoIP isn’t quite so simple. You have to contend with a huge variety of phone and network vendors and, sometimes, they just don’t play nicely.

Adapted from the original post, which appeared on Phil’s Blog.

Phil Lavin is an Engineer, currently working for CloudCall.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.