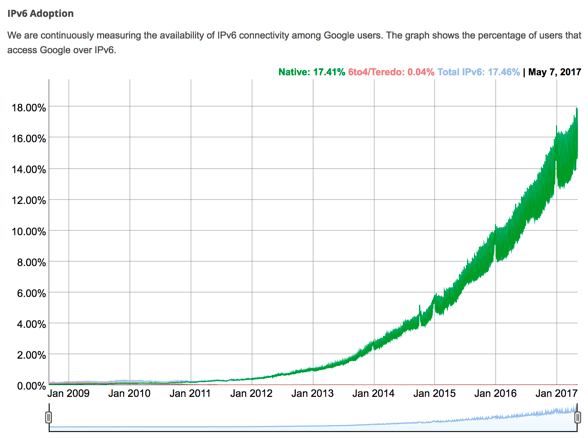

It’s 2017, but I still have to tell people what IPv6 is, how it works, and the benefits of using it instead of IPv4.

Although it has been around for years, IPv6 adoption has only recently started to gain pace.

With the Internet of Things becoming more of a reality, Carrier Grade NATs will not be able to accommodate the growing number of devices connecting to the Internet. But there’s no reason to wait for this to happen, given that performance can be 10% to 15% better with IPv6.

As IPv6 matures and becomes the dominant protocol, it’s worth considering how we can manipulate it to take advantage of this huge address space. There are possibilities for exploiting this capability for other purposes, including storing useful information such as state and metadata.

Some ISPs embed metadata inside IPv6 addresses to identify services, clients’ VLANs, VXLANs, departments, locations, and even port numbers. This information is also useful for applying firewall rules and making routing decisions and, if you automate your network, it is easier to separate and integrate everything when the metadata is available in advance.
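
To make the idea concrete, here is a minimal sketch of packing metadata into the low 64 bits of an address and reading it back. The field layout (16 bits of VLAN, 16 bits of service ID, 32 bits of host number), the method names and the documentation prefix 2001:db8:0:1::/64 are all assumptions made for illustration, not a scheme any particular ISP is known to use.

```ruby
require 'ipaddr'

# Hypothetical layout of the low 64 bits: | vlan (16) | service (16) | host (32) |
# The prefix is from the documentation range and is used for illustration only.
PREFIX = IPAddr.new('2001:db8:0:1::/64')

# Pack the metadata fields into the interface identifier of the prefix.
def encode_address(vlan:, service:, host:)
  iid = (vlan << 48) | (service << 32) | host
  IPAddr.new(PREFIX.to_i | iid, Socket::AF_INET6)
end

# Recover the metadata fields from the low 64 bits of an address.
def decode_address(addr)
  iid = IPAddr.new(addr.to_s).to_i & 0xffff_ffff_ffff_ffff
  { vlan: (iid >> 48) & 0xffff, service: (iid >> 32) & 0xffff, host: iid & 0xffff_ffff }
end

addr = encode_address(vlan: 120, service: 11211, host: 1)
puts addr
p decode_address(addr)
```

A firewall or automation script could then match on the decoded fields instead of looking each address up in a separate inventory.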

I remember that when we started to implement IPv6 at Vinted (it was my second IPv6 deployment), we brainstormed the best way to divide this address space so that it would be readable, practical and scalable. Our first iterations were, as usual, over-engineered (too much metadata was packed into the address itself: service, data centre, location). Eventually, though, we were able to simplify this, including by storing metadata in the IPv6 address via sharding.

Sharding helps in cases when you want to spread your data across more than one node. And to keep this information consistent for read/write operations, it’s crucial to keep state/metadata somewhere for the client to know where to send data. You cannot send read/write requests to random nodes because data is not replicated. This makes IPv6 addresses a suitable place for such metadata.

Below is an example of how to use sharding for three memcached instances. We have three nodes whose addresses use the last four octets (the final two 16-bit groups) to encode the shard range as start:end.

2a02:4780:1:1::0:3e7/64

2a02:4780:1:1::3e8:7cf/64

2a02:4780:1:1::7d0:bb7/64

The first node handles shards 0x0 to 0x3e7 (0 to 999), the second 0x3e8 to 0x7cf (1000 to 1999), and the third 0x7d0 to 0xbb7 (2000 to 2999).
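
The start:end encoding can be sketched with Ruby's standard ipaddr library. The helper names shard_addresses and shard_range are hypothetical, and the sketch assumes that every shard number fits into 16 bits. Addresses are passed without a prefix length, because IPAddr masks the host bits away when it is given one such as /64.

```ruby
require 'ipaddr'

# Build one address per node; the low 32 bits hold start:end of its shard range.
# Assumes every shard number fits into 16 bits (0..65535).
def shard_addresses(prefix, node_count, slots_per_node)
  base = IPAddr.new(prefix).to_i
  (0...node_count).map do |i|
    first = i * slots_per_node
    last = first + slots_per_node - 1
    IPAddr.new(base | (first << 16) | last, Socket::AF_INET6)
  end
end

# Read [start, end] back from the last two 16-bit groups of an address.
def shard_range(address)
  low32 = IPAddr.new(address.to_s).to_i & 0xffff_ffff
  [low32 >> 16, low32 & 0xffff]
end

addresses = shard_addresses('2a02:4780:1:1::', 3, 1000)
addresses.each do |address|
  first, last = shard_range(address)
  puts "#{address} handles shards #{first}..#{last}"
end
```

Working on the integer value rather than on the text form keeps the parsing independent of how the address happens to be compressed when printed.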

Below is a minimal PoC to show how it works (I picked the CRC16 algorithm to hash keys; the digest/crc16 library comes from the digest-crc gem):

    require 'digest/crc16' # provided by the digest-crc gem

    class Sharding
      def initialize(nodes, key)
        @nodes = nodes
        @key = key
        @max_slots = max_slots
      end

      # Highest shard number advertised by any node (last group of the address).
      def max_slots
        @nodes.map { |node| node.split(':').last.hex }.max
      end

      # Returns the node whose start:end range contains the shard of the key.
      def get_shard
        # The modulo yields 0..max_slots-1, so the very last slot is never picked.
        shard = Digest::CRC16.hexdigest(@key).hex % @max_slots

        @nodes.find do |node|
          groups = node.split(':')
          shard_start = groups[-2].hex
          shard_end = groups[-1].hex
          shard.between?(shard_start, shard_end)
        end
      end
    end

    nodes = [
      '2a02:4780:1:1::0:3e7',
      '2a02:4780:1:1::3e8:7cf',
      '2a02:4780:1:1::7d0:bb7'
    ]

    p Sharding.new(nodes, 'example_key0').get_shard
    p Sharding.new(nodes, 'example_key1').get_shard

This produces the following output:

    % ruby sharding.rb
    "2a02:4780:1:1::7d0:bb7"
    "2a02:4780:1:1::0:3e7"

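To get a feel for how keys spread across the three nodes, the sketch below counts the assignments for 10,000 generated keys. It is an illustration only: Zlib.crc32 from the standard library stands in for CRC16 so that no gem is needed, which means the node chosen for a given key will differ from the PoC. The ranges are hard-coded from the node addresses, and node_for is a hypothetical helper.

```ruby
require 'zlib'

# Shard ranges taken from the start:end groups of the three node addresses.
RANGES = {
  '2a02:4780:1:1::0:3e7'   => 0..999,
  '2a02:4780:1:1::3e8:7cf' => 1000..1999,
  '2a02:4780:1:1::7d0:bb7' => 2000..2999
}.freeze
SLOTS = 3000 # slots 0..2999

# Zlib.crc32 is a stand-in for CRC16 here so that the sketch needs no gems.
def node_for(key)
  slot = Zlib.crc32(key) % SLOTS
  RANGES.find { |_node, range| range.cover?(slot) }.first
end

counts = Hash.new(0)
10_000.times { |i| counts[node_for("example_key#{i}")] += 1 }
counts.each { |node, count| puts "#{node}: #{count} keys" }
```

The same key always maps to the same node, which is the property a client relies on when data is not replicated.
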
Keep in mind that this method won’t work if you operate SLAAC inside your network, as it relies on static IP addresses or DHCPv6. The best way around this would be to announce a /128 from the node (this task could be automated), unless you operate on an L2-only network. As I mentioned above, we need only the last four bytes (32 bits) of an IP address, hence a /96 is enough. According to the IPv6 addressing architecture, a /64 is given to a host (it doesn’t matter if it’s a point-to-point link or an end customer), thus everyone is able to implement this approach.
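
As a quick sanity check, the metadata occupies the last four bytes, which is 32 of the 128 address bits, so the nodes should be identical in their first 96 bits. The sketch below verifies that with the standard ipaddr library and prints the /128 host routes each node would announce. How those routes are actually announced (for example, by a routing daemon on the node) is outside the scope of this sketch.

```ruby
require 'ipaddr'

nodes = %w[2a02:4780:1:1::0:3e7 2a02:4780:1:1::3e8:7cf 2a02:4780:1:1::7d0:bb7]

# 128 bits in total minus 32 bits of metadata leaves a 96-bit network part.
networks = nodes.map { |node| IPAddr.new(node).mask(96) }

# True when all nodes differ only in the last four bytes.
same_network = networks.map(&:to_i).uniq.size == 1
puts "all nodes share one /96: #{same_network}"

# Host routes that each node could announce.
nodes.each { |node| puts "#{node}/128" }
```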

One last point — in practice, there are two ways to implement things: popular or correct. Pick one responsibly.

Donatas Abraitis is a systems engineer at Hostinger.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC.

There can be privacy and security implications of embedded metadata in IPv6 IIDs that are worth considering.

https://tools.ietf.org/html/rfc7721

It depends, as always, on how you use it. It doesn’t look much different from the similar approach of storing metadata in DNS TXT records. I think with DNS you expose this information explicitly, while with IPv6 it looks like a usual IP address.