The DNS is one of the core services of the Internet. Every web page visit requires multiple DNS queries and responses. When it breaks, the DNS ends up on front pages of prominent news sites.

My colleagues and I at SIDN Labs, TU Delft, IE Domain Registry, and USC/ISI recently came across a DNS vulnerability that can be exploited to create traffic surges on authoritative DNS servers (the ones that know the contents of entire DNS zones such as .org and .com). We first noticed it while comparing traffic to the authoritative servers of the Netherlands’ .nl zone and New Zealand’s .nz zone for a study on Internet centralization.

Under careful examination, we recognized that a known problem was much more serious than anticipated. The vulnerability, which we named tsuNAME, is caused by loops in DNS zones — known as cyclic dependencies — and by bogus resolvers/clients/forwarders. We scrutinize tsuNAME in this research paper.

Importantly, current RFCs do not fully cover the problem. They prevent resolvers from looping, but don’t prevent clients and forwarders from starting to loop, which will then cause the resolver to flood the authoritative servers.

In response to this, we’ve written an Internet draft for the IETF DNS Working Group detailing how to fix it and developed CycleHunter, a tool that can prevent such attacks. Further to this, we’ve carried out responsible disclosure, working with Google and Cisco engineers who mitigated the issue on their public DNS servers.

Below is a brief summary of tsuNAME and our research so far.

What causes the tsuNAME vulnerability?

The vulnerability arises when two DNS records are misconfigured so that they point at each other in a loop, such as:

    #.com zone
    example.com NS cat.example.nl

    #.nl zone
    example.nl NS dog.example.com

In this example, a DNS resolver that attempts to resolve example.com is referred to the .nl authoritative servers so that cat.example.nl can be resolved. There, the resolver learns that the authoritative server for example.nl is dog.example.com, which sends it back to the .com zone. This creates a loop, so no domain under example.com or example.nl can be resolved.
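The loop can be illustrated with a toy resolver. This is only a sketch under simplifying assumptions: the `DELEGATIONS` table, the `parent_zone` rule (drop the first label), and the `max_steps` budget of 10 are hypothetical and stand in for real delegation lookups and for the kind of per-request work limit the RFCs discussed later call for.

```python
from typing import Dict, Tuple

# Toy delegation data mirroring the example above: zone -> name server host.
DELEGATIONS: Dict[str, str] = {
    "example.com": "cat.example.nl",
    "example.nl": "dog.example.com",
}


def parent_zone(hostname: str) -> str:
    """Toy rule: the zone of a host is the name with its first label removed."""
    return hostname.split(".", 1)[1]


def resolve(zone: str, delegations: Dict[str, str], max_steps: int = 10) -> Tuple[str, int]:
    """Follow NS delegations until they leave the table or the work budget runs out."""
    steps = 0
    current = zone
    while steps < max_steps:
        ns_host = delegations.get(current)
        if ns_host is None:
            # The name server lives in a zone we treat as resolvable.
            return ("RESOLVED", steps)
        steps += 1
        # To reach the name server, its own zone must be resolved first.
        current = parent_zone(ns_host)
    # Budget exhausted: the resolver gives up and reports a failure.
    return ("SERVFAIL", steps)


status, steps_used = resolve("example.com", DELEGATIONS)
```

With the cyclic records, the walk bounces between example.com and example.nl until the budget is spent and the resolver answers SERVFAIL. Each step corresponds to at least one query sent to an authoritative server.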

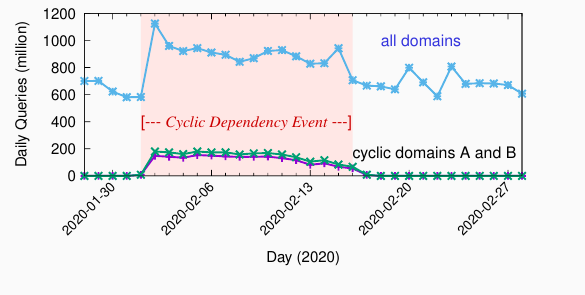

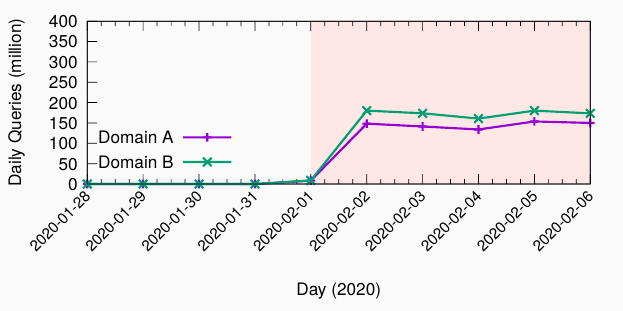

Why is this even a problem? When a user queries this name, their resolvers will follow this loop, amplifying one query into several. Some parts of the DNS resolving infrastructure (clients, resolvers, forwarders, and stub resolvers) cannot cope with it. The result may look like Figure 1. This example shows two domains under the .nz zone that previously had barely any traffic, each starting to receive more than 100 million queries a day.

In this example, .nz operators fixed the loop in their zones and the problem was mitigated. In the meantime, total .nz traffic increased by 50% (Figure 2) due to just two bogus domain names.

Now, what would happen if an attacker holds many domain names and misconfigures them with such loops? Or if an attacker makes many requests? In these cases, a few queries can be amplified, perhaps overwhelming the authoritative servers. This risks a new amplification threat.

Why does this traffic surge occur?

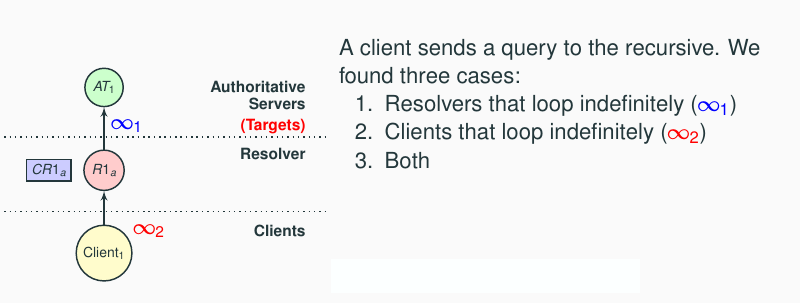

We found three root causes behind tsuNAME surges:

- Old resolvers (such as MS Windows 2008 DNS server) that loop indefinitely

- Clients/forwarders that loop indefinitely because they keep retrying after receiving SERVFAIL responses from resolvers (Figure 3)

- Both of the above

This may all seem rather obvious, but it has not been considered at the IETF level according to the following relevant RFCs:

- RFC1034 says resolvers should bound the amount of work ‘to avoid infinite loops’.

- RFC1035 (§7.2) says that resolvers should have ‘a per-request counter to limit work on a single request’.

- RFC1536 says loops can occur but offers no new solution.

As you can see, current RFCs only prevent resolvers from looping. But what happens if the loop occurs outside resolvers?

Imagine a client looping, sending a new query to the resolver every second after receiving SERVFAIL responses from the resolver (Figure 3). Under the current RFCs, every new client query triggers a new set of queries from the resolver to the authoritative servers, even if each set is bounded by the resolver’s per-request limit.
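A back-of-the-envelope calculation shows how quickly this adds up. The numbers here are illustrative assumptions, not measurements from our study: one retry per second per client, and a hypothetical bound of 10 upstream queries per client request.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def upstream_queries_per_day(clients: int,
                             retries_per_second: float,
                             queries_per_request: int) -> int:
    """Queries reaching authoritative servers per day when nothing is cached."""
    return int(clients * retries_per_second * SECONDS_PER_DAY * queries_per_request)


# One looping client, one retry per second, 10 upstream queries per retry.
one_client = upstream_queries_per_day(1, 1.0, 10)

# The same behavior from 100 looping clients.
hundred_clients = upstream_queries_per_day(100, 1.0, 10)
```

Under these assumptions a single looping client produces 864,000 authoritative queries a day, and 100 such clients produce 86.4 million, even though the resolver respects its per-request limit every time.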

This is what Google’s Public DNS (GDNS) experienced in February 2020. As we saw in our previous study on Internet centralization, Google’s Public DNS sent far more A/AAAA queries to .nz than to .nl. They followed the RFCs, and yet looping clients caused GDNS to send large volumes of queries to the .nz authoritative servers.

Fixing the tsuNAME problem

A fix for tsuNAME depends on the part of the infrastructure in which you are located.

For resolver operators, the solution is simple: Resolvers should cache these looping records, so every new query sent by clients, forwarders, and stubs can be answered directly from cache, protecting authoritative servers. That’s what we proposed in this IETF draft, and it’s how Google Public DNS fixed it.
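The effect of such caching can be sketched in a few lines. This is a minimal illustration and not the mechanism specified in the draft or deployed by Google: the `LoopAwareCache` class, the 30-second TTL, and the `always_loops` upstream function are all hypothetical.

```python
from typing import Callable, Dict


class LoopAwareCache:
    """Remembers names that failed to resolve so repeated queries stay local."""

    def __init__(self, resolve_upstream: Callable[[str], str], ttl_seconds: float = 30.0):
        self._resolve_upstream = resolve_upstream
        self._ttl = ttl_seconds
        self._failures: Dict[str, float] = {}  # name -> cache expiry time
        self.upstream_resolutions = 0

    def query(self, name: str, now: float) -> str:
        expiry = self._failures.get(name)
        if expiry is not None and now < expiry:
            # Answered from cache: no traffic to authoritative servers.
            return "SERVFAIL"
        self.upstream_resolutions += 1
        answer = self._resolve_upstream(name)
        if answer == "SERVFAIL":
            self._failures[name] = now + self._ttl
        else:
            self._failures.pop(name, None)
        return answer


def always_loops(name: str) -> str:
    """Stand-in for a full resolution attempt that hits a cyclic dependency."""
    return "SERVFAIL"


cache = LoopAwareCache(always_loops, ttl_seconds=30.0)
# A looping client retries once per second for one minute.
answers = [cache.query("www.example.com", now=float(t)) for t in range(60)]
```

In this run the client still receives 60 SERVFAIL answers, but only two of them (at t=0 and t=30) cost a resolution attempt against the authoritative servers.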

Authoritative server operators should make sure there are no misconfigurations in zone files. To help with this, we have developed CycleHunter, an open-source tool that can detect such loops in DNS zones. We suggest operators regularly check their zones for loops.
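The underlying idea of loop detection can be shown as a search for cycles in the graph of NS dependencies. The sketch below is not CycleHunter's implementation; `find_cycle`, the toy `parent_zone` rule, and the input format are assumptions made for illustration. It also simplifies in one important way: it reports any cycle it finds, whereas a zone is only fully unresolvable when all of its name servers depend on a loop.

```python
from typing import Dict, List, Optional, Set


def parent_zone(hostname: str) -> str:
    """Toy rule: the zone of a host is the name with its first label removed."""
    return hostname.split(".", 1)[1]


def find_cycle(delegations: Dict[str, List[str]], start: str) -> Optional[List[str]]:
    """Depth-first search over NS dependencies; returns the zones on a cycle, if any."""
    path: List[str] = []
    on_path: Set[str] = set()
    visited: Set[str] = set()

    def visit(zone: str) -> Optional[List[str]]:
        if zone in on_path:
            # We came back to a zone we are still resolving: a cyclic dependency.
            return path[path.index(zone):] + [zone]
        if zone in visited or zone not in delegations:
            return None
        visited.add(zone)
        on_path.add(zone)
        path.append(zone)
        for ns_host in delegations[zone]:
            cycle = visit(parent_zone(ns_host))
            if cycle is not None:
                return cycle
        path.pop()
        on_path.discard(zone)
        return None

    return visit(start)


zones = {
    "example.com": ["cat.example.nl"],
    "example.nl": ["dog.example.com"],
    "ok.org": ["ns1.provider.net"],
}
looping = find_cycle(zones, "example.com")
healthy = find_cycle(zones, "ok.org")
```

Here `looping` holds the chain example.com, example.nl, example.com, while `healthy` is `None` because the name server of ok.org does not depend on any zone in the table.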

Responsible disclosure

We were able to reproduce tsuNAME in various scenarios and from various vantage points, including RIPE Atlas and a sinkhole. We found more than 3,600 resolvers from 2,600 Autonomous Systems that were vulnerable to tsuNAME. Check our paper for details.

We notified Google, Cisco, and multiple other parties in early 2021. We gave all parties enough time to fix their bugs and then publicly disclosed tsuNAME on 6 May 2021.

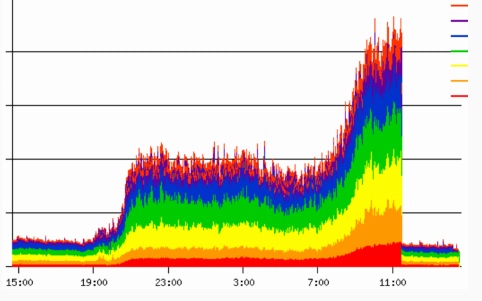

After the disclosure, several TLD operators let us know they had been victims of tsuNAME-related events in the past. One of them, an EU-based ccTLD operator, was kind enough to share data on their traffic (Figure 4). They saw 10 times more traffic during a tsuNAME event.

What’s next?

We’ve shown that despite being well known, loops in DNS zones are still a problem and current RFCs do not fully cover it.

We will continue to work on a revised version of our Internet draft and incorporate feedback from the community. Regardless of what happens to the draft, we are happy to have helped Google and Cisco protect their public DNS servers against this vulnerability, helping to make the Internet slightly safer.

Contributors: Sebastian Castro, John Heidemann, Wes Hardaker

Giovane Moura is a Data Scientist with SIDN Labs.

The views expressed by the authors of this blog are their own and do not necessarily reflect the views of APNIC. Please note a Code of Conduct applies to this blog.